Likelihood Ratio Test for Publication Bias

Likelihood Ratio Test for Publication Bias: a statistical method to detect and quantify publication bias in heterogeneous datasets.
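The description above does not say which likelihood ratio test is meant. One common approach uses a selection model. The sketch below is a simplified, fixed-effect step-function selection model, which is the idea behind the three-parameter selection model. In it, significant results are published with weight 1 and non-significant ones with relative weight `w`, and the test compares `w` estimated freely against `w = 1` (no selection). Several choices here are assumptions for illustration, not a reference implementation: the one-sided cutoff z = 1.96, the grid over `w`, the golden-section search, and the hypothetical names `loglik` and `selection_lrt`. The model also ignores heterogeneity; a random-effects version would add a between-study variance parameter. The statistic is referred to a chi-square distribution with 1 degree of freedom, which is only an asymptotic approximation.

```python
import math

# Assumptions for this sketch (not from the source): one-sided selection at
# z = 1.96, a common (fixed) true effect, and known standard errors.
Z_CRIT = 1.96


def norm_sf(x):
    """Upper-tail probability of the standard normal distribution."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def loglik(mu, w, y, se):
    """Log-likelihood of a fixed-effect step-function selection model.

    Significant studies (y/se > Z_CRIT) get publication weight 1 and
    non-significant ones weight w; w = 1 means no selection at all.
    """
    total = 0.0
    for yi, si in zip(y, se):
        # Normal density of the observed estimate.
        total += -0.5 * math.log(2 * math.pi) - math.log(si) - 0.5 * ((yi - mu) / si) ** 2
        # Publication weight of the observed result.
        if yi / si <= Z_CRIT:
            total += math.log(w)
        # Normalizing constant: expected weight over all possible outcomes.
        p_sig = norm_sf((Z_CRIT * si - mu) / si)
        total -= math.log(p_sig + w * (1.0 - p_sig))
    return total


def golden_max(f, lo, hi, tol=1e-6):
    """Maximize a unimodal function on [lo, hi] by golden-section search."""
    g = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c, d = b - g * (b - a), a + g * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:
            b, d, fd = d, c, fc
            c = b - g * (b - a)
            fc = f(c)
        else:
            a, c, fc = c, d, fd
            d = a + g * (b - a)
            fd = f(d)
    x = (a + b) / 2.0
    return x, f(x)


def selection_lrt(y, se):
    """Hypothetical LRT of H0: w = 1 (no selection) against w estimated."""
    prec = [1.0 / s ** 2 for s in se]
    mu0 = sum(p * yi for p, yi in zip(prec, y)) / sum(prec)  # closed-form MLE under H0
    ll0 = loglik(mu0, 1.0, y, se)
    # Profile the likelihood over a log-spaced grid of w (0.01 to about 6.5).
    w_grid = sorted({0.01 * 1.25 ** k for k in range(30)} | {1.0})
    lo, hi = min(y) - 5 * max(se), max(y) + 5 * max(se)
    ll1, mu1, w1 = ll0, mu0, 1.0
    for w in w_grid:
        mu, ll = golden_max(lambda m: loglik(m, w, y, se), lo, hi)
        if ll > ll1:
            ll1, mu1, w1 = ll, mu, w
    stat = max(0.0, 2.0 * (ll1 - ll0))
    p = math.erfc(math.sqrt(stat / 2.0))  # upper tail of chi-square with 1 df
    return {"stat": stat, "p": p, "mu_null": mu0, "mu_sel": mu1, "w_sel": w1}


if __name__ == "__main__":
    se = [0.1, 0.15, 0.2, 0.25, 0.3, 0.35, 0.4, 0.45, 0.5, 0.55]
    y = [2.1 * s for s in se]  # every study just clears z = 1.96
    print(selection_lrt(y, se))
```

In the usage example, every study lands just above z = 1.96, which is the pattern selection produces. The test should therefore return a small p-value and a `w_sel` well below 1. Profiling over a grid keeps the sketch dependency-free. A real analysis would use a proper optimizer and an established meta-analysis package.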

#statstab #469 We should focus on the biases that matter

Thoughts: I recommend this entire issue, including the discussions and the reply. They offer great insight into how science develops.

#metascience #bias #publicationbias #QRPs #TES

http://dx.doi.org/10.1016/j.jmp.2013.06.001

#Kolumne

"I have not failed; I have just found 10,000 ways that do not work."

T. A. Edison already knew it: negative results also yield insight. So why do researchers still struggle to publish null results today? A new survey by Springer Nature shows a surprisingly large gap between insight and action. More on this from Ralf Neumann: https://www.laborjournal.de/editorials/3396.php

#Laborjournal #Lifesciences #Forschung #NullResults #PublicationBias

Hi #AcademicChatter, what is a good paper to cite that highlights publication bias toward positive findings (at least in #Neuroscience or #Psychology; I don't know how widespread the problem is elsewhere)?

#PublicationBias #Research #Academia

Assumption Checking Prevents Assumption Hacking in Multiverse Meta-Analyses - Replicability-Index

In a nutshell: Statistical methods for meta-analyses make different assumptions. For this reason, different methods can produce different results with the same data. Meta-analysts often struggle to make sense of these inconsistent results. A simple solution to this problem is to test testable assumptions. Key assumptions that influence results are publication bias and heterogeneity of …
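One testable assumption named above is heterogeneity. A classical check is Cochran's Q, together with the derived I² and the DerSimonian-Laird estimate of the between-study variance τ². The sketch below is a minimal, dependency-free version and not the method used in the linked post. The function names are hypothetical, and `chi2_sf` only handles integer degrees of freedom; with SciPy installed, `scipy.stats.chi2.sf` does the same job.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square with integer df >= 1."""
    z = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-z) * sum_{j=0}^{df/2 - 1} z^j / j!
        term, s = 1.0, 1.0
        for j in range(1, df // 2):
            term *= z / j
            s += term
        return math.exp(-z) * s
    # Odd df: erfc(sqrt(z)) + exp(-z) * sum_{j=1}^{(df-1)/2} z^(j - 1/2) / Gamma(j + 1/2)
    total = math.erfc(math.sqrt(z))
    term = math.sqrt(z) / math.gamma(1.5)
    for j in range(1, (df - 1) // 2 + 1):
        total += math.exp(-z) * term
        term *= z / (j + 0.5)
    return total


def heterogeneity(y, se):
    """Hypothetical helper: Cochran's Q, its p-value, I^2 and DerSimonian-Laird tau^2."""
    if len(y) < 2:
        raise ValueError("need at least two studies")
    w = [1.0 / s ** 2 for s in se]                   # inverse-variance weights
    sw = sum(w)
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sw   # fixed-effect mean
    q = sum(wi * (yi - mu) ** 2 for wi, yi in zip(w, y))
    df = len(y) - 1
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0    # share of variation beyond chance
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)                    # truncated at zero
    return {"Q": q, "df": df, "p": chi2_sf(q, df), "I2": i2, "tau2": tau2, "mu_fixed": mu}


if __name__ == "__main__":
    print(heterogeneity([0.0, 1.0], [0.1, 0.1]))
```

In the example, two precise studies disagree sharply, so Q is large and I² is close to 1. Identical estimates would give Q = 0 and I² = 0.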

#statstab #351 Revisiting the replication crisis without false positives

Thoughts: Improving how we do things requires a careful examination of what the problems are (and aren't).

#replication #critical #publicationbias #crisis #metascience

https://osf.io/preprints/socarxiv/rkyf7_v1

In light of a recent article (https://doi.org/10.1186/s12915-024-02101-x) showing substantial heterogeneity among analysts, we tested the robustness of our findings to several analytical decisions. Our results were consistent across them.

Also, although evidence for small-study & decline effects is widespread (https://doi.org/10.1186/s12915-022-01485-y), we did NOT find clear evidence of #publicationbias in our dataset, presumably thanks to our efforts to obtain unreported results directly from authors.

📰 https://doi.org/10.1111/ele.70100
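The post above mentions small-study effects. One widely used check for them is Egger's regression test, which is not necessarily the method the authors used. The test regresses each study's standardized effect y/se on its precision 1/se. An intercept far from zero suggests funnel-plot asymmetry, which is consistent with publication bias but can also have other causes. Below is a minimal sketch with the hypothetical function name `egger_test`. To stay dependency-free, it leaves out the p-value, which would come from a t distribution with k - 2 degrees of freedom.

```python
import math


def egger_test(y, se):
    """Hypothetical helper: Egger's regression test for funnel-plot asymmetry.

    Regresses the standardized effect y/se on precision 1/se by ordinary least
    squares. The intercept estimates asymmetry; compare t with a t distribution
    on k - 2 degrees of freedom.
    """
    k = len(y)
    if k < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1.0 / s for s in se]                   # precision
    z = [yi / s for yi, s in zip(y, se)]        # standardized effect
    xbar, zbar = sum(x) / k, sum(z) / k
    sxx = sum((xi - xbar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("standard errors must differ across studies")
    sxz = sum((xi - xbar) * (zi - zbar) for xi, zi in zip(x, z))
    slope = sxz / sxx                           # estimates the underlying effect
    intercept = zbar - slope * xbar             # asymmetry estimate
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    sigma2 = rss / (k - 2)                      # residual variance
    se_int = math.sqrt(sigma2 * (1.0 / k + xbar ** 2 / sxx))
    t = intercept / se_int if se_int > 0 else float("nan")
    return {"intercept": intercept, "se": se_int, "t": t, "df": k - 2, "slope": slope}


if __name__ == "__main__":
    print(egger_test([1.0, 1.5, 2 / 3, 1.0], [1.0, 0.5, 1 / 3, 0.25]))
```

With only a handful of studies, the test has little power. A non-significant intercept is therefore weak evidence that bias is absent.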

Same data, different analysts: variation in effect sizes due to analytical decisions in ecology and evolutionary biology - BMC Biology

Although variation in effect sizes and predicted values among studies of similar phenomena is inevitable, such variation far exceeds what might be produced by sampling error alone. One possible explanation for variation among results is differences among researchers in the decisions they make regarding statistical analyses. A growing array of studies has explored this analytical variability in different fields and has found substantial variability among results despite analysts having the same data and research question. Many of these studies have been in the social sciences, but one small “many analyst” study found similar variability in ecology. We expanded the scope of this prior work by implementing a large-scale empirical exploration of the variation in effect sizes and model predictions generated by the analytical decisions of different researchers in ecology and evolutionary biology. We used two unpublished datasets, one from evolutionary ecology (blue tit, Cyanistes caeruleus, to compare sibling number and nestling growth) and one from conservation ecology (Eucalyptus, to compare grass cover and tree seedling recruitment). The project leaders recruited 174 analyst teams, comprising 246 analysts, to investigate the answers to prespecified research questions. Analyses conducted by these teams yielded 141 usable effects (compatible with our meta-analyses and with all necessary information provided) for the blue tit dataset, and 85 usable effects for the Eucalyptus dataset. We found substantial heterogeneity among results for both datasets, although the patterns of variation differed between them. For the blue tit analyses, the average effect was convincingly negative, with less growth for nestlings living with more siblings, but there was near continuous variation in effect size from large negative effects to effects near zero, and even effects crossing the traditional threshold of statistical significance in the opposite direction. 
In contrast, the average relationship between grass cover and Eucalyptus seedling number was only slightly negative and not convincingly different from zero, and most effects ranged from weakly negative to weakly positive, with about a third of effects crossing the traditional threshold of significance in one direction or the other. However, there were also several striking outliers in the Eucalyptus dataset, with effects far from zero. For both datasets, we found substantial variation in the variable selection and random effects structures among analyses, as well as in the ratings of the analytical methods by peer reviewers, but we found no strong relationship between any of these and deviation from the meta-analytic mean. In other words, analyses with results that were far from the mean were no more or less likely to have dissimilar variable sets, use random effects in their models, or receive poor peer reviews than those analyses that found results that were close to the mean. The existence of substantial variability among analysis outcomes raises important questions about how ecologists and evolutionary biologists should interpret published results, and how they should conduct analyses in the future.

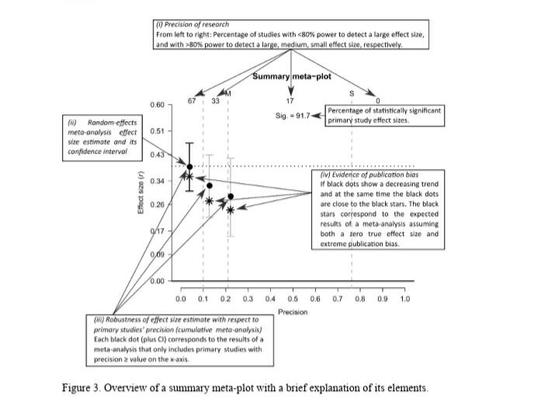

#statstab #277 The meta-plot: A graphical tool for interpreting the results of a meta-analysis

Thoughts: Most meta-analysis plots are confusing. Maybe this one'll resonate with readers.

#metaanalysis #dataviz #plots #visualisation #publicationbias #bias

https://osf.io/preprints/psyarxiv/cwhnq_v1

I was surprised by which #teaching and #studying methods seemed ineffective when tested.

After reviewing some #education #research in my library, I listed seemingly ineffective and seemingly effective techniques, and then explained how trendy techniques can become overrated by #publicationBias and #conflictsOfInterest.

Parents, educators, and students may be surprised by my latest at #PsychologyToday:

https://www.psychologytoday.com/us/blog/upon-reflection/202404/which-trendy-teaching-techniques-actually-work

#higherEd #edu #parenting #psychology #decisionScience #cogSci #BTS

Which Trendy Teaching Techniques Actually Work?

How many common teaching techniques have actually been tested? Of those that have been tested, what were the results? The answers may surprise you. They surprised this educator!

A small rant about zombie ideas and the tendency to keep looking for modifications of study methods to avoid concluding that a null result is really null.

http://deevybee.blogspot.com/2024/03/just-make-it-stop-when-will-we-say-that.html

#research #nullresults #laterality #handedness #publicationbias

Just make it stop! When will we say that further research isn't needed?

I have a lifelong interest in laterality, which is a passion that few people share. Accordingly, I am grateful to René Westerhausen who ...