The Consciousness Trilogy: Reading Three Wagers on the Question We Cannot Settle

This page exists for readers who want a map of the consciousness sequence published on BolesBlogs in the spring of 2026. Three articles, taken together, cover the contemporary terrain on the deepest question philosophy still asks. Each can be read alone. Read in sequence, they form a coordinated treatment of the consciousness problem that points beyond any single solution toward what the field as a whole has and has not accomplished.

The problem itself is older than philosophy as a discipline. We know that we are conscious because we are reading these words and something is happening as we read them. We extend that knowledge to other people, to animals, and possibly to stones, on grounds that work in practice while collapsing in theory. David Chalmers named the difficulty the hard problem of consciousness in his 1995 paper “Facing Up to the Problem of Consciousness,” and the difficulty has not been resolved in the thirty-one years since. Why does any arrangement of physical stuff feel like something from the inside? Why does any neural configuration produce the experience of redness, sourness, dread, or hope? No materialist account has explained this convincingly, and the standard moves to dissolve the question have taken one of three forms: denying that consciousness exists in the way we ordinarily mean (illusionism), extending consciousness to every level of organization (panpsychism), or making consciousness the only substrate with matter as its appearance (analytic idealism). The three articles treat each of these alternatives in turn, by way of its strongest contemporary defender.

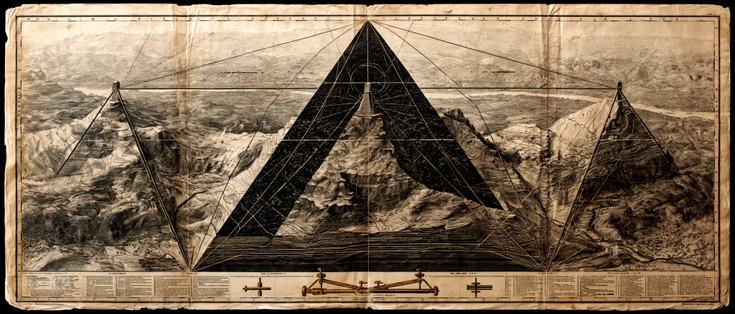

The first article, The Inwardness of Things: McGilchrist, Panpsychism, and the Question We Cannot Settle, takes up Iain McGilchrist’s 2021 book The Matter With Things and his proposal that matter is one phase of consciousness rather than its source, the way ice and vapor are phases of water. It evaluates his case, with credit for what works and pressure on what fails, and concludes that panpsychism remains a serious option whose central difficulty (the combination problem of how micro-experiences merge into macro-experiences) has not been adequately addressed in McGilchrist’s work or in the panpsychist tradition more broadly.

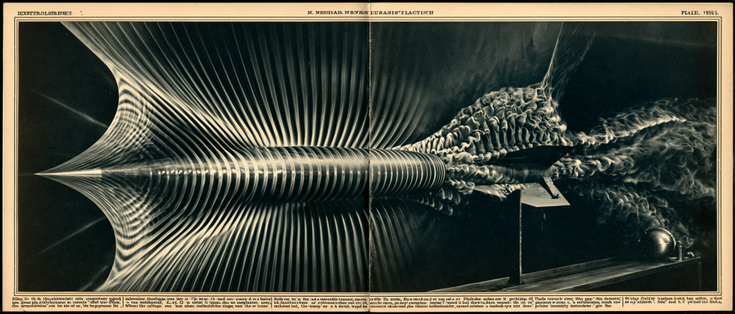

The second article, Consciousness Explained Away: Daniel Dennett’s Illusionism and the Theory That Spends Its Own Foundation, considers Dennett’s lifelong project of arguing that phenomenal consciousness as ordinarily conceived does not exist. It gives Dennett full credit for his demolition of the Cartesian Theater and his contributions to cognitive science, while showing why the central illusionist claim (that consciousness is a user illusion the brain stages for itself) collapses on close inspection because illusions presuppose conscious subjects to whom they appear. Written in the wake of Dennett’s death in April 2024, the piece tries to argue with him at the level of seriousness his work always demanded.

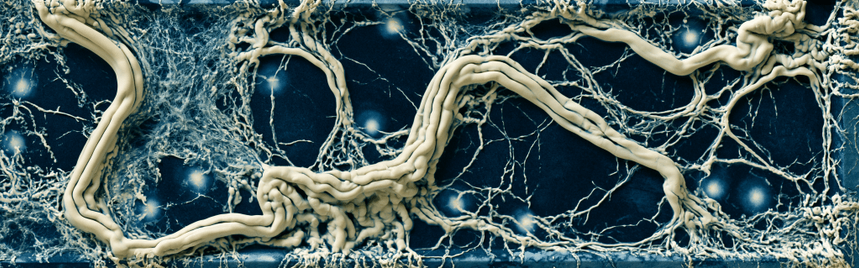

The third article, The Dissociated Universe: Bernardo Kastrup’s Analytic Idealism and the Mind That Contains the World, examines Bernardo Kastrup’s claim that reality is mental at base, with individual minds being dissociated alters of universal consciousness, comparable to the alternate personalities that appear in cases of Dissociative Identity Disorder (DID). It presents Kastrup’s strongest moves, including the empirical work on psychedelics, near-death experiences, and quantum measurement, and tests them against the difficulties his position inherits, including the decombination problem, the contested status of DID as a clinical category, and the challenge of accounting for the resistance the world offers to subjective will. It closes by drawing the three positions together and showing what the trilogy as a whole accomplishes.

Several reading paths are available, depending on what the reader brings to the sequence.

A reader new to philosophy of mind should start with the first article. McGilchrist provides the easiest entry into the territory because his prose is generous and his analogies accessible, and the article’s analysis demonstrates the analytical method that the next two articles will apply to harder cases. Read the second article next for the materialist counter-position, then the third for the closing turn that completes the triangulation.

Readers already familiar with the consciousness debate can take the articles in any order, since each contains a self-contained treatment of its primary subject. The third article’s closing section synthesizes all three positions and may serve as a useful entry point for the impatient reader, who can then proceed to whichever individual article most interests her.

Skeptics of the entire enterprise should start with the second article. Dennett offers the most aggressive case against making the consciousness question a serious metaphysical issue, and the article’s evaluation of why his case nonetheless fails will give the skeptical reader a more accurate sense of why the question persists than any defense of consciousness as fundamental could provide.

Readers of theological or contemplative orientation will find the third article most directly engaged with positions that have been held in non-Western contemplative traditions for thousands of years. Kastrup himself acknowledges the affinity between analytic idealism and Advaita Vedanta, and the article’s treatment of his arguments may help such readers see how a contemporary philosopher with two doctorates and a CERN background defends positions that might otherwise be dismissed as mystical.

What the trilogy as a whole accomplishes is a map of the contemporary terrain detailed enough that a reader can see why the consciousness problem remains genuinely open after three centuries of modern philosophy and two and a half millennia of pre-modern reflection. None of the three thinkers has solved the problem. Each has identified real difficulties in the others. The honest verdict is that the consciousness question may not be solvable by argument alone, and that the next generation of work in this area will need to go beyond the choice among materialism, panpsychism, illusionism, and idealism, and find some way of asking the question that the current frame cannot accommodate.

That said, the trilogy demonstrates what philosophy at its best can do. The standard runs through every article: identify what works, press what fails, name what survives. The discipline involves refusing to settle prematurely and refusing to mystify when settling becomes impossible. Readers who follow the sequence to its end will walk away with sharper questions and fewer false certainties than when they began, which is what serious reading is supposed to do.

A note on the wager metaphor used throughout the trilogy. Each of the three thinkers placed a bet about what consciousness is and what it requires. McGilchrist bet that consciousness reaches all the way down into matter as one of its phases. Dennett bet that consciousness as ordinarily conceived does not exist and that the appearance of inwardness is a user illusion the brain stages for itself. Kastrup bet that consciousness is the only thing there is and that matter is its appearance under conditions of dissociation. Each wager was placed honorably and pursued with rigor. None has paid off in the sense the bettor intended. All three have produced philosophical work that will outlast the lifetimes of those who placed the bets, which is the most honest verdict serious reading can deliver about serious thinkers who have committed themselves to questions that exceed what any single mind can resolve.

The articles run between two thousand seven hundred and three thousand four hundred words each. Each was written for a university-educated audience that respects the difficulty of the question and is willing to follow careful argument to its conclusions. Each is available in its original Markdown format. The position taken throughout the trilogy is that getting the question right matters more than choosing a winner among the available answers, and that the best service we can render to the question is to pass it forward in better condition than we found it.

The three articles, in order:

ARTICLE ONE The Inwardness of Things: McGilchrist, Panpsychism, and the Question We Cannot Settle

ARTICLE TWO Consciousness Explained Away: Daniel Dennett’s Illusionism and the Theory That Spends Its Own Foundation

ARTICLE THREE The Dissociated Universe: Bernardo Kastrup’s Analytic Idealism and the Mind That Contains the World

Read alone, each article offers a treatment of its primary subject that does not depend on the others. Read together, the three form a synoptic account of where the contemporary consciousness debate stands and why the answers currently available leave the question genuinely open. The reader who completes the sequence will know more about the topography of the problem than most working philosophers do, and will be in a position to evaluate future contributions to the debate with the analytical tools the trilogy has put in place.

That is what philosophy at its best can offer. The trilogy is offered in that spirit.
