"ZFS with a striped configuration can be more space-efficient compared to other setups like RAIDZ, as it does not require additional space for parity. However, the actual usable space will depend on the number of disks and the specific configuration used."

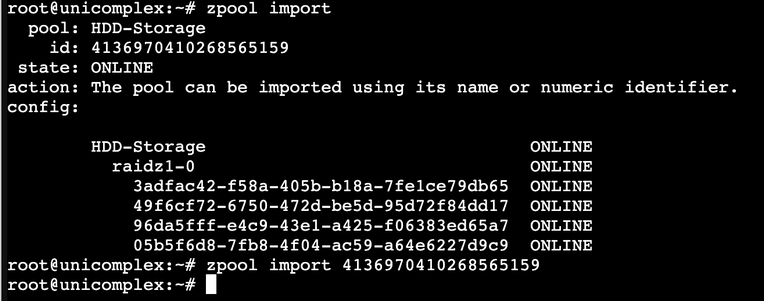

With this in mind, I started migrating data from a striped #zpool to a #Raidz1 pool.

There was around 10TB of data to move, but once it was all copied over it took up around 20TB on the two-drive raidz1. Some #BlackMagicFuckery.
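Part of the "magic" is likely plain parity arithmetic. In a RAIDZ vdev, each stripe row holds (disks − parity) data sectors plus the parity sectors, so raw allocation scales by disks / (disks − parity). With only two drives in raidz1, every data sector gets one parity sector, which doubles the raw footprint. This is the same usable capacity as a two-way mirror. If the 20TB figure came from `zpool list`, which counts parity in ALLOC for RAIDZ vdevs (unlike `zfs list`), that would match the 2× factor. The helper below is a hypothetical back-of-the-envelope sketch that ignores RAIDZ padding, compression, and metadata:

```python
def raidz_raw_allocation(data_tb: float, disks: int, parity: int = 1) -> float:
    """Approximate raw space a RAIDZ vdev allocates for `data_tb` of data.

    Hypothetical helper for illustration. Each stripe row stores
    (disks - parity) data sectors plus `parity` parity sectors, so raw usage
    scales by disks / (disks - parity). RAIDZ padding, compression and
    metadata overhead are deliberately ignored.
    """
    if disks <= parity:
        raise ValueError("need more disks than parity devices")
    return data_tb * disks / (disks - parity)


# Compare how much raw space 10 TB of data needs at different raidz1 widths.
for n in (2, 3, 4, 6):
    print(f"{n}-disk raidz1: 10 TB of data -> ~{raidz_raw_allocation(10, n):.1f} TB raw")
```

The wider the raidz1 vdev, the closer the raw usage gets to a plain stripe (factor 1.0); a two-disk raidz1 is the worst case at 2.0.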