One ZAP entry. One uint64_t in spa_t. One new CLI flag.

That's all it took to teach #OpenZFS to skip blocks the last scrub already verified.

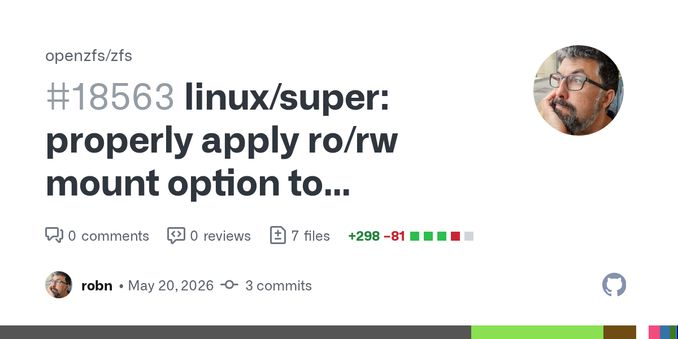

`zpool scrub -C` ships in 2.4.0.

Don't Make ZFS Re-Read What It Just Read

I already described one of my scrub improvements in an earlier post, "Teaching ZFS about time". There I also argued that scrub can be expensive, and that we were looking for ways to limit how much data a scrub actually has to read. On a multi-petabyte pool a scrub can run for days, and some of us want a smaller, cheaper check that everything is still healthy.