The striped pool consists of two drives as well. In the end, I should have a #Raidz1 pool with 4 drives, finally with the same size.

"ZFS with a striped configuration can be more space-efficient compared to other setups like RAIDZ, as it does not require additional space for parity. However, the actual usable space will depend on the number of disks and the specific configuration used."

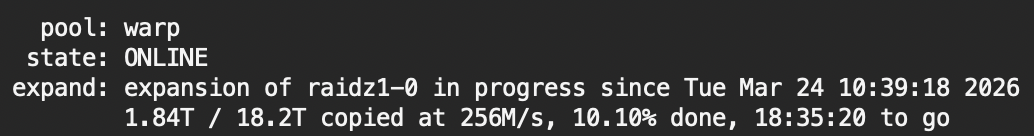

With this in mind, I started to migrate data from striped #zpool to #Raidz1

There was around 10TB of data to move. But after moving all that data, it took around 20TB on a two-drive raidz1. Some #BlackMagicFuckery
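One possible explanation for the doubling, offered as an assumption rather than a diagnosis: a two-drive raidz1 stores one parity sector for every data sector, so it behaves much like a mirror, and `zpool list` reports raw allocation with parity included. A rough sketch of that arithmetic follows. The function name is hypothetical, and the estimate ignores compression, padding, and metadata:

```python
def raw_allocated_tb(data_tb: float, n_disks: int, parity: int = 1) -> float:
    """Estimate raw space consumed on a raidz vdev, parity included.

    Simplified model (an assumption): every stripe spreads data over
    (n_disks - parity) disks and adds `parity` disks' worth of parity.
    """
    if n_disks <= parity:
        raise ValueError("need more disks than parity devices")
    data_disks = n_disks - parity
    return data_tb * n_disks / data_disks


# Two-drive raidz1: one data disk plus one parity disk per stripe.
print(raw_allocated_tb(10, 2))
# Four-drive raidz1: three data disks plus one parity disk per stripe.
print(raw_allocated_tb(10, 4))
```

Under this model the same 10TB would occupy noticeably less raw space once the raidz1 has four drives, because parity is then shared across three data disks.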

Started #zpool shenanigans. I'm switching from RAIDZ1 to Stripe storage type and want to remove two old, smaller drives. At the end, it should be one of these:

1. Same storage size, less power consumption, colder NAS, faster storage, and two empty drive bays.

2. Lost data, fire, and explosions.

Migrating a ZFS pool from RAIDZ1 to RAIDZ2

#petermarbaise #tuxoche #openmediavault #zfs #raidz1 #pool #migration

http://tuxo.li/1mj

Horror story in two lines:

```
Device: /dev/ada0, 24 Currently unreadable (pending) sectors.
Device: /dev/ada0, 24 Offline uncorrectable sectors.
```

Y'all, if you have a #fileserver, always have a parity disk in the #raid volume. ALWAYS. They have saved my ass so many times. And, for the love of all that is righteous, make offline backups of your precious volumes.

Today is being spent on making my #TrueNAS server healthy again.

Next steps in selfhosting: getting needed hardware

I had saved searches in place at a few second-hand shops for RAM modules, and finally got to order the 8+8GB RAM modules for the #dell #wyse5070 at a cheap price

#nvme to #usb3 adapters have been ordered too for the #raidz1 setup I intend to create (disks still not bought, tho)

And I'm getting quite convinced to go with #freebsd on #zfs and #bastillebsd for running the services

First time in the BSD world, I am coming! :D

One of the ways I help avoid failures with raidz1 is by replacing HDDs when they get to around 5 years old, or when they start showing errors. Also, monthly scrubs help ensure that the drives are getting fully utilized regularly, which helps keep things healthy and accelerates detection of failures.

For whatever reason, #resilver on my #FreeBSD server slowed down continuously. An initial ETA showed around 4 hours; by the time I left my desktop, it already showed around 10 hours. But hey, it finished without errors, so, all fine 😅

BTW, I really don't get why recently, you read a lot of stuff about how #RAID-5 (or the #ZFS equivalent #raidz1) was super dangerous and you should never use it 🫨

What's certainly true is: With larger pools and larger individual disks, the risk of a second disk failure during resilver significantly increases. But then, there's no "risk-free" storage, so a #backup is always a *must*.
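To put a rough number on that resilver risk, here is a back-of-the-envelope sketch. Everything in it is an assumption for illustration: the function name, the 2% annual failure rate, and the model itself, which treats disk failures as independent with a constant rate. Real disks from the same batch tend to fail in a correlated way, and unrecoverable read errors are not modelled at all.

```python
import math


def second_failure_probability(n_disks: int, resilver_hours: float,
                               afr: float = 0.02) -> float:
    """Chance that at least one surviving disk fails during a resilver.

    Assumes independent failures at a constant rate derived from `afr`
    (annual failure rate, a made-up default of 2%).
    """
    hourly_rate = -math.log(1.0 - afr) / 8760.0  # 8760 hours per year
    survivors = n_disks - 1  # one disk has already failed
    return 1.0 - math.exp(-survivors * hourly_rate * resilver_hours)


# More disks and longer resilvers both push the probability up.
for disks, hours in [(3, 4), (4, 10), (8, 48)]:
    p = second_failure_probability(disks, hours)
    print(f"{disks} disks, {hours} h resilver: {p:.4%}")
```

The absolute values depend entirely on the assumed failure rate. The point is only the trend: the risk grows with both the number of surviving disks and the time the resilver takes.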

What's also true is, raid-5/raidz1 is still the redundancy scheme with the least storage overhead for most scenarios (3 and more disks). And of course it still reduces the risk of a failed pool. This pool here has only one of its original disks left, I didn't need my backup so far. 🤷
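The overhead claim is easy to check with a few lines. This is a simplified sketch with hypothetical names, assuming equal-sized disks and ignoring padding and metadata. It compares the share of raw capacity spent on redundancy for raidz1, raidz2, and two-way mirrors:

```python
def parity_overhead(n_disks: int, parity: int) -> float:
    """Share of raw capacity spent on parity in a single raidz vdev."""
    if n_disks <= parity:
        raise ValueError("need more disks than parity devices")
    return parity / n_disks


# A two-way mirror always spends half of its raw capacity on the copy.
MIRROR_OVERHEAD = 0.5

for n in range(3, 7):
    print(f"{n} disks: raidz1 {parity_overhead(n, 1):.0%}, "
          f"raidz2 {parity_overhead(n, 2):.0%}, "
          f"mirror {MIRROR_OVERHEAD:.0%}")
```

From three disks upward, raidz1 spends less on redundancy than mirroring, and the gap widens as disks are added.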

So please move the discussion of RAID back to a sensible base. The scheme/level you choose is always a trade-off between the cost (overhead) and the amount of risk reduction, and that's pretty much all there is....