Sebastian Raschka (@rasbt)

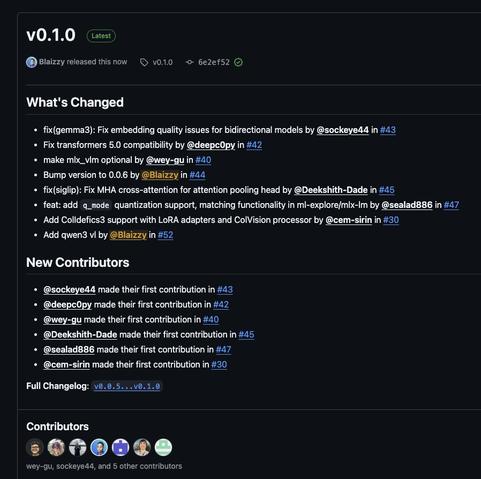

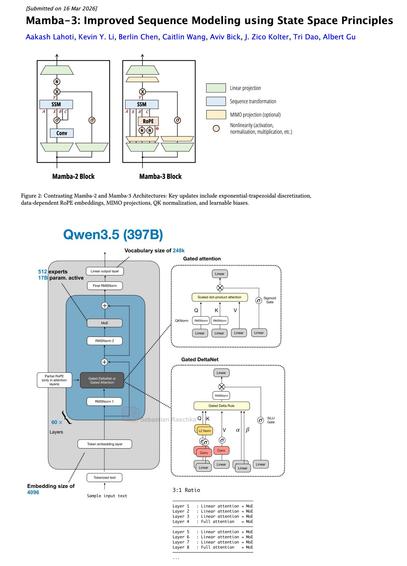

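Mamba-3 has been released. The author sees the recent transformer attention hybrid architectures (Qwen3.5, Kimi Linear, etc.) as the most interesting use case for Mamba and Mamba-like models, and suggests an experiment for next-generation hybrids: swapping Gated DeltaNet for Mamba-3, which now also has RoPE.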

Sebastian Raschka (@rasbt) on X

Oh wow, Mamba-3 is here! For me, the most interesting use case of Mamba and Mamba-likes are the recent transformer attention hybrid architectures (Qwen3.5, Kimi Linear, etc.) Would be interesting to swap Gated DeltaNet with Mamba-3 (which now also has RoPE) in next gen hybrids.
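To make the proposed swap concrete, here is a minimal sketch of how a hybrid stack might pick a token mixer per layer. It assumes a hypothetical layout in which every fourth layer uses full attention and the remaining layers use a linear-time mixer. The function name `build_hybrid_schedule`, the `attention_every` ratio, and the mixer labels are all illustrative assumptions, not the configuration of Qwen3.5, Kimi Linear, or any released model.

```python
from typing import List


def build_hybrid_schedule(
    num_layers: int,
    linear_mixer: str,
    attention_every: int = 4,  # assumed ratio, chosen only for illustration
) -> List[str]:
    """Return the token-mixer name used at each layer of a hybrid stack.

    Every `attention_every`-th layer uses full attention; all other layers
    use `linear_mixer`. The names are plain labels, not real module classes.
    """
    if num_layers <= 0 or attention_every <= 0:
        raise ValueError("num_layers and attention_every must be positive")
    return [
        "attention" if (i + 1) % attention_every == 0 else linear_mixer
        for i in range(num_layers)
    ]


# Baseline hybrid versus the swap suggested in the post.
baseline = build_hybrid_schedule(8, "gated_deltanet")
swapped = build_hybrid_schedule(8, "mamba3")

for layer, (old, new) in enumerate(zip(baseline, swapped)):
    print(f"layer {layer}: {old} -> {new}")
```

In this sketch the attention layers stay where they are and only the linear-mixer slots change, which is the kind of drop-in replacement the post has in mind.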