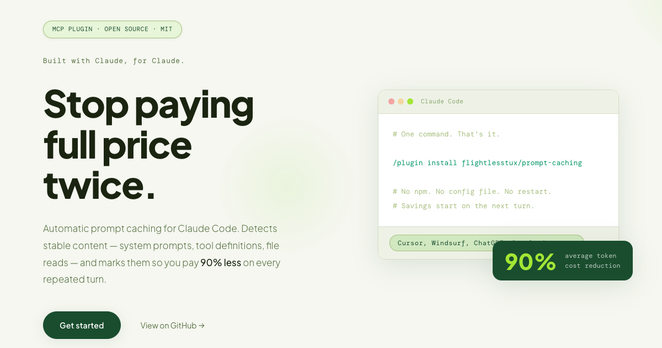

I added prompt caching to my Anthropic Batch API workflow. The hit rate was 0%.

Each model has a minimum cacheable prompt length: 4,096 tokens for Haiku 4.5. If the prompt prefix ending at your `cache_control` breakpoint is shorter than that, the API silently skips caching. Successful response, zero cache reads, no warning.
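A minimal sketch of where the breakpoint sits in a Message Batches request entry, and how to spot the silent skip afterwards. Both cache counters in the response's `usage` block stay at zero when caching was skipped. The model alias `claude-haiku-4-5`, the `max_tokens` value and the helper names `build_batch_request` / `caching_happened` are illustrative assumptions, not part of the original workflow.

```python
from typing import Any

# Assumption: illustrative model alias; substitute the exact model ID you use.
MODEL = "claude-haiku-4-5"


def build_batch_request(custom_id: str, system_text: str, user_text: str) -> dict[str, Any]:
    """Hypothetical helper: one Message Batches entry with a cache breakpoint on the system prompt."""
    return {
        "custom_id": custom_id,
        "params": {
            "model": MODEL,
            "max_tokens": 256,  # illustrative value
            "system": [
                {
                    "type": "text",
                    "text": system_text,
                    # Everything up to and including this block is the cache prefix.
                    # If that prefix is below the model's minimum, caching is skipped without an error.
                    "cache_control": {"type": "ephemeral"},
                }
            ],
            "messages": [{"role": "user", "content": user_text}],
        },
    }


def caching_happened(usage: dict[str, Any]) -> bool:
    """Hypothetical helper: True if the usage block shows a cache write or a cache read."""
    created = usage.get("cache_creation_input_tokens") or 0
    read = usage.get("cache_read_input_tokens") or 0
    return created > 0 or read > 0


# Hypothetical usage block for a below-threshold request: the call succeeded, but no cache activity.
silent_skip = {"cache_creation_input_tokens": 0, "cache_read_input_tokens": 0}
print(caching_happened(silent_skip))  # False
```

If you read results through the SDK's response objects instead of raw JSON, the same two counters are available as attributes on `usage`.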

My IAB taxonomy prompt was 1,064 tokens. Well under the threshold.
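The gap is easy to quantify before submitting a batch: 1,064 tokens is about a quarter of 4,096, leaving the prefix 3,032 tokens short. A minimal pre-flight check, assuming you already have a token count for the cached prefix (one way to get it is the token-counting endpoint, `client.messages.count_tokens` in Anthropic's Python SDK). The threshold table holds only the Haiku 4.5 figure from this post; the `claude-haiku-4-5` key and the `cache_shortfall` name are assumptions for illustration.

```python
# Minimum cacheable prefix length per model. Only the Haiku 4.5 value comes from this post;
# add other models yourself from Anthropic's prompt caching documentation.
MIN_CACHEABLE_TOKENS = {"claude-haiku-4-5": 4096}  # key is an assumed model alias


def cache_shortfall(prefix_tokens: int, model: str) -> int:
    """Hypothetical helper: tokens still missing before the prefix can be cached (0 if long enough)."""
    minimum = MIN_CACHEABLE_TOKENS[model]
    return max(0, minimum - prefix_tokens)


print(cache_shortfall(1064, "claude-haiku-4-5"))  # 3032
```

Failing the batch build when the shortfall is non-zero turns the silent skip into a visible one.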

Full write-up on Qiita.