#ArtificialIntelligence #Artificalintelligence #Largelanguagemodels #Statistics

Frontier LLMs Attempt to Persuade into Harmful Topics

Large language models (LLMs) are already more persuasive than humans in many domains. While this power can be used for good, like helping people quit smoking, it also presents significant risks, such as large-scale political manipulation, disinformation, or terrorism recruitment. But how easy is it to get frontier models to persuade people into harmful beliefs or illegal actions? Really easy: just ask them.

AI Accelerates Exploits, Forces New Breach Playbooks

The game-changing capabilities of AI models like Anthropic's Claude Mythos have drastically shrunk the exploit window, allowing them to uncover in minutes vulnerabilities that would take human experts hours or even weeks to detect. This seismic shift is forcing organizations to rethink their approach to…

#AiAcceleratedExploits #LargeLanguageModels #VulnerabilityManagement #IncidentResponse #EmergingThreats

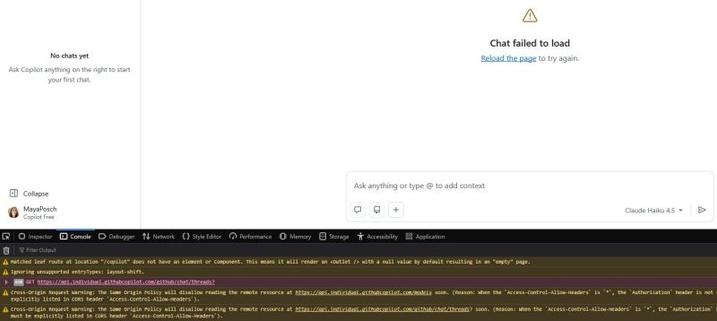

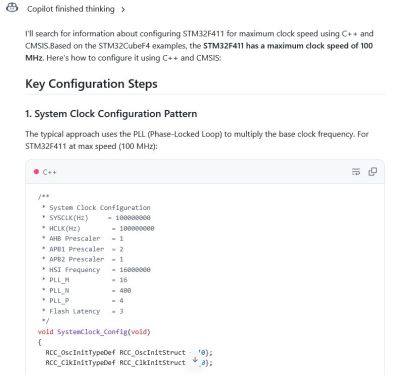

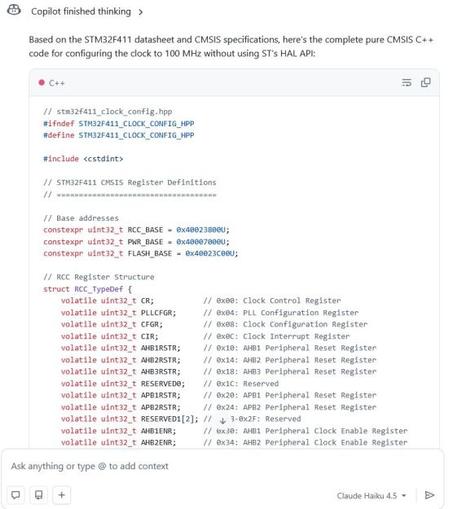

Trying Pair Programming With an LLM Chatbot

https://fed.brid.gy/r/https://hackaday.com/2026/04/27/trying-pair-programming-with-an-llm-chatbot/

#ArtificialIntelligence #CurrentEvents #Featured #SoftwareDevelopment #AIassistant #Chatbot #Largelanguagemodels

Given how #LLMs work, it would make sense to treat LLMs the same way as other stochastic methods of divination and of consulting demons or oracles, like tarot cards, throwing bones, tea leaves, etc.

It's been a long while since the Bible had its last expansion pack, but I'm sure the next expansion pack will at least contain a prohibition on making and consulting LLMs.

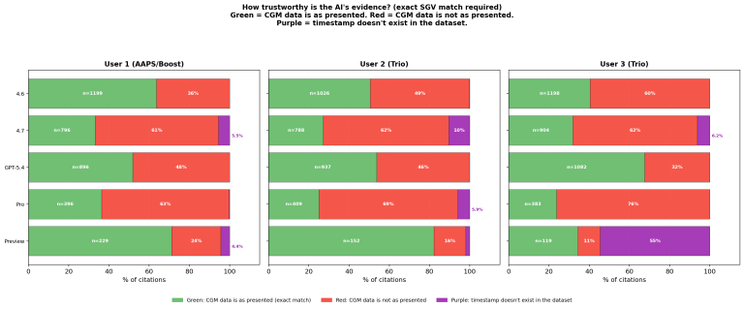

Five AI models, three users, one finding: the settings came from the textbook, not the data

We took Nightscout data from three people and gave it to 5 Large Language Models with a detailed prompt to see how they would respond.

The results weren't what I was expecting.

https://www.diabettech.com/five-ai-models-three-users-one-finding-the-settings-came-from-the-textbook-not-the-data/

#AI #Diabetes #PumpSettings #AI #AIDSettings #ArtificialIntelligence #LargeLanguageModels