https://winbuzzer.com/2026/04/17/apple-google-steered-users-to-nudify-apps-report-xcxwbn/

Report: Apple and Google Steered Users to Nudify Apps

#AI #AppStores #Apple #Google #NudifyApps #AppleAppStore #GooglePlay #BigTech #AIImageGeneration #AIgeneratedContent #Deepfakes #AIEthics #OnlineSafety

RE: https://flipboard.social/@TechDesk/116002835028405163

Would you believe the man behind this electronic CSAM machine was also so fucking keen to go to an Epstein rape party?

Yes, of course you would, fucking 'Pedo Guy' that he is! 😡

#ElonMusk #GrokAI #Musk #Twitter #CSAM #NonConPorn #NudifyApps #Nonce #EpsteinFiles #Epstein #JeffreyEpstein

Cook and Pichai are getting thumped hard over their failure to boot Grok off the app stores.

Apple and Google both explicitly ban apps containing CSAM, which is illegal to host and distribute in many countries, and yet Grok is still available on app stores. Grok is also violating X’s own policies, which prohibit sharing illegal content. The EU will likely begin a formal investigation of violations of the EU’s Digital Services Act.

Cook and Pichai have been called cowards in public media posts.

https://www.wired.com/story/x-grok-app-store-nudify-csam-apple-google-content-moderation/

#CSAM #Grok #Apple #Google #AppStore #PlayStore #Cook #Pichai #nudifyapps #Illegal #Internet #MobileApps #xAI

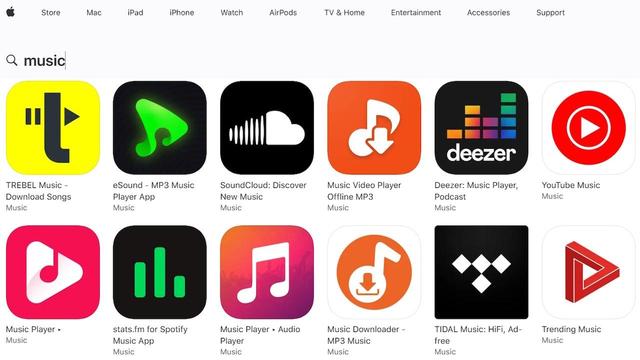

Non-consensual sexualization tools (#NSTs) are apps and websites that generate sexualized images of real people, whether they have given consent or not. Often called #NudifyApps, they do not only produce full nudity; they can also “undress” people non-consensually by rendering them in underwear or swimsuits.

Support us in holding platforms accountable! If you see apps, websites, or accounts that create or spread sexualized deepfakes, report them to us: https://algorithmwatch.org/en/stop-nudifying-deepfakes/
