Are UK universities ready to cope with generative AI in the 25/26 academic year?

In a month we’ll enter the second full academic year in which large language models (LLMs) have been a routine feature of staff and student practice within universities. While their uptake was originally driven by a sense of novelty, there’s increasing evidence that LLMs are now an ingrained feature of life for a growing user base. OpenAI claim ChatGPT has 700 million weekly active users. There were 1.7 billion downloads of GenAI apps in the first half of 2025, and there’s a clear trend of users spending more time in these apps, including at the weekends. It’s therefore unsurprising that HEPI found 92% of undergraduate students (n=1,041) using generative AI in some form, a significant increase on the 2024 survey:

This includes 88% using it in assessments in some form:

As someone who ran a large PGT programme at a Russell Group university over the past few years, I suspect the numbers are higher still for international PGT students, particularly if we include translation software in the category of ‘generative AI’. Interestingly, they found a “digital divide based on socio-economic grade” in which “Some functions are used much more by students from higher socio-economic groups (A, B and C1), including summarising articles, structuring thoughts and using AI edited text in assessments”.

This means that universities need to treat generative AI as something that has happened, not something that is happening or will happen. It’s not a change to prepare for or a tide we can hold back, but rather a feature of our organisations that we need to understand and steer in constructive rather than destructive directions. My perception is that a surprisingly large number of academics are still locked into the sense that we’re in the early stages of a change, rather than coping with a shift that has already happened. Yesterday’s deeply incremental upgrade to GPT-5 showed how growth in the capabilities of frontier models is plateauing. The innovation we’ll see in the next couple of years will be at the level of software design and the affordances enabled by engineering optimisation, rather than a fundamental leap in what models can do.

The first wave of innovation has happened and it’s time for universities to get to grips with it. This means recognising not just what models can do, which has largely happened at this stage, but also how widely these models are being used to do such things across the university system. These are mainstream tools used by an overwhelming majority of students and, I suspect, a (small) majority of academic staff. There’s an urgent need to grapple with the implications of this in a practical mode, rather than arguing speculatively about what it all means for teaching, learning and research.

When it comes to student use of LLMs, this means shifting from the question of whether students are using models to why and how they are using them. Only by dealing with specific uses in real-world contexts can we have meaningful debates about what counts as acceptable practice. The HEPI research indicates significant uncertainty amongst students about what is acceptable, as evidenced by the fact that no activity is endorsed as acceptable by more than two-thirds of them:

Consider Anthropic’s research into how university students are using Claude, which shows significant variation between higher-order and lower-order skills. My concern at the moment is that the diffuse nature of AI policy in universities (i.e. general principles which lack the scaffolding to support practical reasoning on the ground) means we often talk about ‘AI use’ as if these activities were interchangeable:

https://markcarrigan.net/wp-content/uploads/2025/05/image-9.png

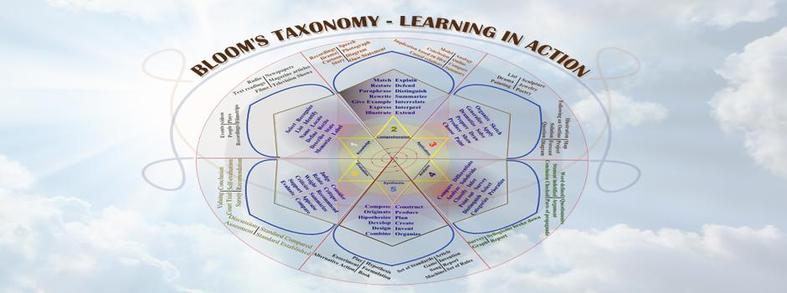

Clearly they are not. I struggle to think of any circumstances in which using LLMs to ‘explain concepts’ is problematic (assuming a baseline level of capacity to use the model), whereas pretty much every circumstance I can imagine for ‘use in assessment without editing’ seems problematic to me. Many academics seem to imagine that most, if not all, use of LLMs falls into the latter category, which means their conversations with students will fail to recognise the varied ways in which students are using models. We already have a language ready to hand for talking about these issues:

- Bloom’s Taxonomy: creating, evaluating, analysing, applying, understanding, remembering.

- Well-documented examples of student LLM use: explaining concepts, summarising a relevant article, suggesting research ideas, structuring thoughts, use in assessment after editing, use in assessment without editing.

We urgently need to talk with students in specific terms about educational LLM practices, which can be understood through the established categories of Bloom’s Taxonomy. These conversations need to recognise the diversity of students’ motivations for using LLMs, illustrated here by the HEPI research again:

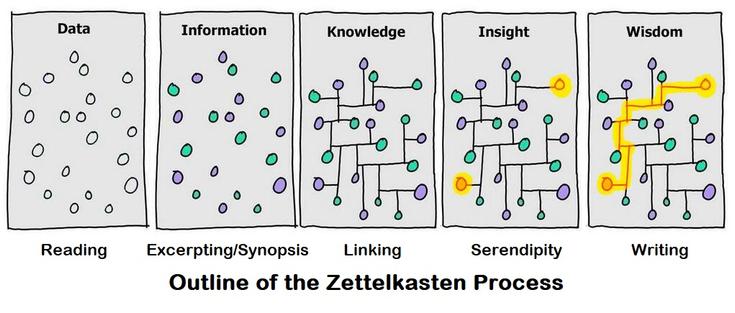

This is what I mean by a focus on what students are doing with LLMs (educational LLM practices) and why they are doing it (student motivations for LLM practices). The conceptual architecture of Bloom’s Taxonomy then helps us understand the implications of these practices for teaching and learning, in ways informed by basic AI literacy. For example, we should advise students to avoid using LLMs for remembering, not because it’s inherently wrong to do so but because models aren’t databases for factual recall. In this sense, I’m suggesting that an adequate response needs several elements:

- Direct conversations with students in terms of educational LLM practices and student motivations for LLM practices [there’s a huge problem here of creating environments in which students feel comfortable sharing all aspects of their practice]

- Equipping teaching staff to better understand the range of educational LLM practices and student motivations for LLM practices

- Reporting on the perceptions of teaching teams about educational LLM practices and student motivations for LLM practices

- Conversation at the level of teaching teams, departments and schools about the implications of these practices for teaching and learning

This picture is evolving too rapidly for the established repertoires of educational research. But equally, we don’t need a perfect record so much as a working understanding. If this is enacted dialogically, creating spaces for students and staff to have these conversations, it contributes in itself to a more reflective culture around LLM use in universities, one which moves beyond some of these debates. Ideally this takes place at the level of teaching teams or departments for two reasons:

Until we can create these spaces for reflective dialogue, I don’t think universities are prepared for the 25/26 academic year. At present we have a chaotic landscape of individual practice without clear norms uniting staff and students, while policymaking remains reactive in spite of attempts to articulate principles and practices. I wrote two years ago that universities were organised in a way that left a gap between policy and practice, which would prove fatal with LLMs:

In siloed and centralised universities there is a recurrent problem of a distance from practice, where policies are formulated and procedures developed with too little awareness of on the ground realities. When the use cases of generative AI and the problems it generates are being discovered on a daily basis, we urgently need mechanisms to identify and filter these issues from across the university in order to respond in a way which escapes the established time horizons of the teaching and learning bureaucracy.

On the ground, this means that individuals and teams confront pedagogical situations in which there’s a lack of clarity about what university strategy and rules mean in practice. Here, now, with these students in this room: what should I be doing? My suggestion is that addressing this problem requires mechanisms to:

Until we build this missing link, I think “institutional responses will actually amplify the problems by communicating expectations that are incongruous with a rapidly evolving situation”, as I put it two years ago. The challenge is how to build this link in a way that is consistent with intensified workloads and an increasingly generalised crisis within the sector. This means it has to be lightweight enough to work within existing structures, specific enough to address real practices rather than abstract principles, and iterative enough to evolve as rapidly as the situation on the ground. It also has to enable knowledge and perspectives to be shared across disciplines while retaining their disciplinary specificity. Finally, it probably needs to be largely asynchronous, to the extent that this doesn’t hurt the quality of the dialogue.
