📢 Call for Contributions: 1st Workshop on Sustainable Practices for Reproducibility in HPC (REPRO-HPC)

📅 When? June 26, 2026, 9am - 1pm (co-located with ISC HPC 2026)

📍 Where? Hamburg, Germany

🌐 Website: http://repro-hpc.github.io

Reproducibility in HPC has to contend with complex software, cutting-edge hardware, and high costs, which makes it challenging to produce robust scientific results that others can reproduce. This workshop brings the community together to share tools, best practices, and feedback to tackle these issues.

📅 Important Dates:

Abstract Submission: April 17, 2026 (AoE)

Author Notification: May 8, 2026 (AoE)

Workshop Date: June 26, 2026, 9am - 1pm

🔍 Topics We’re Interested In:

General: Lessons learned, energy-efficient reproducibility, long-term reproducibility, teaching HPC reproducibility.

Software/Workflow: Tools for portable experiments, CI/CD, provenance, and FAIR principles.

Platforms: Services HPC centers/testbeds can offer to support reproducibility.

Artifact Evaluation: New processes, incentives, community standards, and evaluating proprietary software/hardware.

📝 Submissions:

2-page abstract or 4-page short paper (PDF, IEEE double-column template).

Submission: https://easychair.org/conferences/?conf=reprohpc26

More details: https://repro-hpc.github.io/#call-for-contributions

🎤 Keynote Speakers:

Kate Keahey (University of Chicago, USA – Chameleon Cloud)

Helena Vela Beltran (Do IT Now, Spain – EESSI)

👥 Organizers:

Quentin Guilloteau (INRIA, France)

Valérie Hayot-Sasson (ÉTS Montréal, Canada)

Dennis Hoppe (HLRS, Germany)

Josef Weidendorfer (LRZ, Germany)

💡 Interested? Submit your work and join the conversation! Let’s make HPC reproducibility sustainable together!

#reproducibility #HPC #FAIR #artifact #artifactevaluation #workflow

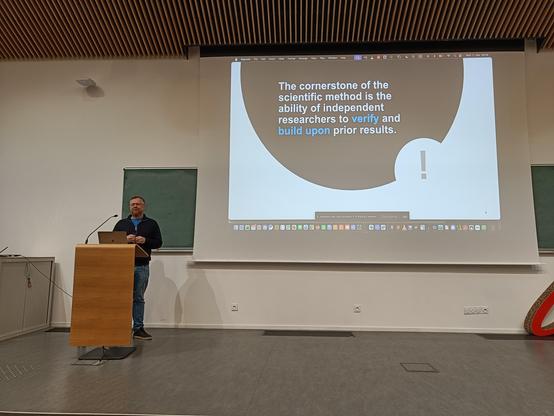

I just finished another artifact evaluation for a major conference in my field, and here is my recurring rant 😬

I am still surprised by some practices (or lack thereof) for packaging experiments.

It always seems like authors run some experiments for the paper, and only when the paper gets accepted do they create the artifact (e.g., writing scripts, managing the software dependencies).

It has a “last minute” feeling.

Like, “let's get the paper accepted, and we'll care about reproducibility later”.

But that's always difficult to verify (authors usually create a brand-new Git repo for the artifact evaluation, so there is no history...)

To be fair, the conferences give authors some guidelines on how to package their experiments, though I would not say I agree with all of them.

Their main argument seems to be the tradeoff between the usability of the artifact (by the authors and the reviewers) and its reproducibility.

In 2024, we presented our feelings [1] [2] at a workshop about reproducibility in HPC [3].

And I think they have not changed, and I still have the same questions about the whole artifact evaluation process...

Here are some of them:

- What is/should be the role of artifact reviewers? To evaluate/judge/review, or to debug?

- Is the fast pace of conferences really suited to a thorough evaluation?

- Who really benefits from artifact evaluation? The authors, who can promote their paper with badges? Future researchers aiming to build upon the authors' artifact?

- When will/should the "encourage the authors to submit their artifact" phase end? And does the community need to move towards stricter evaluation?

- Is the badge system granular enough to reflect the reproducibility of an artifact?

I understand that evaluating the reproducibility of complex experiments is challenging, but are we really doing the best we can?

I am really starting to think that “reproducibility” might just be a big word used to avoid saying “scientific/experimental methodology”...

TL;DR: I'm confused...

[1] https://hal.science/hal-04764265/file/proposal.pdf

[2] https://hal.science/hal-04764265/file/slides_guilloteau_ae_authors_reviewers_lessons_questions_frustrations.pdf

[3] https://reproduciblehpc.org/

https://benhermann.eu/talks/fse20-expectations-se23.pdf