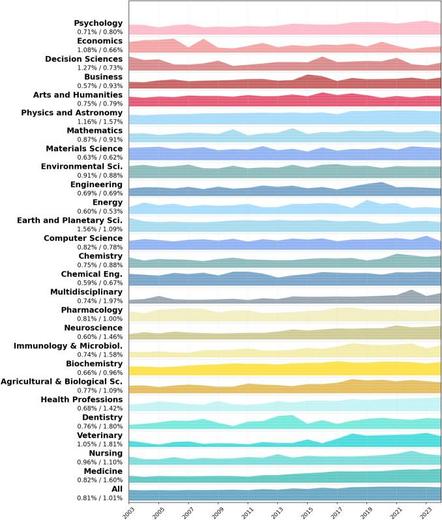

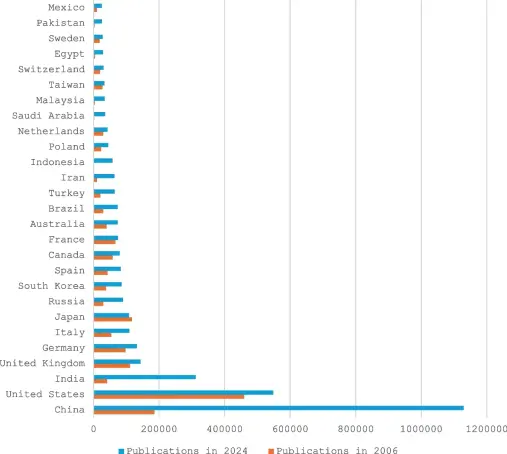

Today I gave a talk on @OpenAlex, the largest open database of scholarly metadata. #OpenAlex offers a broader and more inclusive view of science, making previously “invisible” research more visible.

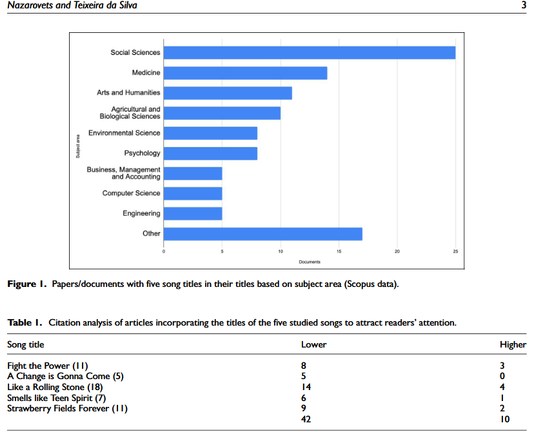

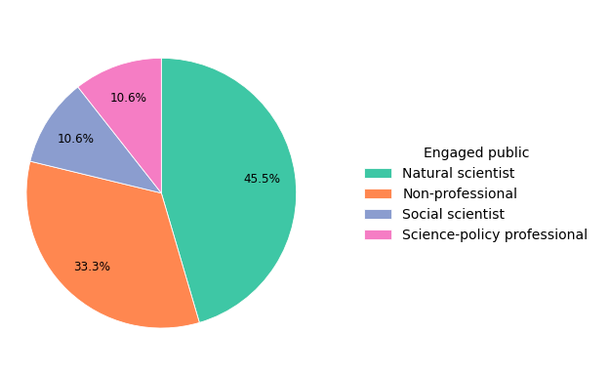

At the same time, it raises important questions about metadata quality, data completeness, and how we interpret metrics.

👉 https://doi.org/10.13140/RG.2.2.31061.79847

A key takeaway: the results of a research evaluation can vary significantly depending on the data source and the analytical choices behind it.