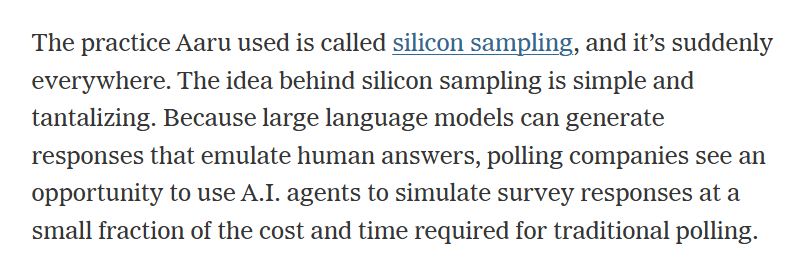

@hannah obviously the model needs to be trained on a sample of typical responses from the specific population(s) to be surveyed.

... so, thats it, AI something something magically gets more precision out of the same dataset, by adding simulated data? They're saying the simulated responses are more accurate than the real responses???

@hannah Wait, I've got a better idea that will still cut their polling costs in half: we survey people but with half the standard sample size, then we run it all through a photo copier and tabulate the results. We'll get the normal sample size and accuracy!

Also, copy machines use a lot less power than AI, its win-win!

asking google whether the president is good or bad 20 times and reporting the answer as the approval numbers

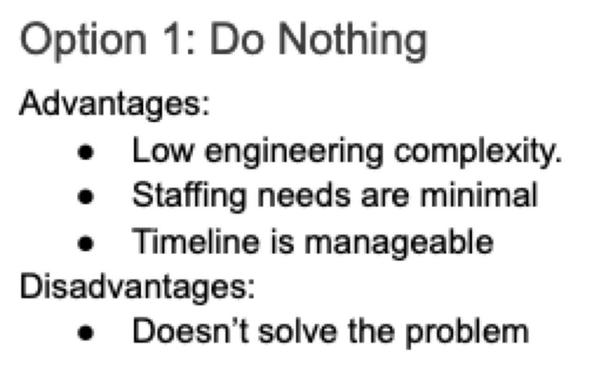

This could revolutionize statistical analyses.

You can greatly increase the sample size at almost no cost!

Reducing size of confidence intervals!

Clearly (48.136, 48.138)% is from a far superior study to one that reports (45, 51)%.

@hannah 🤦♂️ They can’t possibly be that dumb can they?

(Yes I know)

Me: Screams into the void

Void: Due to increased volumes the void can't answer your scream at the moment. Your scream is very important to us, please hold, there are only 31,415,926 people ahead of you in the scream queue.

@hannah I feel like this practice is a fundamental misunderstanding of its own problem space.

This doesn't come within three or four degrees of freedom of measuring what the advocates seem to think it measures.

10/10 LLMs think that this is a tremendous idea.

I have a hard time getting over this.

I tried irony. Sarcasm. I duckduckgoed the word.

Maybe the article misunderstood something? No, doesn't look that way.

Sure, there is bias in any sort of polling or study. The way you phrase things can influence how they are answered.

But this is just a dice roll with more words.

No wait, dice rolls can't be manipulated that easily to skew opinion.

@hannah I have this same instinctive response every time I've seen reference to "synthetic data." People have been (successfully!) selling this crap for almost a decade!

There is no way to synthesize data that meaningfully replicates a real world investigation. Simulate volume? Sure. Test specific scenarios or fuzz inputs? Yep, I'll buy that. But there is no expositive value in synthetic data - by definition. It's synthetic! It reflects only the algorithms used to synthesize it! 🤦

"we don't need to get actual data to get an accurate average. we'll just average up all the already averaged data. it will be just as good."

narrator: "someone flunked their stats 101 course..."

@hannah qualtrics floated this (and maybe went through with it idk) right after chatgpt first went live

What hilarious is that this agentic things is plausibly *worse* since you're having simulations fill out the survey instead instead of using an llm to generate a bazillion responses. The responses will still be just as bad, but at a huge markup of energy costs

nothing is real

@hannah Perhaps I'm just missing something obvious here, but...isn't the whole point of conducting surveys to gather data from people? If the only entities being surveyed are A.I. agents that mimic human speech and which could be programmed to say anything, how is it meaningful?

It seems as meaningful to me as "Our survey data says 95% of people approve our product. We surveyed 1000 people whom we paid $100 each to say positive things about us."

Efficient! What could possibly go wrong with that?!

Reminds me of Asimov's short story Franchise. I wonder where these companies get their innovative ideas from?

@hannah Lol, I often quote the brilliant idea that using these generative machines is like using a forklift to lift weights at the gym. But here they are actually doing it :D

”We discovered a way to save 100% of the sciences and research costs: we will simply not do any of it!”

@hannah Same on all counts...

Mechanical Turk taken to its irrational extreme.

The data is clear: eight out of ten doctors* want you to eat small rocks and put glue on your pizza

*: We asked ten LLMs to pretend to be doctors

🍀

🍀