@baldur @wikiyu Color me really surprised; paragon and derivatives (at least for German) were definitely worse; I remember the (2008-ish?) surprise when ANN-based translations started to achieve higher rankings than purely Bayes-based/statistical methods.

(Oh, and I do personally remember things like the Babylon spyware thing, which wasn't really good. IBM Watson didn't work as well as Google Translate when that came out, for German<->English at least. I had played with Apertium in its earlier …

@funkylab @wikiyu So, around the time the LLM bubble first began, there was a noticeable sharp decline in the performance of publicly available translation services (i.e. Google Translate and the like) when it came to translating most Nordic languages and it's generally gotten worse, not better, over time. It's become a running joke.

An important note here is that there is much much less text available for these languages in machine-readable form than even German or French.

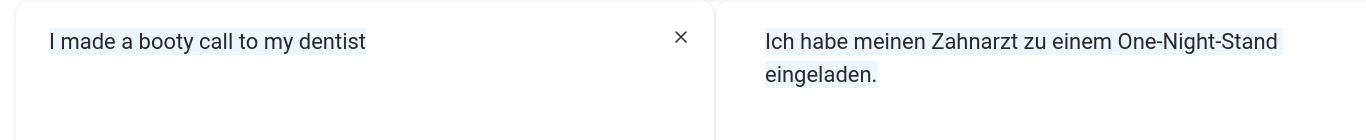

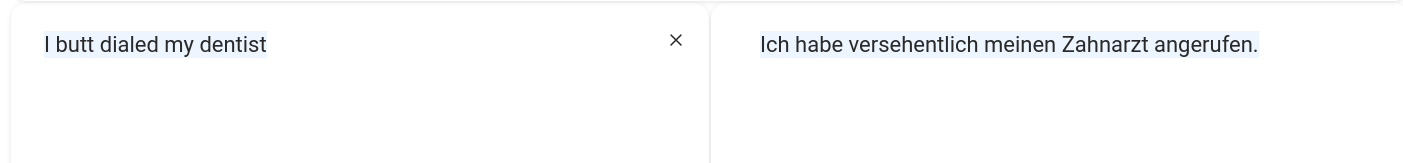

@UkeleleEric @baldur @wikiyu don't know whether that's a good example, because the difference is clear even devoid of context, PLUS existing LLMs have no problem with that difference at all. (The two phrases are only similar to the human reader. You're projecting things that are easy for humans to make mistakes on onto machine translation! See attached DeepL.)

I'm also not sure rule-based & Bayesian translation makes a lot of difference when it comes to sarcasm. That's sentiment detection!

@abucci @wikiyu @baldur I feel like we're arguing based on perceptions here – I certainly am, and can only vaguely remember the press echo when neural (not LLM) translators came out. So, I might need to shut up here and say: I don't have enough data to base my claims on. Do you?

Do we have any qualitative analysis in literature that I could read? So far we've got four people claiming things, that's not a great discussion :)

Furthermore, it is a significant mischaracterization of my post to say I was claiming "older translators are better". This is not what I said; nor is it an implication of what I said. I stated that LLM-based translators have shortcomings. None of the shortcomings I pointed out or gestured towards are particularly controversial, and have been written about many times. Making such statements is a basic part of any engineering practice: in order to select the right tools for a particular job, one has to take an honest look at the tradeoffs involved.

I assume you mean well also, and so I will share with you that to my ear both your responses sounded like they were written in bad faith. If that was not your intention, be aware that your actual intentions are not coming through when you post like this, at least not to me.


@qgustavor @wikiyu That's what I'd argue, too, but: this very basic theory and the reality, especially of the implementations that are actually available, might diverge there.

Thing is that @baldur is actually someone from the field, so his word does weigh heavily with me, even if it doesn't reflect my own experience with translation quality.

(EDIT: way->weigh. Human in-mind translations are not perfect, either :D)

So, AFAICT, LLMs are in general sensitive to the size of the training data set. Only a few languages have a collection of machine-readable texts big enough for these models.

IIRC they used to compensate for this in the pre-LLM days specifically for each language.

Once everybody began to migrate to approaches that require large data sets, performance for all of those tasks (translation, summary, correction) began to suffer, especially in smaller languages.

Though, it should be noted that in a lot of third-party, neutral testing, specialised models outperform LLMs for many language tasks such as summarisation, even in English. Even where they underperform, they are at least in the same ballpark, while costing orders of magnitude less.

By the way, as I said, I hadn't been using dedicated tools since ~2010, and then only when language model (not necessarily what is usually qualified as "L"LM) translation became "commoditized", esp. when it became available as an add-on to Firefox.

If that level of translation was possible before at similar or lower effort, I'm kind of disappointed with the world for not shipping it with browsers earlier; I maintain the opinion that translation …

A common theme for me over the last few months is people using this stuff for document ingestion, indexing, and basic NLP/manipulation, then discovering that the output is bullshit. There are good, validated approaches for doing this stuff that are much cheaper and more deterministic in their behaviour. It's honestly very weird.

@baldur A friend told me he uses it to do tasks that are easy to validate, like renaming variables. I was like... did you previously not have ways to rename variables? Is this not something that you've done a million times before?

He mumbled some excuses about how he couldn't get some LSP server to work or something. This man has close to 20 years of professional experience, and chatbots have absolutely ruined his brain.

@AndrewRadev I have a bit more sympathy for this type of "instructional" use case. Depending on your language and editor, it can be a right pain to get tooling to work well. I still struggle with Neovim config and getting things working!

I wanted to move an Elixir module yesterday and thought I'd try it with Gemini to save me some drudge work. It worked, found references I probably would have missed on first go. Though not sure it saved me any time in the end with all the "thinking" required.

As much as I dislike these things, it would be non-factual to deny they have some utility.

@AndrewRadev I was comparing its utility to the work involved in learning or choosing an editor/IDE and having it perform the same task... self-evidently not comparing it against doing "nothing" or a compiler..! And how from the perspective of a user/developer, there is benefit there. And sadly, it is reliable for many such tasks.

I do not need to be lectured about the numerous clear externalised costs.

@Odaeus You mention moving a module in Elixir. I don't have professional experience with Elixir. In my last job, I wrote React with TypeScript.

I always had `tsc --watch` running in a terminal window. When I needed to move some code, refactor components, etc, I would make the change, then follow the tsc errors one by one. This was a fairly straightforward and 100% reliable process. Tsc had its issues, but it was deterministic.

This is why I compare an LLM "move this module" task to a compiler. I guess maybe you can ask the LLM to move the module and also run the compiler? But in either case, you won't miss anything. Maybe it'll fail and you'll have to spend the same time fixing issues as you would have doing things the direct way. Maybe it will succeed, but it'll make unrelated changes that compile yet cause issues. I don't see how you could ever know what you're going to get and why this would be a desirable workflow for a professional software developer. I would always prefer a consistent, reliable workflow, rather than a roll of the dice.

The METR study had people estimate they were faster by 20% using AI, but they were 19% slower. Their estimations were completely off, because it's impossible to reliably know whether you would have, in fact, missed that one reference. I agree that there is *perceived* utility compared to the alternative. I agree there *might* be real utility *sometimes*. I don't believe that most developers have actually designed experiments where they've measured whether it's beneficial for them on average, or not.

@Dangerous_beans @baldur yo grammar checkers aint got nothing on me:-)

video: syntax - pride (music) - word play

https://www.youtube.com/watch?v=HkpXGMPwf2c


@baldur Oh, definitely not. The old checkers would make mistakes and miss stuff, but they didn't just make shit up. At work we've started using a non-AI Word plugin called PerfectIt that can actually check against Chicago Manual of Style rules, and it's useful, but it has its limits. I don't expect to be out of a job any time too soon.

eta: We've also started playing with an AI-based system to provide alt-text for remediating backlist ebooks. It's very hit or miss. It kind of highlights that the main limitation of any automated system is failure to understand context.

@baldur From my own experience working with the classic spelling and grammar checkers (I even open-sourced my work, subtitle-linter on my GitHub), you are overselling them.

Once I even got into an internet fight because I said spelling checkers should reject rare words, as, in my experience, most instances of rare words in the texts I reviewed were typos — those were Portuguese texts, but if I were to give an English example it would be like finding lots of "fain" as typos for "pain" — in Portuguese there were LOTS of "maça" (mace) for "maçã" (apple).

But, to be fair, even LLMs have issues with this due to how they are designed. On the other hand, an LLM is more likely to accept "mace" when it's being held by a king, and to flag it as wrong when someone is eating it. And all of this is just spelling!
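
For anyone curious, the "reject rare words" heuristic from the post above could look roughly like the sketch below. This is only an illustration, not how subtitle-linter works: the function name `flag_rare_words`, the `min_count` threshold and the word counts are all made up for the example.

```python
import re
import unicodedata
from collections import Counter

# Hypothetical word counts from some reference corpus (numbers are invented).
REFERENCE_COUNTS = Counter({
    "o": 500, "a": 800, "menino": 40, "rei": 20,
    "comeu": 25, "segurava": 5, "maçã": 30, "maça": 1,
})

def flag_rare_words(text, reference_counts, min_count=2):
    """Return the words in `text` seen fewer than `min_count` times in the corpus.

    Purely frequency-based: no grammar and no context. Words that are
    missing from the corpus count as 0 and are therefore flagged as well.
    """
    # Normalise so that "ã" is a single code point and matches the corpus keys.
    normalised = unicodedata.normalize("NFC", text).lower()
    # [^\W\d_]+ matches runs of (Unicode) letters only.
    words = re.findall(r"[^\W\d_]+", normalised)
    return [word for word in words if reference_counts[word] < min_count]

# "The boy ate the mace": the likely typo is flagged.
typo_hits = flag_rare_words("O menino comeu a maça", REFERENCE_COUNTS)

# "The king held the mace": the same word is flagged although it is correct here.
false_positive_hits = flag_rare_words("O rei segurava a maça", REFERENCE_COUNTS)
```

Both sentences get "maça" flagged, which is the trade-off: the heuristic catches the typo in the first one and produces a false positive in the second, because it has no notion of who is holding or eating what.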