DeepSeek tests “sparse attention” to slash AI processing costs

Chinese lab’s v3.2 release explores a technique that could make running AI far less costly.

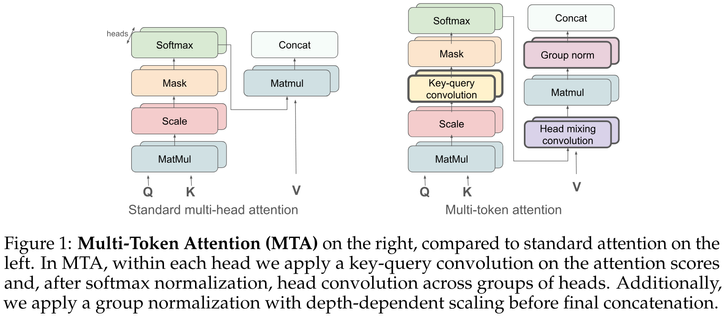

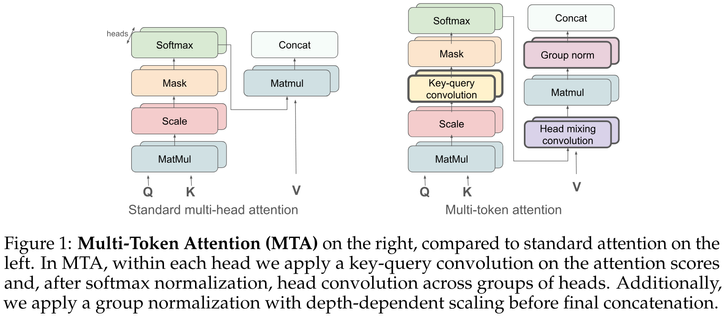
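The headline technique can be illustrated with a generic top-k sparse attention step, in which a query attends only to the k keys it scores highest instead of to every key. This is a minimal sketch under our own assumptions: a single query, pure Python, and the hypothetical helper names `dense_attention` and `topk_sparse_attention`. It is not DeepSeek's implementation. For clarity it scores every key with the full dot product. A real system only saves compute if the selection step itself is cheaper than full attention, and this sketch does not model that.

```python
import math


def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def weighted_sum(weights, rows):
    # Combine value vectors using the attention weights.
    dim = len(rows[0])
    return [sum(w * r[d] for w, r in zip(weights, rows)) for d in range(dim)]


def dense_attention(q, keys, values):
    # Standard scaled dot-product attention for one query: every key is used.
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    return weighted_sum(softmax(scores), values)


def topk_sparse_attention(q, keys, values, k):
    # Hypothetical top-k variant: keep only the k highest-scoring keys,
    # renormalize the softmax over them, and ignore the rest.
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, key) * scale for key in keys]
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in top])
    return weighted_sum(weights, [values[i] for i in top])


q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
print(dense_attention(q, keys, values))
print(topk_sparse_attention(q, keys, values, k=2))
```

With `k` equal to the number of keys, the sparse version reproduces dense attention up to floating-point ordering. With `k=1` it simply returns the value vector of the best-matching key.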

Multi-Token Attention

Soft attention is a critical mechanism powering LLMs to locate relevant parts within a given context. However, individual attention weights are determined by the similarity of only a single query and key token vector. This "single token attention" bottlenecks the amount of information used in distinguishing a relevant part from the rest of the context. To address this issue, we propose a new attention method, Multi-Token Attention (MTA), which allows LLMs to condition their attention weights on multiple query and key vectors simultaneously. This is achieved by applying convolution operations over queries, keys and heads, allowing nearby queries and keys to affect each other's attention weights for more precise attention. As a result, our method can locate relevant context using richer, more nuanced information that can exceed a single vector's capacity. Through extensive evaluations, we demonstrate that MTA achieves enhanced performance on a range of popular benchmarks. Notably, it outperforms Transformer baseline models on standard language modeling tasks, and on tasks that require searching for information within long contexts, where our method's ability to leverage richer information proves particularly beneficial.

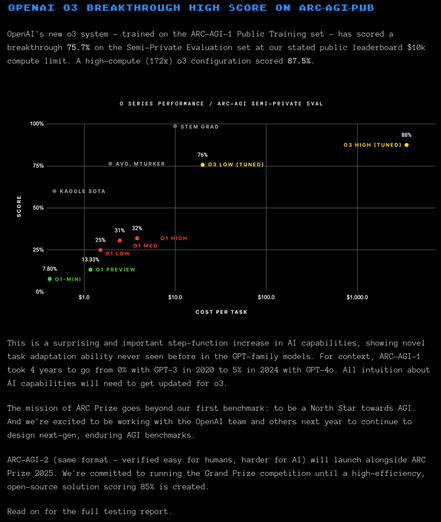
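The key-query convolution described in the abstract can be sketched for a single head. This sketch assumes the variant that convolves the pre-softmax attention logits with a small kernel spanning the current and earlier query rows and neighboring key columns. Causal masking is applied twice (zeros before the convolution, negative infinity after) so that no query can draw on future keys. The kernel layout, the helper names, and the omission of the head-mixing convolution are our simplifications, not the authors' code.

```python
import math

NEG_INF = float("-inf")


def attention_logits(Q, K):
    # Scaled dot-product scores: one row per query, one column per key.
    d = len(Q[0])
    return [[sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in K] for q in Q]


def causal_mask(scores, fill):
    # Replace entries where the key comes after the query with `fill`.
    n = len(scores)
    return [[scores[i][j] if j <= i else fill for j in range(n)] for i in range(n)]


def key_query_conv(scores, kernel):
    # kernel[a][b]: a = how many query rows back (0 = current row),
    # b = key offset, centered so that b == len(kernel[0]) // 2 means offset 0.
    n = len(scores)
    half = len(kernel[0]) // 2
    out = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = 0.0
            for a, kernel_row in enumerate(kernel):
                for b, weight in enumerate(kernel_row):
                    ii, jj = i - a, j + b - half
                    if 0 <= ii < n and 0 <= jj < n:
                        acc += weight * scores[ii][jj]
            out[i][j] = acc
    return out


def softmax(row):
    m = max(row)  # the diagonal is always finite, so m is finite
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def mta_attention(Q, K, V, kernel):
    # Mask with zeros, convolve the logits, re-mask with -inf, then softmax.
    conv = key_query_conv(causal_mask(attention_logits(Q, K), 0.0), kernel)
    weights = [softmax(row) for row in causal_mask(conv, NEG_INF)]
    dim = len(V[0])
    return [[sum(w * v[d] for w, v in zip(row, V)) for d in range(dim)] for row in weights]


Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
V = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
kernel = [[0.5, 1.0, 0.5], [0.25, 0.5, 0.25]]  # hypothetical 2x3 kernel
print(mta_attention(Q, K, V, kernel))
```

With the 1x1 kernel `[[1.0]]`, the convolution is the identity and the function reduces to ordinary causal softmax attention. Larger kernels let each attention weight depend on the scores of neighboring queries and keys, which is the point the abstract makes.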

[thread] OpenAI o1, o3 | OpenAI GPT-4o

https://en.wikipedia.org/wiki/OpenAI_o1

* generative pre-trained transformer

* formerly known within OpenAI as "Q*"

* o1 spends time "thinking" before it answers

* this makes it better than OpenAI GPT-4o at complex reasoning, science & programming tasks

* full version was released 2024-Dec-05

#LLM #OpenAI #OpenAI_o1 #OpenAI_o3 #GPT4o #ML #TransformerArchitecture #reasoning #COT #ChainOfThought #AGI #AI

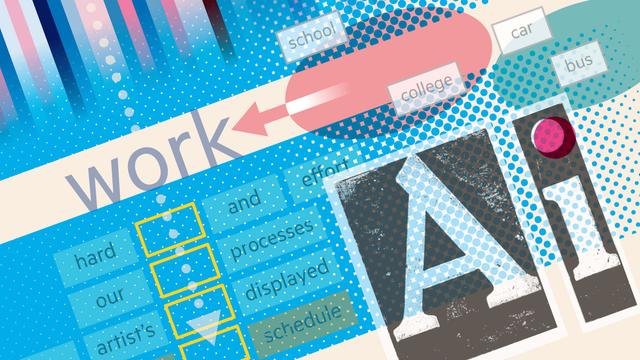

Transformer Explainer: Interactive Learning of Text-Generative Models

https://arxiv.org/abs/2408.04619v1 (neat visualization)

#AI #transformerArchitecture

Transformer Explainer: Interactive Learning of Text-Generative Models

Transformers have revolutionized machine learning, yet their inner workings remain opaque to many. We present Transformer Explainer, an interactive visualization tool designed for non-experts to learn about Transformers through the GPT-2 model. Our tool helps users understand complex Transformer concepts by integrating a model overview and enabling smooth transitions across abstraction levels of mathematical operations and model structures. It runs a live GPT-2 instance locally in the user's browser, empowering users to experiment with their own input and observe in real-time how the internal components and parameters of the Transformer work together to predict the next tokens. Our tool requires no installation or special hardware, broadening the public's education access to modern generative AI techniques. Our open-sourced tool is available at https://poloclub.github.io/transformer-explainer/. A video demo is available at https://youtu.be/ECR4oAwocjs.
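The final step of the next-token prediction the tool visualizes, turning GPT-2's output scores (logits) into a token choice, can be sketched as a softmax followed by either greedy selection or sampling. The temperature parameter here is a common sampling control and an assumption of this sketch. The vocabulary and logit values are made up for illustration and are not taken from GPT-2.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    # Dividing by a temperature below 1 sharpens the distribution;
    # a temperature above 1 flattens it.
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_next_token(vocab, logits):
    # Pick the single highest-scoring token.
    best = max(range(len(logits)), key=lambda i: logits[i])
    return vocab[best]


def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    # Draw one token at random, weighted by its probability.
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


vocab = ["data", "models", "cats"]  # hypothetical candidate tokens
logits = [2.0, 1.0, 0.1]            # hypothetical model scores

for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
print(greedy_next_token(vocab, logits))
print(sample_next_token(vocab, logits, rng=random.Random(0)))
```

Greedy selection always returns "data" for these scores. Sampling can return any of the three tokens, and lowering the temperature makes "data" increasingly likely.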

Generative AI exists because of the transformer (free for non-subscribers) - Financial Times

https://ig.ft.com/generative-ai/ (useful visual explanation)

#AI #TransformerArchitecture

Generative AI exists because of the transformer

The technology has resulted in a host of cutting-edge AI applications — but its real power lies beyond text generation