There's a whole bunch of recent #ThingUmbrella updates I still have to write about, but one of them is the reworked, improved and more customizable optical flow implementation (aka the https://thi.ng/pixel-flow package). This test video shows it in action, with color-coded flow field vectors overlaid on the footage (once again a worst-case scenario for video compression, let's see how it comes out [or not...])
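The post doesn't spell out how the vectors are color-coded. A common convention in flow visualizations maps each vector's direction to hue and its length to brightness. The following minimal Python sketch assumes that scheme. The function `flow_to_rgb` and the `max_mag` clamp are hypothetical and not part of thi.ng/pixel-flow:

```python
import colorsys
import math


def flow_to_rgb(dx: float, dy: float, max_mag: float = 10.0) -> tuple[int, int, int]:
    """Map a flow vector to an 8-bit RGB color: direction -> hue, length -> brightness.

    Vectors longer than `max_mag` are clamped to full brightness.
    (In image coordinates y points down, so "down" maps to a positive angle.)
    """
    angle = math.atan2(dy, dx)                       # direction in [-pi, pi]
    hue = (angle / (2 * math.pi)) % 1.0              # wrap into [0, 1)
    value = min(math.hypot(dx, dy) / max_mag, 1.0)   # normalized, clamped length
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, value)   # full saturation
    return round(r * 255), round(g * 255), round(b * 255)
```

With this mapping, rightward motion shows as red, leftward as cyan, and near-zero motion fades to black, so still regions don't clutter the overlay.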

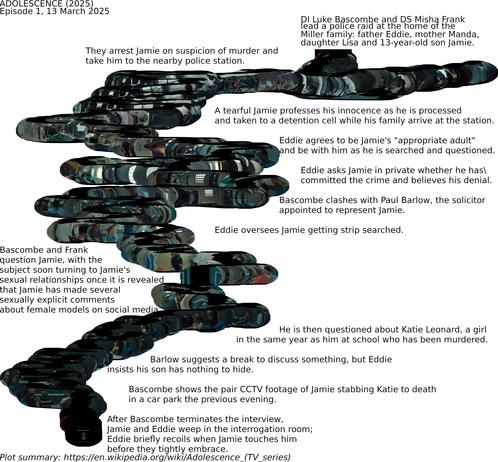

An optical flow composite of episode 1 of "#Adolescence" (2025, Netflix). The plot summary, from Wikipedia, follows the composite image.

Optical flow estimates the movement of the viewer (the camera), or equivalently of the subject in the opposite direction, and the composite plots each frame's imagery along the resulting flow line. The bubble-like trail evokes the embodied sense of movement that comes with engaging with the drama.

To avoid a massive OpenCV dependency for a current project I'm involved in, I ended up porting my own homemade, naive optical flow code from 2008 and just released it as a new package. It was originally written for a gestural UI system for Nokia retail stores (prior to the Microsoft takeover). The package readme contains another short video showing the flow field being used to rotate a 3D cube.

I've also created a small new example project for testing with either a webcam or video files:

https://demo.thi.ng/umbrella/optical-flow/

#ThingUmbrella #OpticalFlow #ImageAnalysis #ComputerVision #TypeScript #JavaScript

From my old Vimeo archive (2008): #OpticalFlow #3D #InteractionDesign prototype for Nokia retail stores. No OpenCV, just vanilla pixel buffer analysis (in Java):

"Documenting progress for a current project, using optical flow analysis to enable a gestural interface & navigate on-screen content both 2D and 3D.

The video analysis requires only a very small capture size (here 160x120) and is fairly tolerant of noise. Each frame is recursively analysed in blocks of 30x30 pixels (variable), which are displaced by a certain amount (also adaptable) and then checked for matches in the previous frame. The resulting flow field is displayed and summed to compute the average direction and amount of movement. All calculations and updates to the flow field use thresholds & low-pass filters to reduce jitter."
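The block-matching idea in the quote can be sketched roughly as follows. This is a simplified, single-pass (non-recursive) Python illustration, not the original Java code. It assumes grayscale frames stored as 2D lists and scores candidate matches by sum of absolute differences (SAD). The names `flow_field`, `radius`, `min_gain` and `alpha` are hypothetical stand-ins for the block size, displacement, threshold and low-pass settings the quote mentions:

```python
from typing import List, Tuple

Frame = List[List[int]]             # grayscale pixels, indexed frame[y][x]
Vector = Tuple[int, int, int, int]  # (block_x, block_y, dx, dy)


def block_sad(prev: Frame, curr: Frame, bx: int, by: int,
              dx: int, dy: int, size: int) -> int:
    """SAD between the block at (bx, by) in `curr` and the block in `prev`
    it would have come from if the content moved by (dx, dy)."""
    total = 0
    for y in range(by, by + size):
        for x in range(bx, bx + size):
            total += abs(curr[y][x] - prev[y - dy][x - dx])
    return total


def flow_field(prev: Frame, curr: Frame, size: int = 30,
               radius: int = 4, min_gain: int = 0) -> List[Vector]:
    """Exhaustive block matching: one motion vector per size x size block."""
    h, w = len(curr), len(curr[0])
    field = []
    # keep a `radius` margin so every displaced candidate stays inside `prev`
    for by in range(radius, h - size - radius + 1, size):
        for bx in range(radius, w - size - radius + 1, size):
            still = block_sad(prev, curr, bx, by, 0, 0, size)
            best, best_d = still, (0, 0)
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    sad = block_sad(prev, curr, bx, by, dx, dy, size)
                    if sad < best:
                        best, best_d = sad, (dx, dy)
            # threshold: ignore matches that don't clearly beat "no motion" (noise)
            if still - best <= min_gain:
                best_d = (0, 0)
            field.append((bx, by, best_d[0], best_d[1]))
    return field


def mean_flow(field: List[Vector]) -> Tuple[float, float]:
    """Average direction and amount of movement over all blocks."""
    if not field:
        return (0.0, 0.0)
    n = len(field)
    return (sum(v[2] for v in field) / n, sum(v[3] for v in field) / n)


def low_pass(prev: Tuple[float, float], new: Tuple[float, float],
             alpha: float = 0.2) -> Tuple[float, float]:
    """Exponential smoothing: move a fraction `alpha` toward the new value."""
    return (prev[0] + alpha * (new[0] - prev[0]),
            prev[1] + alpha * (new[1] - prev[1]))
```

Feeding each frame's `mean_flow` through `low_pass` gives a smoothed direction/amount signal that a UI could map to scrolling or rotation. Exhaustive search is the simplest choice here. A recursive or coarse-to-fine scheme, as the quote hints at, would cut the number of SAD evaluations.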

A #sensory re-interpretation of the Copacabana entrance scene from Goodfellas (1990) https://www.youtube.com/watch?v=_rX_vDVdmYA

Using #OpticalFlow estimation, I composited every frame relative to the camera movement since the previous frame, generating an escape map through the subterranean entrance and kitchens. The scene starts (with the car wheel) at bottom centre and ends with Henry and Karen seated at top right.

Goodfellas continuous tracking shot: https://www.bbc.com/culture/article/20200918-goodfellas-at-30-the-making-of-one-of-films-greatest-shots

Goodfellas | 1990 - Full Steadicam Tracking Shot 60fps 1080p HD
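The compositing in these posts isn't described in detail. Suppose each consecutive frame pair is reduced to a single global translation (no rotation or zoom). Then one way to lay frames out along the camera path is to accumulate the negated mean scene flow, since the camera moves opposite to the apparent motion of the scene. A minimal Python sketch under that assumption, with `frame_offsets` as a hypothetical helper:

```python
from typing import List, Tuple


def frame_offsets(flows: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Canvas position of each frame from the mean scene flow between frames.

    flows[i] is the mean (dx, dy) flow from frame i to frame i + 1.
    A point at pixel p in frame i appears at p + d in frame i + 1, so keeping it
    fixed on the canvas means shifting frame i + 1 by -d relative to frame i.
    """
    offsets = [(0.0, 0.0)]  # frame 0 starts at the origin
    for dx, dy in flows:
        ox, oy = offsets[-1]
        offsets.append((ox - dx, oy - dy))
    # shift everything so the canvas's top-left corner is at (0, 0)
    min_x = min(x for x, _ in offsets)
    min_y = min(y for _, y in offsets)
    return [(x - min_x, y - min_y) for x, y in offsets]
```

Each frame i would then be pasted onto a canvas at `offsets[i]`, with the canvas sized to the largest offset plus the frame size. Pure translation is only a rough model for a Steadicam shot, so drift accumulates over long sequences.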

Also in the box were 5 optical flow sensors... OK, I was toying with an idea for a robot to track its movement with the flow sensor. Never got it to work right. So I may have bought a few of these at one time for that project... but now I don't know what I'd do with them.

Tonight I was reading #ECCV2022 papers, in particular this tracking paper: "Particle Video Revisited: Tracking Through Occlusions Using Point Trajectories"

Encoder, feature correlation, sinusoidal encoding and a transformer-like MLP: a happy mix.

https://particle-video-revisited.github.io/

By Adam W. Harley & team
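For readers unfamiliar with the "sinusoidal encoding" in this post: it maps a scalar, such as a coordinate or time step, onto sines and cosines at geometrically spaced frequencies, which gives an MLP an easier-to-use representation of position. The exact variant used in the paper isn't stated here. This Python sketch therefore uses the transformer-style formula, and `sinusoidal_encoding`, `encode_xy` and `base` are illustrative assumptions:

```python
import math


def sinusoidal_encoding(pos: float, dim: int, base: float = 10000.0) -> list[float]:
    """Transformer-style encoding of a scalar into `dim` values.

    Pairs of (sin, cos) at frequencies base ** (-2i / dim), i = 0 .. dim/2 - 1,
    so low indices vary quickly with `pos` and high indices vary slowly.
    """
    if dim % 2:
        raise ValueError("dim must be even")
    enc = []
    for i in range(dim // 2):
        freq = base ** (-2 * i / dim)
        enc.append(math.sin(pos * freq))
        enc.append(math.cos(pos * freq))
    return enc


def encode_xy(x: float, y: float, dim: int) -> list[float]:
    """Encode a 2D point by concatenating per-axis encodings (2 * dim values)."""
    return sinusoidal_encoding(x, dim) + sinusoidal_encoding(y, dim)
```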

Autonomous Drone Dodges Obstacles Without GPS

If you're [Nick Rehm], you want a drone that can plan its own routes even at low altitudes, with unplanned obstacles blocking its way. And of course, you build it from scratch.

Why? Getting a drone that can fly a path and even return home when the battery is low, when the signal is lost, or on command is simple enough. Just go to your favorite retailer, search for "gps drone", and you can get one for a shockingly low price. This is possible because GPS receivers have become cheap, small, light, and power efficient. While all of these drones can fly a predetermined path, they usually do so by flying over obstacles rather than around them.

[Nick Rehm] has envisioned a quadcopter that can do all of the things a GPS-enabled drone can do, without the use of a GPS receiver. [Nick] makes this possible by using algorithms similar to those used by Google Maps, with data coming from a typical IMU, a camera for computer vision, LIDAR for altitude, and an Intel RealSense camera for detecting position and movement. A Raspberry Pi 4 running the Robot Operating System (ROS) runs the autonomous show, and a Teensy takes care of flight control duties.
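The write-up doesn't say which planner [Nick] uses beyond "algorithms similar to those used by Google Maps". Route planning of that kind is usually described in terms of graph shortest-path searches such as Dijkstra's algorithm or A*. The following is a generic A* sketch in Python on a hypothetical 2D occupancy grid, not his implementation. The grid format and the `astar` function are assumptions for illustration:

```python
import heapq
from typing import List, Optional, Tuple

Cell = Tuple[int, int]  # (row, col)


def astar(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """A* shortest path on a 4-connected grid (0 = free, 1 = obstacle).

    Returns the cells from start to goal inclusive, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:
        # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk back along parent links
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale entry: a cheaper route to this cell was found later
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = ng
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (ng + h(nxt), ng, nxt))
    return None
```

On a real drone the grid would be rebuilt continuously from depth sensing (e.g. the RealSense camera), and the path would be replanned as new obstacles appear.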

What we really enjoy about [Nick]'s video is his clear presentation of complex technologies and his great sense of humor about a project that has consumed untold amounts of time, patience, and duct tape.

We can't help but wonder if DARPA will let [Nick] fly his drone in a Subterranean Challenge event, such as the one hosted in an unfinished nuclear power plant in 2020.

#dronehacks #autonomousaircraft #autonomousdrone #gps #intelrealsense #lidar #opencv #opticalflow