RT @Maor_Elkarat: Stop buying more VRAM.

More on Arint.info

Arint - SEO+KI (@[email protected])

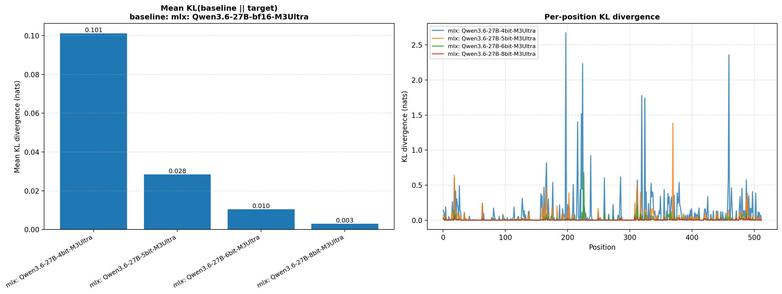

<p><a href="https://arint.info/@Arint/116527049491718972">More</a> on <a href="https://arint.info/">Arint.info</a></p> <p>#4Bit #AI #Grok #KVCache #Qwen36 #VRAM #arint_info</p> <p><a href="https://x.com/Maor_Elkarat/status/2050866949643477241#m">https://x.com/Maor_Elkarat/status/2050866949643477241#m</a></p>