#AiCrawler

- Among other things, I'm going to host a public website. How do I keep AI scrapers away? Is there something I can do with NGINX or fail2ban? At least to block the bulk of the problem :((

- I have an SSD where I installed YunoHost, and I've added a hard drive. For now there's nothing on it, just an empty ext4 partition. I found this tutorial, which is very clear, but I'd like some clarification about the directories. Basically, I'd like my apps to run on the SSD (to benefit from the speed, etc.) but the media (for example for an XMPP server or file sharing) to live on the hard drive. In the tutorial I see they mention "/home/yunohost.app" for "heavy data of YunoHost applications" and "/home/yunohost.multimedia" for "heavy data shared between several applications", but it's still unclear to me.

If anyone can enlighten, help, or guide me, I'd be very grateful!!! THANKS

#yunohost #autoHebergement #selfHost #nginx #fail2ban #aiscraper #aicrawler
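For the NGINX/fail2ban question above, one common approach follows the fail2ban idea: scan the web server's access log for requests whose User-Agent names a known AI crawler, and ban IPs that cross a threshold. The sketch below does that scan in plain Python rather than as a real fail2ban filter. It assumes NGINX's default `combined` log format; the token list `AI_UA_TOKENS` and the `max_hits` threshold are hypothetical placeholders. It only catches crawlers that identify themselves honestly; bots spoofing a browser User-Agent slip through.

```python
import re
from collections import Counter

# Hypothetical blocklist: substrings found in the User-Agent of some
# self-declared AI crawlers. Extend it from your own access logs.
AI_UA_TOKENS = ("GPTBot", "ClaudeBot", "CCBot", "Bytespider", "PetalBot")

# NGINX "combined" format:
# $remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def offending_ips(log_lines, max_hits=5):
    """Return IPs whose AI-crawler requests reach max_hits (like fail2ban's maxretry)."""
    hits = Counter()
    for line in log_lines:
        m = COMBINED.match(line)
        if m and any(tok.lower() in m["ua"].lower() for tok in AI_UA_TOKENS):
            hits[m["ip"]] += 1
    return sorted(ip for ip, n in hits.items() if n >= max_hits)
```

In a real deployment the same pattern would live in a fail2ban `failregex`, or the User-Agent check in an NGINX `map` on `$http_user_agent` that returns 403; this sketch only shows the matching logic.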

@metin I moved from GitHub to a Gitea instance that I self-host on OVH, a European cloud provider.

I also moved my domain from Google's .dev top-level domain to EURid's .eu.

I'm very happy with these changes. I no longer have to put up with the AI obsession I ran into every time I did something on GitHub.

On top of that, I no longer use Cloudflare, and I recently had to install Anubis (Web AI Firewall) to stop attacks from AI crawlers run by OpenAI and other Big Tech companies, which were scanning every commit on my Gitea (public repositories, of course).

#github #ovhcloud #eu #europe #eurid #gitea #aicrawler #cloudflare #selfhost #opensource #privacy #firewall

🌐🌿 Sustainable web practices:

Disallowing web crawlers? Only allowing the two or three most sustainable web crawlers? Only getting visitors from direct recommendations? Is editing robots.txt enough?

What do you think?

#noBot #noBigTech #searchEngine #AICrawler #robotsTxt #sustainability #lowTech #solarPunk #slowWeb #smallWeb
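Editing robots.txt only works for crawlers that choose to honor it; it is a request, not an enforcement mechanism. Accepting that limitation, the "only allow two or three crawlers" option can be sketched as a generated robots.txt, checked with Python's standard `urllib.robotparser`. The names in `ALLOWED_CRAWLERS` are placeholders, not a judgment about which crawlers are the most sustainable.

```python
from urllib.robotparser import RobotFileParser

# Placeholder allowlist: swap in whichever crawlers you decide to trust.
ALLOWED_CRAWLERS = ["DuckDuckBot", "Bingbot"]


def build_robots_txt(allowed: list[str]) -> str:
    """One group that allows the listed crawlers, then a catch-all that disallows everyone else."""
    lines = [f"User-agent: {agent}" for agent in allowed]
    lines.append("Disallow:")       # empty Disallow = nothing is off-limits for this group
    lines.append("")                # blank line ends the group
    lines.append("User-agent: *")
    lines.append("Disallow: /")     # every other crawler is asked to stay out entirely
    return "\n".join(lines) + "\n"


def can_crawl(robots_txt: str, agent: str, url: str) -> bool:
    """Ask the stdlib parser whether a well-behaved crawler named `agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)
```

A crawler that ignores robots.txt is unaffected by any of this, so it answers the "is it enough?" question only for polite bots.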

Behold the AI bots that Cloudflare blocked from this blog

I don’t like writing for free–social media blatantly excepted–so when I watched a panel at Web Summit in mid-November about the effect of AI-model crawlers on news-site revenue and the Pay Per Crawl initiative that Cloudflare was proposing as a solution, I had to take notes.

Then a few weeks after I got home from Lisbon, I realized I could take action: While Pay Per Crawl remains in an invitation-only beta test, Cloudflare’s AI Crawl Control is open to the public and included in that Internet infrastructure firm’s free tier. Then I learned that it’s shockingly easy to add Cloudflare’s services to a WordPress.com blog.

Crawl Control comes with a preset list of bots to block and bots to allow, grouped by type: “AI Assistant” bots that take action in response to user requests are fine; “AI Search” bots that support “AI-driven search experiences” are also okay (contrary to Cloudflare CEO Matthew Prince’s discussion of them in that Web Summit panel); “AI Crawler” bots that collect content for training AI models are not.
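The preset described above boils down to a category-to-action policy. Here is a minimal sketch of that idea. The bot-to-category table covers only a few bots named in this post and is a hypothetical stand-in for Cloudflare's own, much longer list; `decide` is an illustrative helper, not a Cloudflare API.

```python
from enum import Enum


class Category(Enum):
    AI_ASSISTANT = "AI Assistant"  # acts in response to a user request
    AI_SEARCH = "AI Search"        # supports AI-driven search experiences
    AI_CRAWLER = "AI Crawler"      # collects content for training AI models


# Mirrors the preset: assistants and search allowed, training crawlers blocked.
POLICY = {
    Category.AI_ASSISTANT: "allow",
    Category.AI_SEARCH: "allow",
    Category.AI_CRAWLER: "block",
}

# Hypothetical classification table for a few bots mentioned in this post.
BOT_CATEGORY = {
    "ChatGPT-User": Category.AI_ASSISTANT,
    "DuckAssistBot": Category.AI_ASSISTANT,
    "Applebot": Category.AI_SEARCH,
    "OAI-SearchBot": Category.AI_SEARCH,
    "PetalBot": Category.AI_CRAWLER,
}


def decide(bot_name: str, default: str = "allow") -> str:
    """Look up the bot's category and return the policy action; unknown bots get `default`."""
    category = BOT_CATEGORY.get(bot_name)
    if category is None:
        return default
    return POLICY[category]
```

Keeping the policy keyed by category rather than by bot means a newly classified bot inherits the right action without touching the rules.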

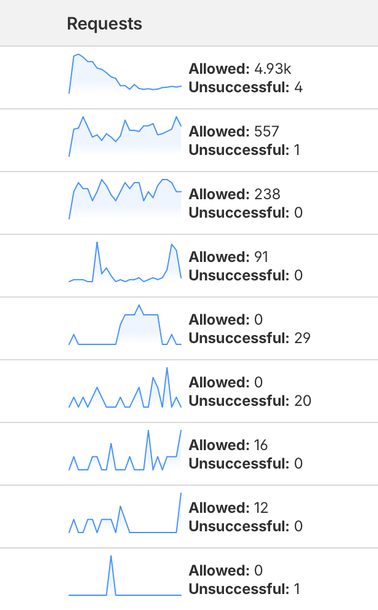

I took a screenshot of this part of my Cloudflare dashboard at almost the same time each afternoon this week, and these are my totals:

- Huawei’s PetalBot was the highest-volume AI crawler, with Cloudflare reporting 224 “unsuccessful” request attempts from that Chinese tech giant’s AI crawler (Cloudflare doesn’t take direct credit for blocking bots in this interface), followed by Anthropic’s Claude-SearchBot, with 165 unsuccessful requests.

- Among AI assistants, the second-highest category by volume, OpenAI’s ChatGPT-User had 1,251 allowed requests, DuckDuckGo’s DuckAssistBot had 36 allowed, and Perplexity’s Perplexity-User had one unsuccessful request.

- The top bot in AI search came from an unlikely place: Apple’s Applebot, with 734 allowed. OpenAI’s OAI-SearchBot was far behind, with 128 allowed requests, while Perplexity’s PerplexityBot had all eight request attempts fail.

To put this in context, the top two search engine crawlers had far higher numbers. Google’s Googlebot somehow racked up a little over 20,000 requests (more than 30 times the presumably-human traffic my WordPress dashboard shows for the last five days) plus 23 failed requests. Microsoft’s Bingbot came in second with 3,003 allowed requests and two unsuccessful ones.

As Cloudflare’s CEO complained in that Web Summit panel, Googlebot feeds into both Google’s traditional search and the AI Overview search results that Web publishers now blame for dangerous declines in their search traffic. There’s nothing I can do about that from this side of the screen except hope that Cloudflare’s Pay Per Crawl and other advocacy efforts stir some rethinking at Google.

But I can’t tell you how well Pay Per Crawl works, because almost three weeks after applying to join the private beta I’m still waiting for my invitation. I imagine I’ll be waiting much longer before an AI-crawler operator decides that my tiny contribution to the Web’s collective content is worth sending me some money.

#AI #AIBot #AICrawlControl #AICrawler #Amazon #Applebot #Bingbot #ChatGPT #Cloudflare #Huawei #OpenAI #PayPerCrawl #Petalbot

**Do AI Crawlers Threaten Publisher Revenue?**

Publishers that depend on advertising face serious risk as AI crawlers scrape their websites without generating any human traffic. Large companies are adopting solutions such as Senthor to block crawling or charge for it. There is still strong demand from smaller companies. Tags: #AIcrawler #DigitalMarketing #ContentStrategy

https://www.reddit.com/r/SaaS/comments/1o5sxvr/do_publishers_understand_whats_coming_with_ai/

10 hours of research on AI SEO crawlers: AI crawlers don't execute JavaScript; they only read the HTML. Content must be rendered server-side. Internal linking matters a lot, so build clear topic clusters. A sitemap and robots.txt are still necessary. Add the new LLMs.txt file. Check your Cloudflare settings: it may be blocking AI bots without your knowledge.

#AIcrawler #SEOtips #DigitalMarketing #Tối_ưu_SEO #Công_cụ_AI

https://www.reddit.com/r/SaaS/comments/1nlxduj/i_spent_10_hours_researching_ai_seo_crawlers/
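To check the "AI crawlers only read the HTML" point on your own pages, you can look at a page the way a non-JavaScript client would: parse the raw HTML, skip `<script>` and `<style>` contents, and search what remains. This is a rough sketch using Python's standard `html.parser`; it takes the post's claim that these crawlers do not execute JavaScript as an assumption, and the sample pages and phrase in the usage are made up.

```python
from html.parser import HTMLParser


class RawTextExtractor(HTMLParser):
    """Collects the text present in raw HTML, ignoring <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self._skip = 0      # > 0 while inside <script> or <style>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def visible_without_js(html: str, phrase: str) -> bool:
    """True if `phrase` is in the page text without running any JavaScript."""
    parser = RawTextExtractor()
    parser.feed(html)
    parser.close()
    return phrase in " ".join(parser.chunks)
```

A server-rendered page passes this check; a client-rendered shell whose content only appears after its script runs does not.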

Anubis is toast?!

Codeberg

However, we can confirm that at least Huawei networks now send the challenge responses, and they do seem to take a few seconds to actually compute the answers. It looks plausible, so we assume that AI crawlers leveled up their computing power to emulate more of real browser behaviour to bypass the diversity of challenges that platforms enabled to avoid the bot army.
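Anubis-style challenges rest on proof of work: the client must spend CPU finding an answer, while the server checks it with a single hash. The sketch below shows that asymmetry with a generic SHA-256 "leading zeros" puzzle; it is a simplified illustration, not Anubis's actual code or protocol, and `solve`/`verify` are hypothetical names. Each extra hex zero of `difficulty` multiplies the expected work by 16 (about 16**difficulty attempts on average), so challenges that "take a few seconds" are meant to make mass crawling expensive, and crawlers willing to pay that cost get through.

```python
import hashlib
from itertools import count


def _digest(challenge: str, nonce: int) -> str:
    """SHA-256 hex digest of the challenge string followed by the nonce."""
    return hashlib.sha256(f"{challenge}{nonce}".encode()).hexdigest()


def solve(challenge: str, difficulty: int) -> tuple[int, str]:
    """Client side: brute-force a nonce whose digest starts with `difficulty` zero hex digits."""
    target = "0" * difficulty
    for nonce in count():
        digest = _digest(challenge, nonce)
        if digest.startswith(target):
            return nonce, digest


def verify(challenge: str, difficulty: int, nonce: int) -> bool:
    """Server side: a single hash checks work that cost the client many."""
    return _digest(challenge, nonce).startswith("0" * difficulty)
```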