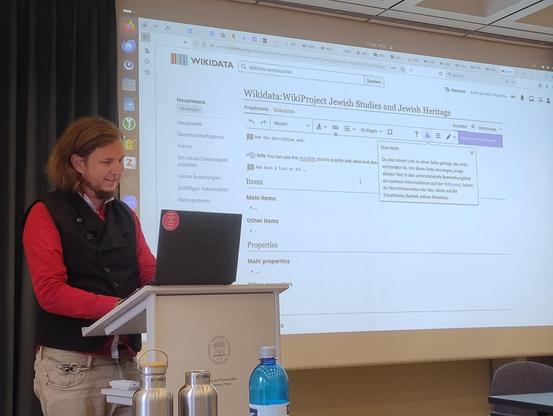

Today and tomorrow we are meeting with many exciting projects at the beautiful #adwmainz for the hands-on workshop "#Wikidata für die Jüdischen Studien" (Wikidata for Jewish Studies).

We organized it together with the FID Jüdische Studien (@JuedStudien), and we look forward to the exchange!

These letters (#Briefe) are the subject of the long-term project „Buber-Korrespondenzen Digital: Das Dialogische Prinzip in Martin Bubers Gelehrten- und Intellektuellennetzwerken im 20. Jahrhundert“ (#BKD), which is funded under the Academies' Programme (Akademienprogramm) and carried out at #adwmainz and #GoetheUniversität Frankfurt.

In future, you can also learn more about Buber, his work and influence, his letters, and the project on our Instagram channel: https://www.instagram.com/buber.korrespondenzendigital/

🧵 3/3

Today is Girls' Day at the Akademie der Wissenschaften und der Literatur Mainz. Together with 15 students, we are rebuilding a small Wikidata web application on Raspberry Pis that searches for museums, parks, and schools nearby 👩💻

https://gitlab.rlp.net/adwmainz/digicademy/miscellaneous/around-me

Live version: https://around-me-40e659.pages.gitlab.rlp.net/

#ADWMainz #DigitalHumanities #GirlsDay #GirlsDay2025 #RaspberryPi #Wikidata
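
For readers curious how such a nearby search can work, here is a minimal sketch in Python. It is not the code from the around-me repository. It assumes the public Wikidata Query Service and its `wikibase:around` geo service, and the names `build_query`, `fetch_places`, and `PLACE_CLASSES`, as well as the User-Agent string, are made up for this illustration.

```python
import json
import urllib.parse
import urllib.request

# Public Wikidata Query Service endpoint.
WIKIDATA_ENDPOINT = "https://query.wikidata.org/sparql"

# Wikidata classes for the three kinds of places named in the post.
PLACE_CLASSES = {"museum": "Q33506", "park": "Q22698", "school": "Q3914"}


def build_query(lat: float, lon: float, radius_km: float = 1.0, limit: int = 50) -> str:
    """Build a SPARQL query for museums, parks, and schools around a point."""
    values = " ".join(f"wd:{qid}" for qid in PLACE_CLASSES.values())
    # WKT points are written as "Point(longitude latitude)".
    return f"""
SELECT DISTINCT ?place ?placeLabel ?dist WHERE {{
  VALUES ?class {{ {values} }}
  SERVICE wikibase:around {{
    ?place wdt:P625 ?location .
    bd:serviceParam wikibase:center "Point({lon} {lat})"^^geo:wktLiteral .
    bd:serviceParam wikibase:radius "{radius_km}" .
    bd:serviceParam wikibase:distance ?dist .
  }}
  ?place wdt:P31/wdt:P279* ?class .
  SERVICE wikibase:label {{ bd:serviceParam wikibase:language "de,en". }}
}}
ORDER BY ?dist
LIMIT {limit}
""".strip()


def fetch_places(lat: float, lon: float, radius_km: float = 1.0) -> list:
    """Send the query to the endpoint and return uri, label, and distance per place."""
    params = urllib.parse.urlencode(
        {"query": build_query(lat, lon, radius_km), "format": "json"}
    )
    request = urllib.request.Request(
        f"{WIKIDATA_ENDPOINT}?{params}",
        # Hypothetical User-Agent; replace it with your own contact details.
        headers={"User-Agent": "around-me-sketch/0.1 (example)"},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        data = json.load(response)
    return [
        {
            "uri": row["place"]["value"],
            "label": row["placeLabel"]["value"],
            "km": float(row["dist"]["value"]),
        }
        for row in data["results"]["bindings"]
    ]
```

Calling `fetch_places(50.0, 8.27)` would query places within one kilometre of a point in Mainz; the call needs network access, and the results depend on what Wikidata contains at that moment.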

Hello #Fediverse,

we are the project "Buber-Korrespondenzen Digital" (#BKD) and we are new here (#neuhier)!

Are you interested in Martin #Buber, or in how some 43,000 letters (#Briefe) are made digitally accessible to researchers and other interested readers? From now on, we will post here about our activities, offer insights into our work, and keep you up to date.

#Introduction #Briefedition #DigitalHumanities #DH #DigitalScholarlyEdition #MartinBuber #ADWMainz #UniFrankfurt

Major milestone in digital cuneiform studies: Researchers from Mainz, Marburg, and Würzburg present an innovative tool with many new possibilities for Hittite studies 👉 https://press.uni-mainz.de/cuneiforms-new-digital-tool-for-researchers/ @dfg_public

#cuneiform #hittitology #Hittite #CuneiformText #CuneiformResearch #CuneiformStudies #TextEdition #Transliteration #UniMainz #UniMarburg #UniWürzburg #AdWMainz

Milestone for digital cuneiform research: Researchers from Mainz, Marburg, and Würzburg present an innovative tool that offers many new possibilities in Hittitology 👉 https://presse.uni-mainz.de/keilschrift-neues-digitales-werkzeug-fuer-die-forschung/ @dfg_public

#Keilschrift #Hethitologie #Hethitisch #Keilschriftforschung #Textedition #Transliteration #UniMainz #UniMarburg #UniWürzburg #AdWMainz

The dreary rainy weather almost suits the 🧩 E-Science-Tage 2025 🧩:

🔜 Indoors, Tabea Tietz and Jonatan Jalle Steller will speak at 16:20 about "Knowledge Graph-based Research Data Integration for NFDI4Culture and Beyond".

We look forward to seeing you!

If you cannot attend in person, or would like to go over the details again, you can find the presentation here: https://zenodo.org/records/14989011

^sh

#NFDI4Culture #NFDIrocks #fizkarlsruhe #adwmainz #Escitage @jonatan @tabea

Knowledge Graph-based Research Data Integration for NFDI4Culture and Beyond

Each NFDI consortium establishes research data infrastructures tailored to its specific domain. To facilitate interoperability across different domains and consortia, the NFDIcore ontology has been developed [1]. It serves as a mid-level ontology to represent metadata about NFDI resources, e.g. agents, projects, and data portals. NFDIcore establishes mappings to an array of standards across domains, including the Basic Formal Ontology, schema.org, DCTERMS, and DCAT. For domain-specific research questions, NFDIcore is extended following a modular approach, for example with the NFDI-MatWerk ontology (MWO), the NFDI4DataScience ontology (NFDI4DSO), the NFDI4Memory ontology (MO), and the NFDI4Culture ontology (CTO). CTO represents resources within the NFDI4Culture domains Architecture, Musicology, Art History, Media Science, and the Performing Arts. The ontology addresses domain-specific research questions, connects diverse cultural entities, and facilitates the efficient organization, retrieval, and analysis of cultural data. The interconnection of NFDI consortia by means of Linked Open Data (LOD) opens up new research horizons. This requires a workflow that covers data discovery, harvesting, preprocessing, mapping, and integration into a knowledge graph (KG); the workflow is described here using the NFDI4Culture KG as a use case. The NFDI4Culture KG acts as a single point of access to various decentralized research data resources and aggregates diverse and isolated data from the research domain, enabling discoverability, interoperability, and reusability of cultural heritage (CH) data. The KG consists of the Research Information Graph (RIG), describing metadata such as publishers, standards, and licenses, and the Research Data Graph (RDG), interconnecting the content metadata provided by data portals.
Taking into account the challenges and objectives of NFDI4Culture to aggregate a diverse landscape of CH research data, we have designed a Python package of reusable LOD components, harvesters using these components, a SPARQL endpoint explorer (shmarql), and an ETL (Extract, Transform, Load) environment [2]. The latter consists of six modular workflow components, adaptable for independent use or within a comprehensive, automated ingest routine:

1: Run harvest routines. This uses RDF-based action files with schema.org step definitions to scrape data with external tools, link the feed to its RIG metadata, and generate persistent resource identifiers. To ensure harmonization, Python-based transformations convert resources in common cultural-heritage data formats into nfdicore/cto triples when needed.

2: Clean harvested data. To ensure harmonization between the harvested data feed and its associated action file, triples representing the harvesting state are added or deleted.

3: Commit harvest state. Changes made by a harvesting run are pushed to the pipeline’s own repository to ensure up-to-date action files.

4: Prepare and index data. If there are changes in a data feed, data directories are automatically updated or created and search indexes are produced.

5: Build a new endpoint. To prevent downtimes, a new SPARQL endpoint container is built while the previous version remains available. Once the new endpoint becomes operational, the old container is stopped and removed.

6: Publish statistics. Statistics about the integrated data feeds are published in a dashboard. It supports data analysis and visualizations based on provided SPARQL queries.

[1] https://ise-fizkarlsruhe.github.io/nfdicore/

[2] https://gitlab.rlp.net/adwmainz/nfdi4culture/knowledge-graph/culture-kg-kitchen
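
The zero-downtime swap described in step 5 (build a new endpoint) can be illustrated with a small simulation. This is only a sketch of the general pattern and not the pipeline's actual implementation: `Endpoint`, `EndpointSwitcher`, and the `health_check` callback are hypothetical names, and no real containers are started.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Endpoint:
    """Stand-in for a SPARQL endpoint container (hypothetical)."""
    name: str
    running: bool = False

    def start(self) -> None:
        self.running = True

    def stop(self) -> None:
        self.running = False


@dataclass
class EndpointSwitcher:
    """Keeps the old endpoint live until the new one passes a health check."""
    health_check: Callable[[Endpoint], bool]
    live: Optional[Endpoint] = None
    log: list = field(default_factory=list)

    def deploy(self, name: str) -> bool:
        # Start the new endpoint alongside the current one.
        candidate = Endpoint(name)
        candidate.start()
        self.log.append(f"started {name}")

        # If the candidate is not operational, drop it and keep the old one.
        if not self.health_check(candidate):
            candidate.stop()
            self.log.append(f"discarded {name}")
            return False

        # Switch over first, then stop the previous endpoint.
        previous, self.live = self.live, candidate
        self.log.append(f"switched to {name}")
        if previous is not None:
            previous.stop()
            self.log.append(f"stopped {previous.name}")
        return True
```

The key design choice is the ordering: the previous endpoint is stopped only after the new one has passed its check and taken over, so a failed build never interrupts the live service.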

🤩 NFDI4Culture is currently represented at the EOSC Symposium 2024 (https://eosc.eu/symposium2024/) in Berlin with a joint poster by #adwmainz and #fizkarlsruhe on the topic

📄 ‘Finding interdisciplinary connections - Implementing FAIR research information in NFDI4Culture’.

#NFDIrocks #NFDIcore @NFDI #EOSC #EOSCSymposium2024 #NFDI #NFDI4Culture #fizkarlsruhe #adwmainz @fiz_karlsruhe #CultureInformationPortal @lysander07 @tabea @sourisnumerique @jonatan @epoz @fiz_karlsruhe @heikef

^gp (AB)