#statstab #543 How hazard ratios can mislead and why it matters in practice

Thoughts: Another "*insert effect size measure* has issues" paper. If you use HRs, you'd better study up.

#observational #hazardratio #effectsize #misconceptions

#causalinference

https://link.springer.com/article/10.1007/s10654-025-01250-9

How hazard ratios can mislead and why it matters in practice - European Journal of Epidemiology

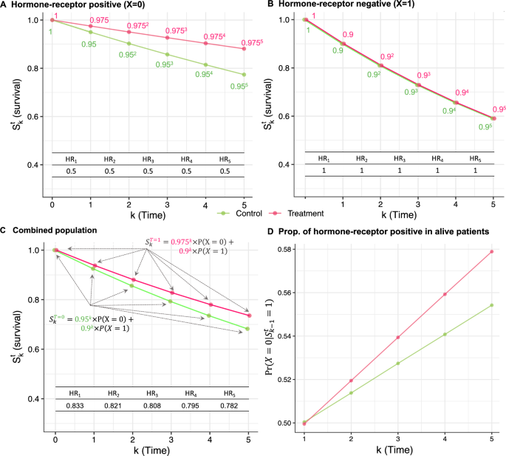

Hazard ratios are routinely reported as effect measures in clinical trials and observational studies. However, many methodological works have raised concerns about the interpretation of hazard ratios as causal effects. These concerns are often related to three points: (i) depletion of susceptible individuals leads to selection bias and complicates the causal interpretation of the hazard ratio, (ii) the hazard ratio is not collapsible, and (iii) the conventional proportional hazards assumption rarely holds in medical studies. We discuss the relation between these three points. We ground our presentation on an example about the effect of endocrine therapy in reducing the risk of recurrence or death in a population of patients with breast cancer. We also describe why survival curves and risk differences do not exhibit any of the undesirable properties of hazard ratios.
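
To make points (i) and (ii) concrete, here is a minimal sketch with made-up numbers (not taken from the paper). It assumes a randomized trial with two equally sized strata, constant baseline hazards of 0.1 and 1.0, and a conditional hazard ratio of 0.5 in both strata. All names and values are hypothetical. It computes the marginal (population-level) hazard ratio analytically and compares the marginal risk difference with the average of the stratum-specific ones.

```python
import math

# Hypothetical setup (an assumption for illustration, not from the paper):
# two equally sized strata in a randomized trial, constant baseline hazards,
# and the same conditional hazard ratio in both strata.
BASE_HAZARDS = {"low_risk": 0.1, "high_risk": 1.0}
STRATUM_WEIGHT = 0.5
CONDITIONAL_HR = 0.5


def stratum_rate(base_hazard, treated):
    """Constant hazard within a stratum, scaled by the conditional HR if treated."""
    return base_hazard * (CONDITIONAL_HR if treated else 1.0)


def stratum_survival(base_hazard, treated, t):
    """Exponential survival within one stratum."""
    return math.exp(-stratum_rate(base_hazard, treated) * t)


def marginal_survival(treated, t):
    """Population survival: weighted mixture of the stratum survival curves."""
    return sum(
        STRATUM_WEIGHT * stratum_survival(h, treated, t)
        for h in BASE_HAZARDS.values()
    )


def marginal_hazard(treated, t):
    """Population hazard = mixture density / mixture survival."""
    density = sum(
        STRATUM_WEIGHT * stratum_rate(h, treated) * stratum_survival(h, treated, t)
        for h in BASE_HAZARDS.values()
    )
    return density / marginal_survival(treated, t)


def marginal_hazard_ratio(t):
    """Hazard ratio among those still event-free at time t, ignoring strata."""
    return marginal_hazard(True, t) / marginal_hazard(False, t)


def marginal_risk_difference(t):
    """Risk by time t under treatment minus risk by time t under control."""
    return (1 - marginal_survival(True, t)) - (1 - marginal_survival(False, t))


def average_stratum_risk_difference(t):
    """Weighted average of the stratum-specific risk differences."""
    return sum(
        STRATUM_WEIGHT
        * ((1 - stratum_survival(h, True, t)) - (1 - stratum_survival(h, False, t)))
        for h in BASE_HAZARDS.values()
    )


if __name__ == "__main__":
    for t in (0.0, 1.0, 2.0, 5.0):
        print(
            f"t={t:>4}: marginal HR={marginal_hazard_ratio(t):.3f}, "
            f"marginal RD={marginal_risk_difference(t):.3f}, "
            f"avg stratum RD={average_stratum_risk_difference(t):.3f}"
        )
```

In this toy setup the marginal HR equals 0.5 at t = 0 but is roughly 0.79 by t = 2, even though the conditional HR is 0.5 in every stratum at every time. High-risk people leave the control arm faster, so the two arms stop being comparable among survivors. The marginal risk difference, by contrast, always matches the weighted average of the stratum-specific risk differences.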