#NewPaper out: "Twenty-five years of Neobiota: building a community for invasion science in Europe and beyond" by Ingo Kowarik and many great colleagues @TheresaHenke

Twenty-five years of Neobiota: building a community for invasion science in Europe and beyond

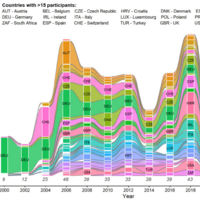

While the influential role of scientific associations in shaping research fields is well recognized, their impact within the domain of invasion science remains underexplored. This review combines qualitative narrative with quantitative metrics to trace the development of Neobiota, the first European non-profit and non-governmental scientific association dedicated to the study of biological invasions. Since its foundation in 1999, the Neobiota network has aimed to foster scientific exchange and collaboration, advance and integrate research across all dimensions of invasion science, and disseminate findings to support evidence-based policies. Evolving rapidly from a German working group into Europe’s leading network for invasion science, Neobiota has united researchers and practitioners across disciplines, taxa, and national boundaries. In contrast to many other associations, Neobiota operates with a low-threshold, non-bureaucratic governance model, promoting inclusivity and cross-border engagement. We document how its biennial conferences—hosted in 12 European countries between 2000 and 2024—have supported thematic diversity (ranging from molecular biology to socio-economy), increasing international participation (9–47 countries globally), and active policy outreach. Thematically, the focus of research presented at conferences has shifted over time from ecological patterns and species inventories to environmental impacts, predictive modeling, management strategies, and socio-political dimensions of invasions. Trends in equity reveal progress toward gender parity among keynote speakers and session chairs. Neobiota has also supported the dissemination of knowledge through two publication platforms: the Neobiota series (2000–2008) and the open-access, peer-reviewed journal NeoBiota launched in 2011. This journal currently operates under an Editorial Board with 75 subject editors and a team of five Co-Editors-in-Chief. 
We conclude that over the past 25 years, Neobiota has been instrumental in building a cohesive and inclusive scientific community globally, advancing and disseminating interdisciplinary research on biological invasions, and informing policy, particularly in support of legal frameworks to prevent and mitigate the negative impacts of non-native species.