Representation learning often emphasizes metric preservation. We instead build symplectic structural invariance directly into the representation.

https://arxiv.org/abs/2512.19409

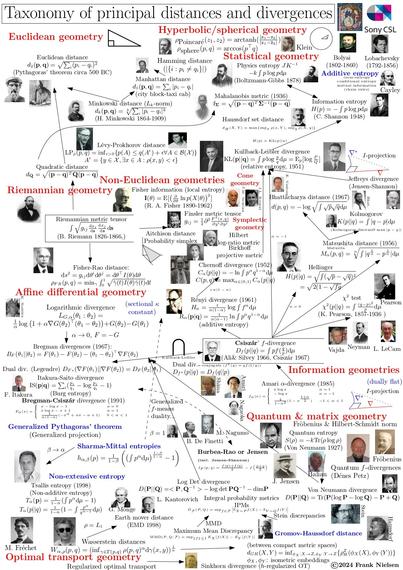

We embed Hamiltonian/symplectic geometry by making the RNN state dynamics a symplectomorphism of a specific normal form (a cotangent lift of a base diffeomorphism followed by an exact fibre translation), which preserves Legendre duality (information geometry) through time. This yields structure-preserving representations enforced by the latent dynamics rather than imposed indirectly via the output.
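To make "RNN state dynamics as a symplectomorphism" concrete, here is a minimal Python sketch under stated assumptions. It is not the SR implementation from the paper. We assume a state z = (q, p) with q, p in R^2, an input-driven affine base map q ↦ A q + B u, its cotangent lift p ↦ A^{-T} p, and then an exact fibre translation p ↦ p + ∇g(q') with the hypothetical potential g(q) = Σ_i log cosh((W q)_i), so that ∇g(q) = W^T tanh(W q). The matrices A, B, W and the helpers `sr_step`, `jacobian` and `symplectic_defect` are illustrative names. The script checks numerically that the Jacobian J of each step satisfies J^T Ω J = Ω.

```python
import math

# Hypothetical 2-D example; A, B, W are illustrative, not from the paper.
A = [[0.9, 0.3], [-0.2, 1.1]]   # invertible base map (det = 1.05)
B = [1.0, -0.5]                 # input weights for a scalar input u
W = [[0.7, -1.2], [0.4, 0.8]]   # mixing matrix inside the potential g
OMEGA = [[0, 0, 1, 0], [0, 0, 0, 1], [-1, 0, 0, 0], [0, -1, 0, 0]]


def matvec(M, v):
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def transpose(M):
    return [list(row) for row in zip(*M)]


def inv2(M):
    # Inverse of a 2x2 matrix.
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


A_INV_T = transpose(inv2(A))


def grad_g(q):
    # g(q) = sum_i log cosh((W q)_i), hence grad g(q) = W^T tanh(W q).
    return matvec(transpose(W), [math.tanh(z) for z in matvec(W, q)])


def sr_step(q, p, u):
    # 1) Cotangent lift of the affine base map q -> A q + B u.
    q_new = [x + b * u for x, b in zip(matvec(A, q), B)]
    p_lift = matvec(A_INV_T, p)
    # 2) Exact fibre translation p -> p + grad g(q_new).
    p_new = [a + s for a, s in zip(p_lift, grad_g(q_new))]
    return q_new, p_new


def jacobian(z, u, h=1e-6):
    # Central-difference Jacobian of z = (q, p) -> (q', p'); J[i][k] = d out_i / d z_k.
    cols = []
    for k in range(len(z)):
        zp, zm = list(z), list(z)
        zp[k] += h
        zm[k] -= h
        fp = sum(sr_step(zp[:2], zp[2:], u), [])
        fm = sum(sr_step(zm[:2], zm[2:], u), [])
        cols.append([(a - b) / (2 * h) for a, b in zip(fp, fm)])
    return transpose(cols)


def symplectic_defect(J):
    # max |J^T Omega J - Omega|; zero (up to finite-difference error) for a symplectic step.
    R = matmul(matmul(transpose(J), OMEGA), J)
    return max(abs(R[i][j] - OMEGA[i][j]) for i in range(4) for j in range(4))


if __name__ == "__main__":
    q, p = [0.2, -0.4], [0.1, 0.3]
    for t, u in enumerate([0.5, -1.0, 0.3]):
        print(t, symplectic_defect(jacobian(q + p, u)))
        q, p = sr_step(q, p, u)
```

Exactness matters in this sketch: the lower-left block of J is the Jacobian of the translation term times A, and the defect vanishes only because that Jacobian (the Hessian of g) is symmetric. Translating p by a function of q' that is not a gradient would, in general, leave a nonzero defect.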

#ReservoirComputing #RepresentationLearning #InformationGeometry #SymplecticGeometry #HamiltonianDynamics #GeometricDeepLearning #DynamicalSystems #PhysicsInformedML

Symplectic Reservoir Representation of Legendre Dynamics

Modern learning systems act on internal representations of data, yet how these representations encode underlying physical or statistical structure is often left implicit. In physics, conservation laws of Hamiltonian systems, such as symplecticity, guarantee long-term stability, and recent work has begun to hard-wire such constraints into learning models at the loss or output level. Here we ask a different question: what would it mean for the representation itself to obey a symplectic conservation law in the sense of Hamiltonian mechanics? We express this symplectic constraint through Legendre duality, the pairing between primal and dual parameters, which becomes the structure that the representation must preserve. We formalize Legendre dynamics as stochastic processes whose trajectories remain on Legendre graphs, so that the evolving primal-dual parameters stay Legendre dual. We show that this class includes linear time-invariant Gaussian process regression and Ornstein-Uhlenbeck dynamics. Geometrically, we prove that the maps that preserve all Legendre graphs are exactly the symplectomorphisms of cotangent bundles of the form "cotangent lift of a base diffeomorphism followed by an exact fibre translation". Dynamically, this characterization leads to the design of a Symplectic Reservoir (SR), a reservoir-computing architecture that is a special case of a recurrent neural network and whose recurrent core is generated by Hamiltonian systems that are at most linear in the momentum. Our main theorem shows that every SR update has this normal form and therefore transports Legendre graphs to Legendre graphs, preserving Legendre duality at each time step. Overall, SR implements a geometrically constrained, Legendre-preserving representation map that injects symplectic geometry and Hamiltonian mechanics directly at the representational level.
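The normal-form claim can be probed numerically. The Python sketch below assumes, for illustration only, that a Legendre graph is the graph p = ∇F(q) of the gradient of a potential F, here the log-partition F(θ) = log(1 + e^{θ1} + e^{θ2}) of a three-category distribution, so that ∇F maps natural parameters to expectation parameters. It pushes that graph through one update of a hypothetical form (affine base map, cotangent lift, exact fibre translation by ∇g with g(q) = Σ_i log cosh(q_i)) and checks that the image is again locally a gradient graph, i.e. that q' ↦ p' has a symmetric Jacobian. Convexity of the transported potential is not checked. The names A, B, C_ASYM, `image_momentum` and `asymmetry` are illustrative assumptions, not the paper's.

```python
import math

# Illustrative 2-D choices (hypothetical, not taken from the paper).
A = [[0.9, 0.3], [-0.2, 1.1]]        # invertible base map, det = 1.05
B = [1.0, -0.5]                      # input weights for a scalar input u
C_ASYM = [[0.0, 0.8], [-0.8, 0.0]]   # non-exact translation (not a Hessian)


def matvec(M, v):
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


def transpose(M):
    return [list(row) for row in zip(*M)]


def inv2(M):
    # Inverse of a 2x2 matrix.
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


A_INV = inv2(A)
A_INV_T = transpose(A_INV)


def grad_F(theta):
    # F(theta) = log(1 + e^theta_1 + e^theta_2); grad F sends natural
    # parameters to expectation parameters (a Legendre-dual pair).
    e = [math.exp(t) for t in theta]
    z = 1.0 + sum(e)
    return [x / z for x in e]


def grad_g(q):
    # Exact fibre translation: g(q) = sum_i log cosh(q_i), so grad g = tanh(q).
    return [math.tanh(x) for x in q]


def image_momentum(q_new, u, exact=True):
    # Momentum of the transported graph above q_new: pull q_new back to the
    # pre-image q, read p = grad F(q), apply the cotangent lift, then translate.
    q = matvec(A_INV, [x - b * u for x, b in zip(q_new, B)])
    p_lift = matvec(A_INV_T, grad_F(q))
    shift = grad_g(q_new) if exact else matvec(C_ASYM, q_new)
    return [a + s for a, s in zip(p_lift, shift)]


def asymmetry(q_new, u, exact=True, h=1e-6):
    # |dp_1/dq_2 - dp_2/dq_1| via central differences; any graph that is
    # locally the gradient of a potential must give zero here.
    def partial(i, j):
        qp, qm = list(q_new), list(q_new)
        qp[j] += h
        qm[j] -= h
        diff = image_momentum(qp, u, exact)[i] - image_momentum(qm, u, exact)[i]
        return diff / (2 * h)

    return abs(partial(0, 1) - partial(1, 0))


if __name__ == "__main__":
    u = 0.4
    for q_new in ([0.3, -0.2], [1.5, 0.7], [-0.9, 2.0]):
        print(q_new, asymmetry(q_new, u), asymmetry(q_new, u, exact=False))
```

With the exact translation the asymmetry stays at finite-difference noise level, since the transported graph is the graph of ∇(F∘φ^{-1} + g) for the base map φ. With the non-exact translation p ↦ p + C q' (C antisymmetric) it equals C[0][1] - C[1][0] = 1.6, so the image is no longer a gradient graph.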