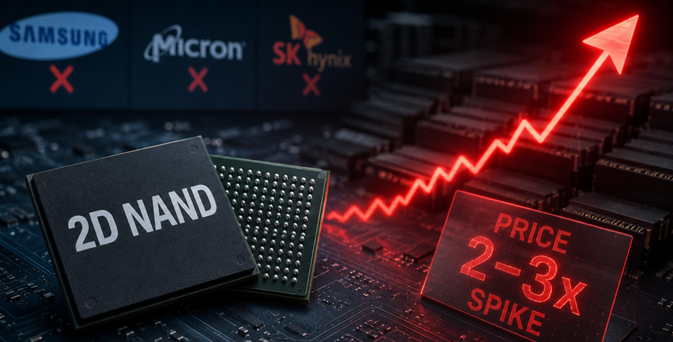

New: HBM Memory Is the Next GPU Constraint

HBM is sold out through 2026 at every supplier. Prices up 20%. Every AI GPU needs it. No substitute.

https://telegra.ph/AIs-Hidden-Tollbooth-Why-HBM-Memory-Is-the-Next-GPU-Constraint-05-15

AI's Hidden Tollbooth: Why HBM Memory Is the Next GPU Constraint

NVIDIA reports Q1 FY2027 earnings on May 20. The consensus is watching the Blackwell ramp and gross margins. But there is a structural constraint beneath both of those numbers that the market is not pricing in: HBM. High Bandwidth Memory is the single most constrained component in the AI supply chain. Every AI GPU (H100, H200, B200, B300) requires it. There is no substitute. And every supplier is sold out through 2026. This is not a repeat of the 2021 chip shortage. It is a structural reallocation of the…