A short making-of for my “True Beauty Is So Painful” piece (with “True Beauty Is So Painful” by Oomph! playing in the background), because “AI art = just pressing a button” is still a thing.

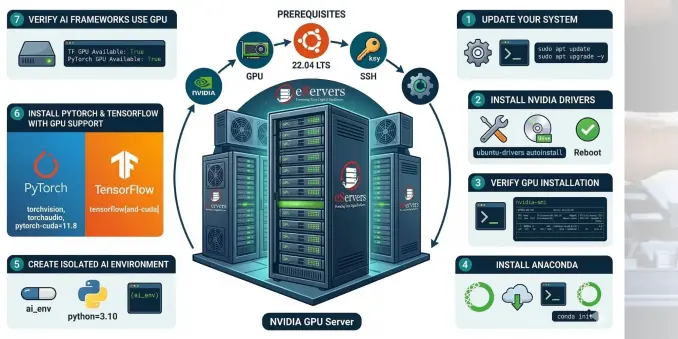

Here I briefly show my SDXL workflow in ComfyUI (briefly because of the 15 MB upload limit), from node structure to model choice to parameters.

In this setup the LoRAs are wired only to the positive prompt, because I wanted to fine-tune their weights there specifically, without affecting the negative prompt.
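For anyone who wants to rebuild that wiring, here is a rough sketch in ComfyUI's API (JSON) workflow format, written as a Python dict. It is one possible reading of the setup, not my exported graph: the file names, prompt texts and strengths are placeholders, and `clip_source` is just a small helper made up for this example. Note that the LoRA's MODEL output would still feed the sampler; only the text encoding of the negative prompt stays on the base CLIP.

```python
# Sketch of a ComfyUI API-format graph fragment (assumed wiring, placeholder names).
# Links are [source_node_id, output_index].
# CheckpointLoaderSimple outputs: 0 = MODEL, 1 = CLIP, 2 = VAE
# LoraLoader outputs:             0 = MODEL, 1 = CLIP
workflow = {
    "1": {
        "class_type": "CheckpointLoaderSimple",
        "inputs": {"ckpt_name": "sdxl_base.safetensors"},  # placeholder file name
    },
    "2": {
        "class_type": "LoraLoader",
        "inputs": {
            "model": ["1", 0],
            "clip": ["1", 1],
            "lora_name": "example_style.safetensors",  # placeholder file name
            "strength_model": 0.8,  # placeholder weights, tune to taste
            "strength_clip": 0.8,
        },
    },
    # Positive prompt: encoded with the LoRA-patched CLIP.
    "3": {
        "class_type": "CLIPTextEncode",
        "inputs": {"text": "positive prompt here", "clip": ["2", 1]},
    },
    # Negative prompt: encoded with the untouched checkpoint CLIP.
    "4": {
        "class_type": "CLIPTextEncode",
        "inputs": {"text": "negative prompt here", "clip": ["1", 1]},
    },
}


def clip_source(graph: dict, node_id: str) -> str:
    """Return the class_type of the node that feeds this node's 'clip' input."""
    source_id, _output_index = graph[node_id]["inputs"]["clip"]
    return graph[source_id]["class_type"]


print("positive CLIP comes from:", clip_source(workflow, "3"))
print("negative CLIP comes from:", clip_source(workflow, "4"))
```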

During rendering, I monitored in parallel:

- GPU load with radeontop: you can clearly see how on RDNA2 everything (matrix multiplications, convolutions, etc.) runs on the shaders

- Temperatures & power states, briefly shown with corectrl

Peak at 187 W, hotspot briefly at 97 °C

RDNA2 doing RDNA2 things…
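If you would rather log those numbers than watch them, the same kind of readings can usually be pulled from the kernel's hwmon interface. This is only a sketch, assuming the amdgpu driver exposes `power1_average` (in microwatts) and labelled `temp*_input` files (in millidegrees Celsius) under `/sys/class/hwmon`; file names can differ by kernel and card, and this is not what corectrl does internally.

```python
from pathlib import Path


def microwatts_to_watts(raw: str) -> float:
    """hwmon reports power in microwatts."""
    return int(raw) / 1_000_000


def millidegrees_to_celsius(raw: str) -> float:
    """hwmon reports temperatures in millidegrees Celsius."""
    return int(raw) / 1000


def find_amdgpu_hwmon(root: str = "/sys/class/hwmon"):
    """Return the first hwmon directory whose 'name' file says 'amdgpu', or None."""
    for candidate in sorted(Path(root).glob("hwmon*")):
        name_file = candidate / "name"
        if name_file.is_file() and name_file.read_text().strip() == "amdgpu":
            return candidate
    return None


def read_sensors(hwmon_dir: Path) -> dict:
    """Collect power and labelled temperatures (e.g. edge, junction, mem) if present."""
    readings = {}
    power_file = hwmon_dir / "power1_average"  # assumption: some setups use another name
    if power_file.is_file():
        readings["power_w"] = microwatts_to_watts(power_file.read_text())
    for label_file in sorted(hwmon_dir.glob("temp*_label")):
        label = label_file.read_text().strip()
        value_file = label_file.with_name(label_file.name.replace("_label", "_input"))
        if value_file.is_file():
            readings[f"temp_{label}_c"] = millidegrees_to_celsius(value_file.read_text())
    return readings


if __name__ == "__main__":
    gpu = find_amdgpu_hwmon()
    print(read_sensors(gpu) if gpu else "no amdgpu hwmon directory found")
```

On amdgpu, the "junction" label is the one that corresponds to the hotspot reading.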

Video workflow:

- recorded with OBS

- edited in Kdenlive

- transcoded with VAAPI (H.264)
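For the transcode step, a hardware H.264 encode via VAAPI can be sketched with ffmpeg roughly like this. The file names, the render node path and the `-qp` value are placeholders, and this is not necessarily the exact pipeline I used; it just shows the usual shape of such a command (upload frames to the GPU, then encode with `h264_vaapi`).

```python
import shlex


def vaapi_h264_command(src: str, dst: str,
                       device: str = "/dev/dri/renderD128",
                       qp: int = 24) -> list:
    """Build an ffmpeg argument list for a VAAPI H.264 encode (sketch)."""
    return [
        "ffmpeg",
        "-vaapi_device", device,          # render node of the GPU (placeholder path)
        "-i", src,
        "-vf", "format=nv12,hwupload",    # convert to NV12 and upload frames to the GPU
        "-c:v", "h264_vaapi",             # hardware H.264 encoder
        "-qp", str(qp),                   # constant-QP quality, lower means better
        dst,
    ]


# Print the command instead of running it, so it can be inspected first.
print(shlex.join(vaapi_h264_command("making-of.mkv", "making-of.mp4")))
```

To actually run it, pass the list to `subprocess.run(..., check=True)`.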

No cloud, just decisions, iteration and real hardware.

Everything runs on Linux + ComfyUI (FOSS), so anyone can set this up.

No GPU? No problem: you can also run it on PyTorch’s CPU backend, just much slower.
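A quick way to see which backend your PyTorch install would use is a check like the one below. It is a sketch with a made-up helper name (`pick_device`); ROCm builds of PyTorch report AMD GPUs through the `torch.cuda` API, and ComfyUI itself can be forced onto the CPU with its `--cpu` launch flag.

```python
def pick_device() -> str:
    """Return "cuda" if PyTorch sees a GPU (CUDA or ROCm build), else "cpu"."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed, so nothing can run on a GPU from here;
        # report "cpu" as the fallback instead of crashing.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


print("PyTorch would run on:", pick_device())
```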

#AIArt #ComfyUI #SDXL #stablediffusion #LoRA #FOSS #Linux #AMD #RDNA2 #GPUComputing #OpenSource #AIWorkflow #OBS #Kdenlive #VAAPI #DigitalArt #MakingOf #AIProcess #NoCloud