Eye contact isn't even limited to looking directly into the camera.

Eye contact is whenever there is at least one eye anywhere in the image. No matter where it is. No matter how small the eye is or how big the image is.

Ask autistic people, and they'll likely confirm this. They'll also likely confirm that it triggers them.

In fact, it even counts as eye contact when you, as a neurotypical person, can't see the eye at all because it's smaller than a pixel.

Imagine an image of 20 megapixels. Now imagine there's a person somewhere in the image, only four pixels high and about one pixel wide. This means the head is half a pixel high and a third of a pixel wide.

Even if the person is looking directly at the camera, each individual eye is only 1/15 of a pixel wide and maybe 1/30 of a pixel high. That's 1/450 of a pixel, or a bit over 0.2% of a pixel. That's about 1/9,000,000,000 of the whole image, or a bit over 0.000,000,01%. And that's if the person is looking directly at the camera.
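For anyone who wants to double-check the arithmetic, here's a small Python sketch. It only uses the figures from the example above, treated as exact: a 20-megapixel image (20,000,000 pixels) and an eye 1/15 of a pixel wide and 1/30 of a pixel high. Exact fractions are used so the 1/450 and 1/9,000,000,000 come out without rounding.

```python
from fractions import Fraction

# Figures from the example above, treated as exact for this sketch.
IMAGE_PIXELS = 20_000_000          # 20 megapixels
eye_width = Fraction(1, 15)        # eye width in pixels
eye_height = Fraction(1, 30)       # eye height in pixels

# Area of one eye, measured in pixels.
eye_area = eye_width * eye_height  # 1/450 of a pixel

# Share of a single pixel, as a percentage.
percent_of_pixel = float(eye_area * 100)

# Share of the whole image, as a fraction and as a percentage.
share_of_image = eye_area / IMAGE_PIXELS
percent_of_image = float(share_of_image * 100)

print(f"Eye area: {eye_area} of a pixel ({percent_of_pixel:.3f}%)")
print(f"Share of image: {share_of_image} ({percent_of_image:.2e}%)")
# Eye area: 1/450 of a pixel (0.222%)
# Share of image: 1/9000000000 (1.11e-08%)
```

The second line confirms "a bit over 0.000,000,01%", since 1.11e-08% is just above 1e-08%.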

Nonetheless, this may trigger some autistic people, even if the person isn't looking anywhere near the camera.

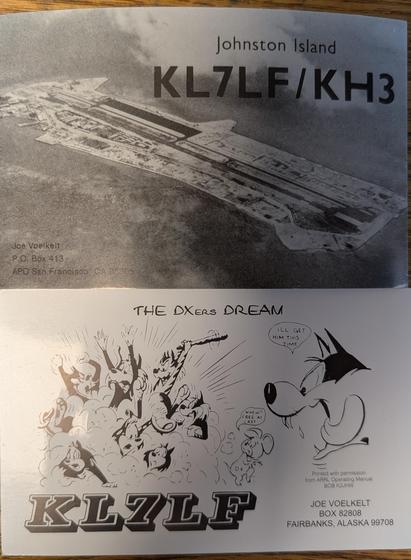

It doesn't even have to be a person. It may just as well be an animal or a fantasy creature or a robot or a sculpture or a stylised face or even only a single stylised eye.

I've actually had all this confirmed by @Yohan Yukiya Sese Cuneta 사요한🦣, who knows enough diagnosed autistic people to be sure.

So it doesn't matter how big or infinitely small the eye is. It doesn't matter where it's looking. If there's at least one eye in your image, it counts as eye contact.

If you, as the user who posts the image, know for certain that there is at least one eye in the image, you're obliged to

- have the image automatically blanked or blurred

- make sure that Mastodon will blank the image, too

- add the content warning "CW: eye contact" to your post

- add the hashtags #EyeContact and #CWEyeContact to your post, especially the former, which some people out there may have filtered

You're only excused from doing so if you honestly don't know that there is at least one eye in the image.

#Long #LongPost #CWLong #CWLongPost #FediMeta #FediverseMeta #CWFediMeta #CWFediverseMeta #CW #CWs #CWMeta #ContentWarning #ContentWarnings #ContentWarningMeta #Hashtag #Hashtags #HashtagMeta #CWHashtagMeta #EyeContactMeta #CWEyeContactMeta #Autism #Autistic #Neurodivergent #Neurodivergence #Inclusion #Inclusivity #A11y #Accessibility

🍵