🎙️ Join Federico’s Discord talk later today!

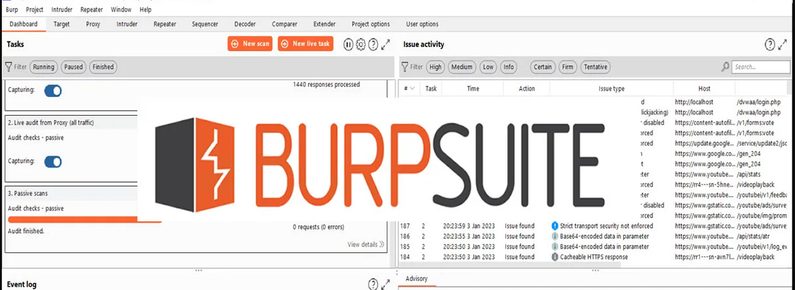

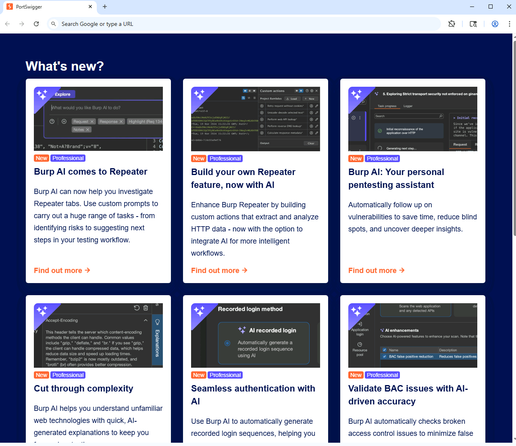

As part of #BurpExtensibilityMonth initiatives, our Research Lead and #BurpAmbassador @apps3c is joining #PortSwigger on Discord for “Restoring testability: Handling complex scenarios in Burp Suite with a custom extension”.

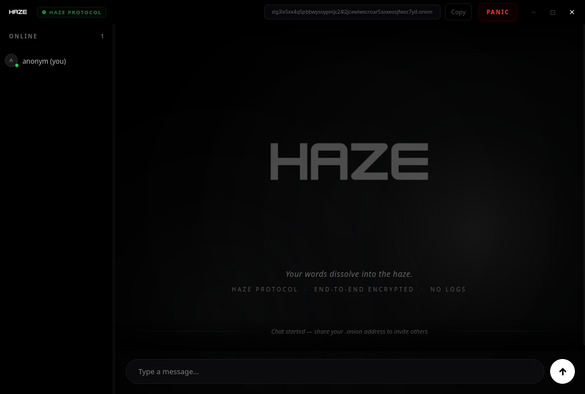

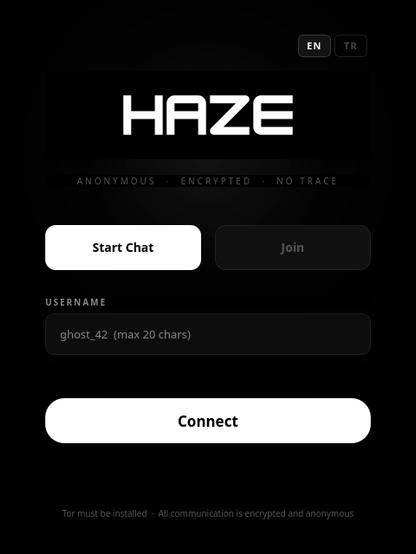

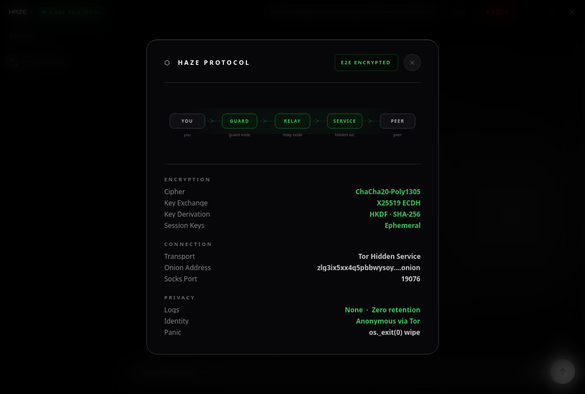

Most web and mobile backends and APIs can be assessed effectively with #BurpSuite out of the box. But testers sometimes hit scenarios where standard workflows become impractical, such as encrypted payloads, request signing, custom data formats, WAF controls, token handling, and other client-side protections.

In this talk, Federico will explore how custom Burp Suite extensions can integrate those mechanisms directly into your testing workflow, so you can keep using tools like Repeater, Intruder, Scanner, and more as if the underlying complexity were not there.

Expect a real-world-inspired scenario, practical design guidance, and plenty of inspiration for building your own extensions.

👉 Register your interest here!

https://discord.com/events/1159124119074381945/1499761261750128670