Autoregressive next token prediction and KV Cache in transformers

#HackerNews #autoregressive #transformers #kv-cache #machine-learning #deep-learning #AI

The Truth Lies Somewhere in the Middle (Of the Generated Tokens)

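This study explores the best way to combine the hidden states of the tokens generated by an autoregressive language model into a single representation. In particular, it finds that averaging (mean pooling) the hidden states of the generated tokens captures the meaning of the input better than using only the last token. Using the Qwen3-14B model, the authors measured alignment with visual embeddings on an image-text dataset using the CKA metric, and the mean-pooled embeddings tended to become progressively better aligned over the course of generation. This offers an important insight for interpreting and using the internal representations of LLMs.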
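To make the comparison concrete, here is a minimal pure-Python sketch of the two pooling choices and of linear CKA, one common CKA variant. The post does not say which CKA variant or which layer was used, so the function names and the linear-kernel choice are assumptions for illustration, not the authors' code.

```python
import math

def mean_pool(hidden_states):
    """Average per-token hidden states (a list of equal-length vectors)."""
    n = len(hidden_states)
    return [sum(col) / n for col in zip(*hidden_states)]

def last_token(hidden_states):
    """Use only the final token's hidden state as the representation."""
    return list(hidden_states[-1])

def _centered_gram(X):
    # Center each feature column, then form the Gram matrix Xc @ Xc.T.
    means = [sum(col) / len(X) for col in zip(*X)]
    Xc = [[v - m for v, m in zip(row, means)] for row in X]
    return [[sum(a * b for a, b in zip(r1, r2)) for r2 in Xc] for r1 in Xc]

def _frobenius_inner(A, B):
    return sum(a * b for ra, rb in zip(A, B) for a, b in zip(ra, rb))

def linear_cka(X, Y):
    """Linear CKA between two sets of embeddings for the same n inputs.

    X is n x d1 and Y is n x d2 (row i of each describes input i).
    Returns a similarity in [0, 1]; assumes neither set is constant.
    """
    K, L = _centered_gram(X), _centered_gram(Y)
    return _frobenius_inner(K, L) / math.sqrt(
        _frobenius_inner(K, K) * _frobenius_inner(L, L)
    )

# Toy usage: two generated tokens with 2-dimensional hidden states.
states = [[1.0, 2.0], [3.0, 4.0]]
pooled = mean_pool(states)   # [2.0, 3.0]
final = last_token(states)   # [3.0, 4.0]
```

In a setup like the one described, each row of `X` would be one input's pooled text embedding and the matching row of `Y` its visual embedding. Running `linear_cka` once with `mean_pool` rows and once with `last_token` rows gives the two alignment scores to compare.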

https://www.sophielwang.com/tokens

#autoregressive #hiddenstates #embedding #representation #llm

End-to-End Autoregressive Image Generation with 1D Semantic Tokenizer

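This paper proposes an end-to-end autoregressive image generation approach built on a 1D semantic tokenizer. Unlike the existing two-stage approach, which trains the tokenizer and the generative model separately, it jointly optimizes reconstruction and generation so that the tokenizer is supervised directly by generation results. It also explores using vision foundation models to improve 1D tokenizer performance. The proposed model achieves a state-of-the-art FID of 1.48 on ImageNet 256x256 image generation.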

https://arxiv.org/abs/2605.00503

#autoregressive #imagegeneration #tokenizer #visionfoundationmodel #computervision

Autoregressive image modeling relies on visual tokenizers to compress images into compact latent representations. We design an end-to-end training pipeline that jointly optimizes reconstruction and generation, enabling direct supervision from generation results to the tokenizer. This contrasts with prior two-stage approaches that train tokenizers and generative models separately. We further investigate leveraging vision foundation models to improve 1D tokenizers for autoregressive modeling. Our autoregressive generative model achieves strong empirical results, including a state-of-the-art FID score of 1.48 without guidance on ImageNet 256x256 generation.

Meituan LongCat (@Meituan_LongCat)

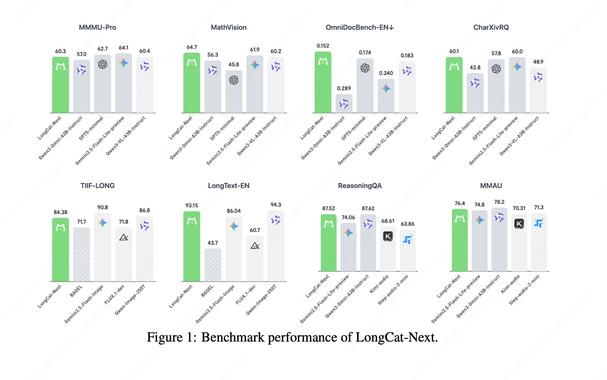

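A new discrete native autoregressive multimodal model called LongCat-Next has been announced. It combines language, vision, and audio into a single unified model, offering native multimodality and industrial-grade performance.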

🔥 Introducing LongCat-Next: A Discrete Native Autoregressive Multimodal Model. LongCat-Next integrates language, vision, and audio into a unified discrete autoregressive model, extending Next-Token Prediction to native multimodality and delivering industrial-strength performance.

apolinario (@multimodalart)

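This post announces the release of BitDance, a 14B-parameter autoregressive image generation model. According to the post, the model autoregresses over 'bits' rather than a codebook and runs fast for its 14B size. It also links to a Hugging Face Space where the model can be tried directly.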

Most LLMs, such as GPT, Claude, and Gemini, use an autoregressive model: they generate tokens one at a time, which causes latency and high cost. Diffusion language models start from a noisy answer and refine the entire sequence in a few parallel steps, cutting latency by 5-10× and lowering cost. Although they are hard to train and need new infrastructure, they are very promising for real-time applications (code autocomplete, in-product assistants). #LLM #AI #Diffusion #Autoregressive #AIVietnam #TríTuệNhânTạo

The efficiency of large language models (LLMs) is fundamentally limited by their sequential, token-by-token generation process. We argue that overcoming this bottleneck requires a new design axis for LLM scaling: increasing the semantic bandwidth of each generative step. To this end, we introduce Continuous Autoregressive Language Models (CALM), a paradigm shift from discrete next-token prediction to continuous next-vector prediction. CALM uses a high-fidelity autoencoder to compress a chunk of K tokens into a single continuous vector, from which the original tokens can be reconstructed with over 99.9% accuracy. This allows us to model language as a sequence of continuous vectors instead of discrete tokens, which reduces the number of generative steps by a factor of K. The paradigm shift necessitates a new modeling toolkit; therefore, we develop a comprehensive likelihood-free framework that enables robust training, evaluation, and controllable sampling in the continuous domain. Experiments show that CALM significantly improves the performance-compute trade-off, achieving the performance of strong discrete baselines at a significantly lower computational cost. More importantly, these findings establish next-vector prediction as a powerful and scalable pathway towards ultra-efficient language models. Code: https://github.com/shaochenze/calm. Project: https://shaochenze.github.io/blog/2025/CALM.
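The "factor of K" claim is simple arithmetic over chunks, and a small sketch may make it easier to see. The helper names and the chunk size of 4 below are illustrative assumptions, not values or code from the abstract, and how CALM handles a final partial chunk is not stated there.

```python
import math

def generative_steps(num_tokens, chunk_size):
    """Number of autoregressive steps if each step emits `chunk_size` tokens."""
    return math.ceil(num_tokens / chunk_size)

def chunk_tokens(tokens, chunk_size):
    """Group a token sequence into consecutive chunks of up to `chunk_size`.

    In a CALM-style setup each chunk would be compressed into one
    continuous vector by the autoencoder (not modeled here).
    """
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]

# Token-by-token generation is the special case chunk_size = 1.
baseline = generative_steps(1024, 1)   # 1024 steps
chunked = generative_steps(1024, 4)    # 256 steps, a 4x reduction
```

The sketch only counts steps. The abstract's efficiency claim also depends on the cost per step and on the autoencoder, neither of which is captured here.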

Continuous Autoregressive Language Models

https://arxiv.org/abs/2510.27688

#HackerNews #Continuous #Autoregressive #Language #Models #NaturalLanguageProcessing #AI #Research #MachineLearning #TransformerModels

Diffusion Beats Autoregressive in Data-Constrained Settings

https://blog.ml.cmu.edu/2025/09/22/diffusion-beats-autoregressive-in-data-constrained-settings/

#HackerNews #Diffusion #Autoregressive #MachineLearning #DataScience #AIResearch

Check out our new blog post on "Diffusion beats Autoregressive in Data-Constrained settings". The era of infinite internet data is ending. This research paper asks: What is the right generative modeling objective when data—not compute—is the bottleneck?

WorldVLA: Towards Autoregressive Action World Model

https://arxiv.org/abs/2506.21539

#HackerNews #WorldVLA #Autoregressive #Action #World #Model #AI #Research #Machine #Learning

We present WorldVLA, an autoregressive action world model that unifies action and image understanding and generation. WorldVLA integrates a Vision-Language-Action (VLA) model and a world model in a single framework. The world model predicts future images by leveraging both action and image understanding, with the purpose of learning the underlying physics of the environment to improve action generation. Meanwhile, the action model generates subsequent actions based on image observations, which aids visual understanding and in turn helps the visual generation of the world model. We demonstrate that WorldVLA outperforms standalone action and world models, highlighting the mutual enhancement between the world model and the action model. In addition, we find that the performance of the action model deteriorates when generating sequences of actions in an autoregressive manner. This phenomenon can be attributed to the model's limited generalization capability for action prediction, leading to the propagation of errors from earlier actions to subsequent ones. To address this issue, we propose an attention mask strategy that selectively masks prior actions during the generation of the current action, which shows significant performance improvement in the action chunk generation task.
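One plausible reading of the attention mask strategy is a causal mask in which tokens of the current action cannot attend to tokens of earlier actions, while still seeing text and image context. The sketch below follows that reading. The `segments` encoding and the function name are hypothetical, and the paper's actual mask may differ in detail.

```python
def action_masked_causal_mask(segments):
    """Build a boolean attention mask (True = query may attend to key).

    segments[i] is None for a non-action token (text or image), or an
    integer action index for a token belonging to that action.
    Assumed rule: attention is causal, and a query inside an action may
    not attend to tokens of any other (earlier) action.
    """
    n = len(segments)
    mask = [[False] * n for _ in range(n)]
    for q in range(n):
        for k in range(q + 1):  # causal: only keys at or before the query
            key_is_other_action = (
                segments[k] is not None and segments[k] != segments[q]
            )
            if segments[q] is not None and key_is_other_action:
                continue  # block earlier actions for action queries
            mask[q][k] = True
    return mask

# Two context tokens followed by two actions of two tokens each.
segments = [None, None, 0, 0, 1, 1]
mask = action_masked_causal_mask(segments)
# mask[4][2] is False: action 1 cannot see action 0.
# mask[4][0] is True: action 1 still sees the context.
```

Under this rule each action in a chunk is predicted from the observations alone, so an error in an earlier action cannot propagate to later ones through attention.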