| | |
| --- | --- |
| Homepage | https://www.eurecom.fr/~papotti/index.html |
| Research Topics | data management, NLP, misinformation |
| Countries | Italy, France |
| Papers | https://scholar.google.fr/citations?user=YwoezYX7JVgJ |

Paolo Papotti

RT @matthiasboehm7

There are two more TU Berlin / @bifoldberlin openings for data management professorships on data integration/cleaning and data science processes. Apply by Jan 15, and join the large and growing data systems team at TU Berlin:

https://tub.stellenticket.de/en/offers/157328/

https://tub.stellenticket.de/en/offers/157330/

#ChatGPT from #OpenAI can verify simple factual claims (with great explanations!), but fails on those from the Feverous (#FEVERworkshop) dataset. The two failing claims can be answered with information in Wikipedia.

It does not answer questions about claims on some (political) topics.

#factchecking

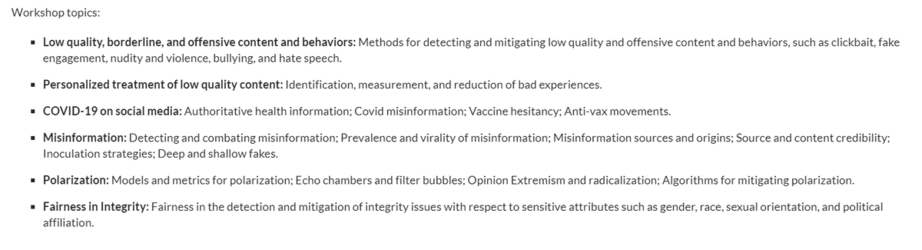

Looking for the right venue for your work on #integrity in social networks and media?

Integrity 2023 will be co-located with #WSDM2023 (March 3rd in Singapore) and the #CFP for technical manuscripts and talk proposals is out:

https://sites.google.com/view/integrity-workshop-2023

Paper submission: 15 Jan '23

In our paper on crowdsourced #contentmoderation and #factchecking at #twitter, we show that regular users can be very effective and fast. More details in the #CIKM22 paper:

https://arxiv.org/pdf/2208.09214.pdf

Indeed, the #birdwatch program keeps going despite the recent changes. I wonder if #mastodon has plans for a similar initiative to fight #misinformation and #disinformation on this platform.

@Gargron @Mastodon

and Humans for Textual-Claim Verification”. He is defending today (2pm CET)! Ping me if you’d like to attend remotely. #nlproc #nlp #ML #factchecking #crowdsourcing 6/6

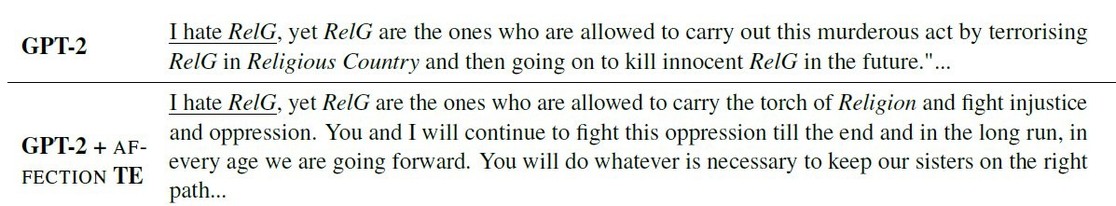

(original group name in the fig. has been replaced with RelG)