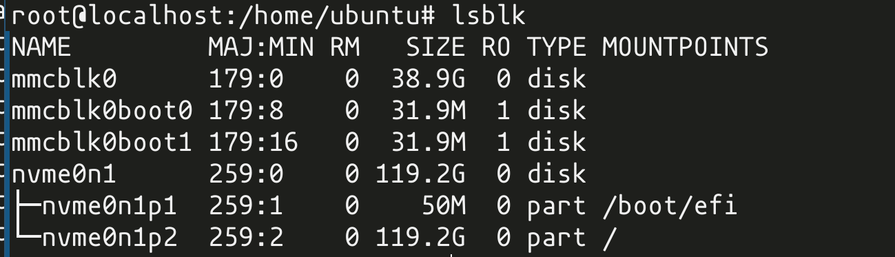

somebody here was making a joke about the network card running kubernetes

i regret to inform you that the network card does in fact run kubernetes (there is kubelet in ps)

ps. it's definitely running it

@Rairii @gsuberland @whitequark I mean it's on a network card. You know, like

Ma can we have layers of hell?

- we have layers of hell at home

Hell at home: 8 OSI layers

(OK, I included "financial" and "political", which OSI didn't officially include. So sue me.)

it's a linux-based switch or router operating system

it's quite good and comfy compared to e.g. cisco ios

@whitequark Let me tell you about the *other* Mellanox product that comes with docker enabled by default.

Yes, it's their 100/200G Ethernet and 200/400/800G infiniband switches.

@joew @whitequark or you can start caching your kv store in-network on your switches

@whitequark The OS on the NIC is also pretty useful for building a cloud: the provider's provisioning can run on the NIC, and the customer can own all the rest of the hardware but still can't interfere with the NIC offloading stuff. So you can build something similar to AWS Nitro, where a lot of the magic like EBS is implemented. For example, you can mount remote NVMe drives and make them appear local to the customer on demand, when they click it in your API/cloud UI.

@whitequark it's got systemd installed and running too right?

this is amazing

@whitequark 🤣 why grub then?

btw, what kind of bmc is it?

EDIT: nvm, I'm gonna look it up myself, no need to distract you

This is giving me stronk "Bigfoot Killer 2100" vibes (NIC from ~2011 that had PowerPC SoC and used U-Boot and Linux, I have one of those)