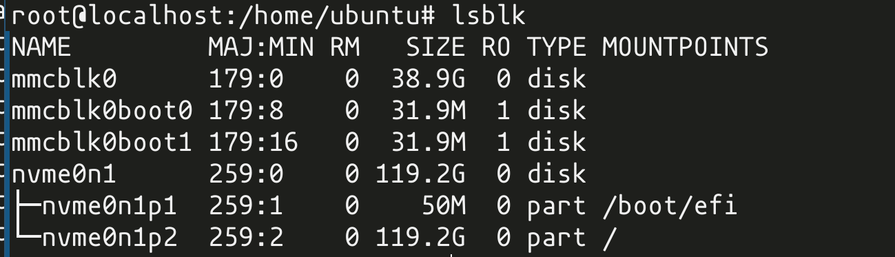

the network card i'm poking at, which has two different CPU clusters with Linux running on it, apparently has eMMC and NVMe drives onboard

also it has docker^W containerd installed by default

the operating system is booted by grub??

somebody here was making a joke about the network card running kubernetes

i regret to inform you that the network card does in fact run kubernetes (there is kubelet in ps)

@whitequark at first I was surprised, then remembered ISPs/carriers. Due to how modern networking systems work (think 5G), they run a lot of service stuff in containers, and sticking it on the NIC probably gives faster network connectivity by bypassing PCIe-to-CPU translations.

@lethedata the bf-3 is mostly for AI stuff as far as i know. also like. the CPU on this thing is connected to the NIC (the actual NIC part of NIC) over... maybe PCIe, maybe AMBA? not sure. some sort of bus. but you're definitely going to still have NIC to CPU translations, is my point

@whitequark I'd have to look more into that hardware (I was thinking it was a Broadcom BCM95750X or the like) but my hunch is it's hardware offloading the entire network stack to NIC PCIe cards that can fully act as nodes of the cluster. Pin those network service pods to those PCIe cards and you offload the entire network, speeding it up.

@lethedata @whitequark I was going to joke that kube was overkill and overhead for a dedicated packet-pushing device, some modern dev FOMO shit that makes everything more complicated and slower than it needs to be (for ~SCALABILITY~) but that makes sense, the more you can push down into the ASIC on the NIC the less CPU you have to burn, freeing it for workload.