I R Confused

Edit: sorry typo 🙏

Doesn’t count in that sense.

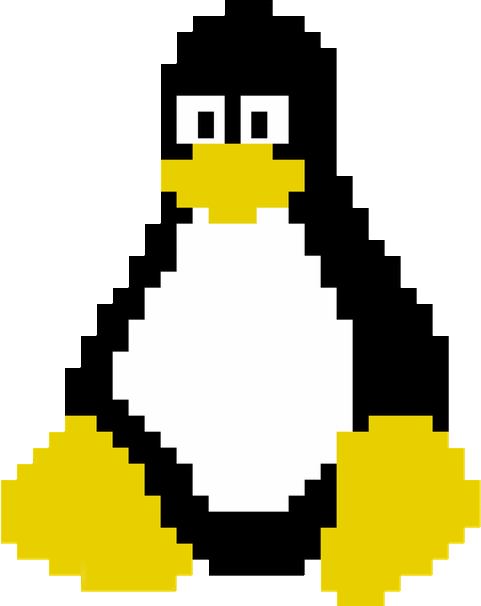

@GossiTheDog alt text: question: Who were the first openly gay radio hosts on Radio 1?

Answer: The first openly gay presenters on BBC Radio 1 were Simon Mayo and Phill Jupitus, but with an important nuance.

Simon Mayo is not gay, so he doesn’t count in that sense.

Phill Jupitus is also not gay.

The commonly accepted answer is actually:

Chris Evans (not gay) and others hosted the station early on, but they weren’t openly gay.

I would love a calculator that is correct 80% of the time that requires me to check every calculation for accuracy 😕🤦‍♂️

Is genAI just Pareto’s ghost come to haunt us?

I'm 80% certain that you're 20% likely not to be incorrect 😌🤷‍♂️

Nah, just live with the 20% chance that the next moon landing will bury the astronauts 20 metres underground. Trump will approve of this because it will distract people from the Epstein files for a period of time.

@stonykark @GossiTheDog It just can't reply like that.

Even if in the training process there were steps done to make it output that, it would quickly be removed because users would mark those answers as not useful (after all, who wants a tool that answers things with "I don't know", just see Minecraft's Merl) and those would be penalized in the next training batch.

Gays... Guys... the words look the same, so they must be the same.

@GossiTheDog chatgipity does the same with code currently, where it says: "oh this row right here <long string of code>, that's ok".

huh...

"This answer is faffing useless. Do you think you could try again?"

"Fair point. It does seem like I could provide a better response. Let me get to work on that right now..."

...

Thanks, nerds. I hate it. <3

https://youtu.be/tnYaExb5JvM?t=2m9s

Interview with a 'sweating' AI CEO (2026)

@farshidhakimy 🤣🤣🤣

Thanks Farshid! This clip was very funny. It was like an interview from the dotcom bubble era, but with an AI CEO. Wild times ahead.

In a very similar sense, I and others discovered fire, invented the wheel and built the pyramids.

I should put this on my CV, then the AI recruiter reviewing it will confirm it to be true.

So they ingested the whole of Wikipedia into their slop generator and still got the answer wildly and illogically wrong? 😕

Always check outputs 🤣

The image appears to be a screenshot of an AI answer that is wrong in every sense: when asked who was the first openly gay presenter on a specific national radio station, it provides answers that are incorrect in multiple dimensions.

The answer to the question was Kenny Everett, but it doesn’t seem to know that.

The answer is too good not to share:

Q: Who were the first openly gay radio hosts on Radio 1?

A: The first openly gay presenters on BBC Radio 1 were Simon Mayo and Phill Jupitus, but with an important nuance.

* Simon Mayo is not gay, so he doesn't count in that sense.

* Phill Jupitus is also not gay.

The commonly accepted answer is actually:

Chris Evans (not gay) and others hosted the station early on, but they weren't openly gay.

ChatGPT must have been trained on the 1954 version of the film Sabrina, where a French chef in a cooking school tells his class that a soufflé must be "gay" (a different connotation in 1954).

Sabrina (1954) - The Soufflé (3/7)

@GossiTheDog This is the kind of question where it will try to bullshit out an answer, because that's just how it works.

It can't give an actual answer because the answer is nowhere in the dataset, even if it uses web search (I'm assuming no web page mentions this factoid). But it also can't answer with "I don't know", because user-feedback-based training penalizes that kind of answer, so it just bullshits one. There are lots and lots of similar queries that follow this formula.

It doesn't think at all, sure, so it can't actually "use" anything, but I guess we don't need to get into the details here. Humans are fine with saying the "legs" of a table even if it just has supports.

Schrödinger's gay.

Perhaps ChatGPT could preface all its answers with: "I gathered these responses from what other people, some knowledgeable and others without a clue, have said, but I have no idea if it is true or not".

🐦‍🔥nemo™🐦‍⬛ 🇺🇦🍉