@loucyx @jafo @GossiTheDog it's also not even correct, so what you've managed to get there is a different wrong answer.

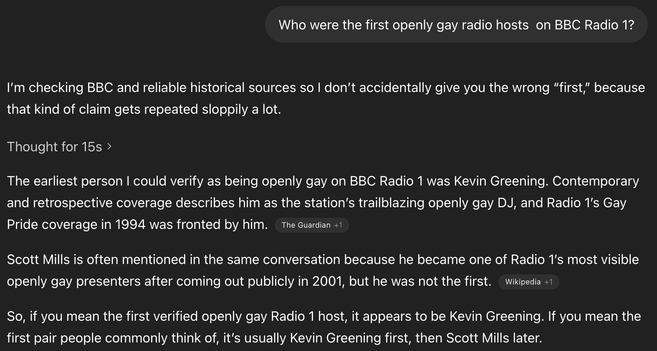

If you think 'confidently incorrect' is an improvement over 'obvious gibberish', then yeah, I suppose this is preferable, but it doesn't get you any closer to the truth.

(personally I think 'obviously wrong' is preferable, because then at least you know to ignore it.)

@jafo @loucyx @GossiTheDog but your mileage should not vary. that's the point.

getting a different answer each time is what makes these tools not fit for purpose. if they return the right answer some of the time but you never know which times, what's the point in them?

@benjamineskola @jafo @GossiTheDog 100% this! If they were always right or always wrong it would be one thing, but the only constant is that they are always confident about their answer (whether it’s right or wrong), which is what makes them dangerously unreliable.

And this isn’t even getting into the whole detrimental effect they have on cognitive analysis and reasoning for LLM consumers.