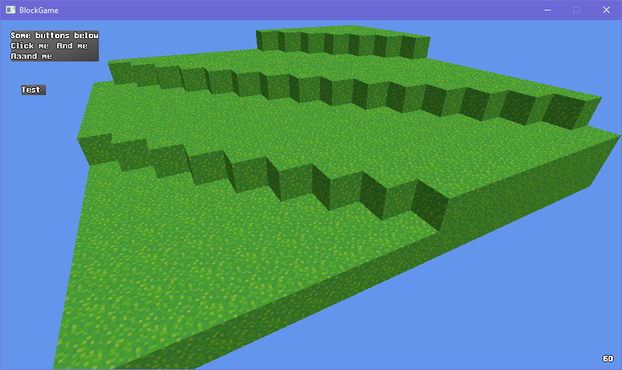

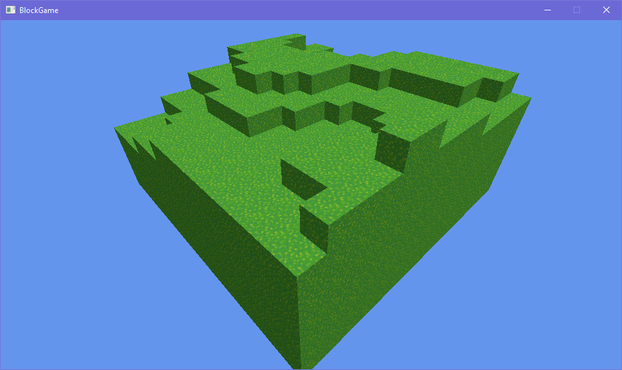

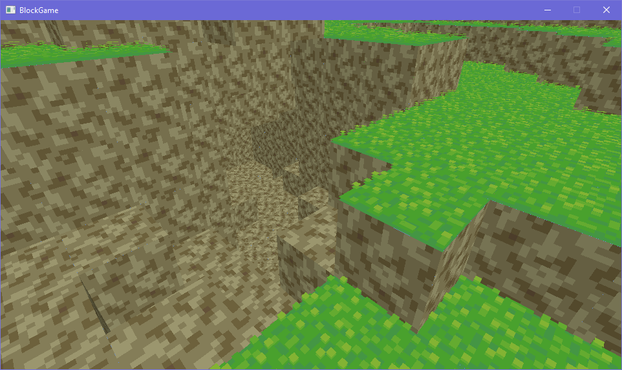

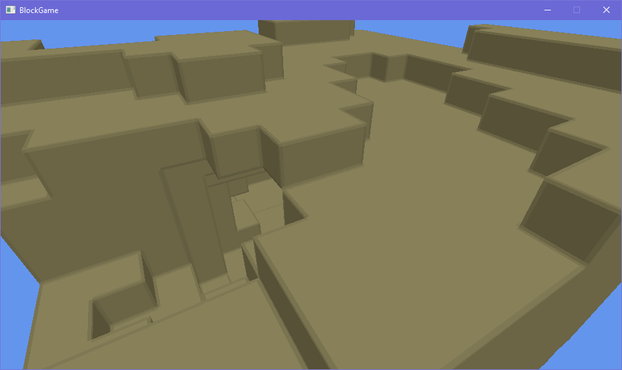

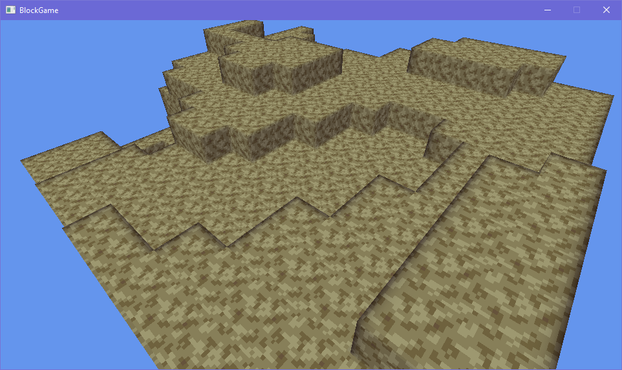

ok one more before i head to bed just to show that it can do more interesting things than just large rectangles made up of smaller cubes

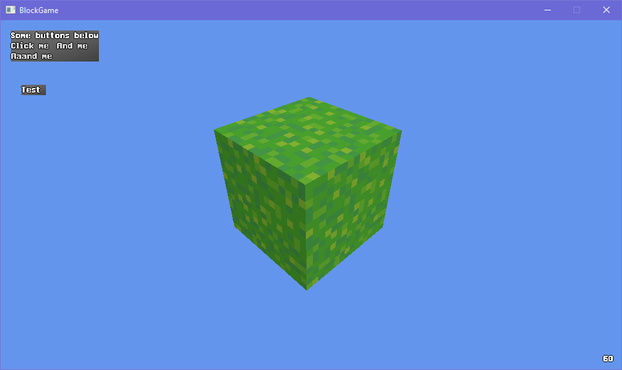

okay so this may seem a little unorthodox but bear with me here

what if

shell texturing in block game

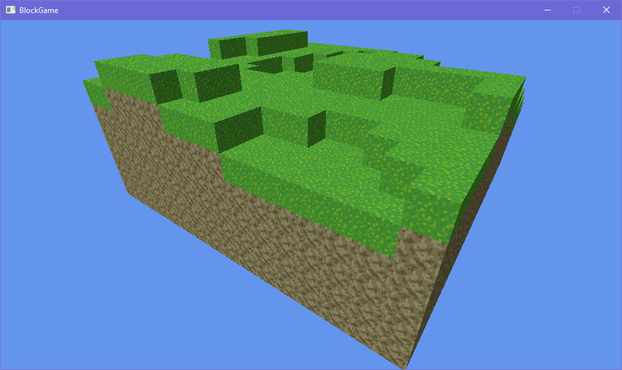

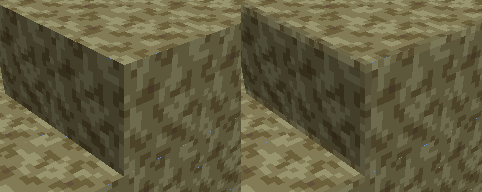

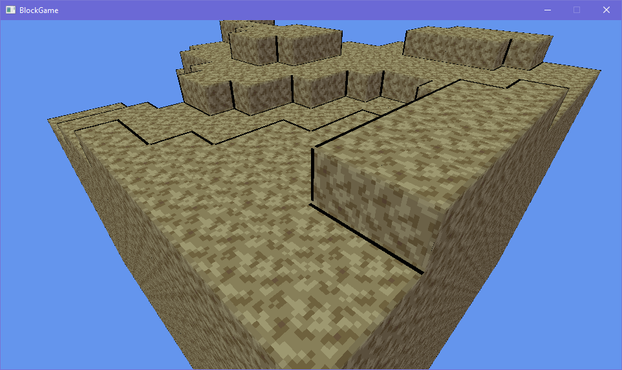

after endless futzing about and tweaking i think i have something that may just be workable for this faux bevel effect i want

(note that there's a "bevel" between adjacent blocks cause i haven't made the effect dependent on the block's neighbors yet)

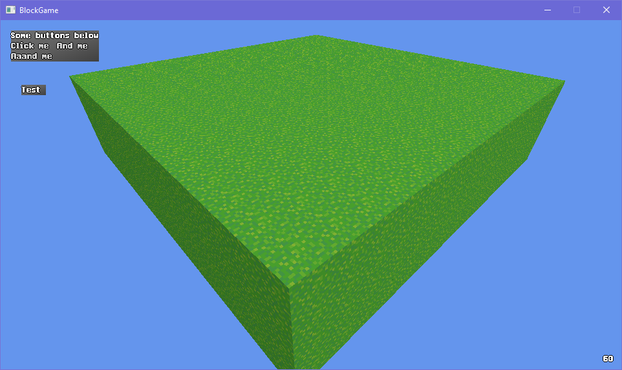

I dunno I'm just not feeling the actual lighting model. It's not like Minecraft exactly has realistic lighting anyway: blocks are lit from two opposite sides!

But the problem with baked lighting is if you have non symmetrical blocks you have to make 4 versions of each face to get all the lighting. Not that that's super onerous

Also if I made something like a lectern that would be a lot of work to do manually for each orientation

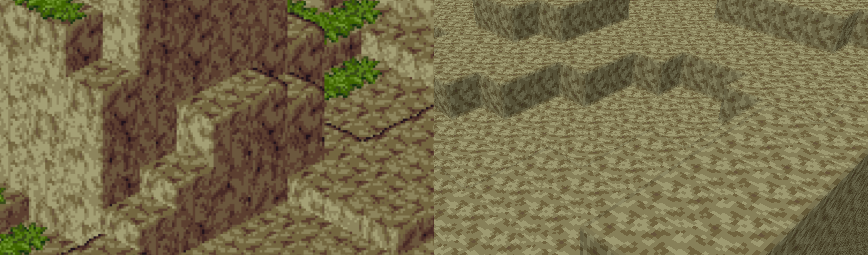

ok i figured out a reasonable way to fudge the normals so i won't have to manually bake lighting. basically what i do is set the normals of the edges so that they point towards where the adjacent faces are pointing (90 degree bend) and then i lerp from the face's lighting factor to the neighbor's lighting factor
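(a rough sketch of the lerp part, in python rather than shader code. the per-face light factors, the `bevel_light` name and the `t` parameter are all made up for illustration; the thread doesn't give the real values. `t` is assumed to be 0 on the flat part of the face and 1 right at the edge, where the fudged normal points the same way as the neighboring face)

```python
# Hypothetical per-face light factors, not the engine's actual numbers.
FACE_LIGHT = {
    "top": 1.0,
    "north": 0.8,
    "south": 0.8,
    "east": 0.6,
    "west": 0.6,
    "bottom": 0.5,
}


def lerp(a, b, t):
    """Linear interpolation from a (t = 0) to b (t = 1)."""
    return a + (b - a) * t


def bevel_light(face, neighbor, t):
    """Light factor on the bevel between `face` and `neighbor`.

    t = 0 means the flat part of the face, t = 1 means the very edge,
    where the fudged normal matches the neighboring face's direction.
    """
    t = max(0.0, min(1.0, t))  # keep t inside the bevel
    return lerp(FACE_LIGHT[face], FACE_LIGHT[neighbor], t)


if __name__ == "__main__":
    # Walk across the bevel from the top face towards the east face.
    for step in range(5):
        t = step / 4
        print(f"t={t:.2f} light={bevel_light('top', 'east', t):.2f}")
```

since the result only ever blends between two per-face factors, it can never be darker or brighter than either face, which is presumably why it avoids the artifacts that real normals would give.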

that gives me the result on the left. i tried just having regular, actual normals, but it creates artifacts like on the right (it's accurate that it shades it like that but it looks bad)

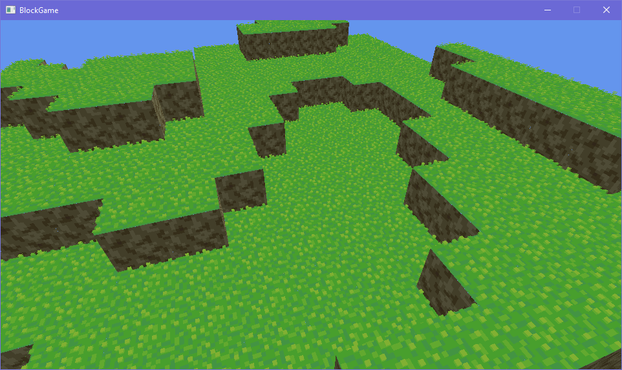

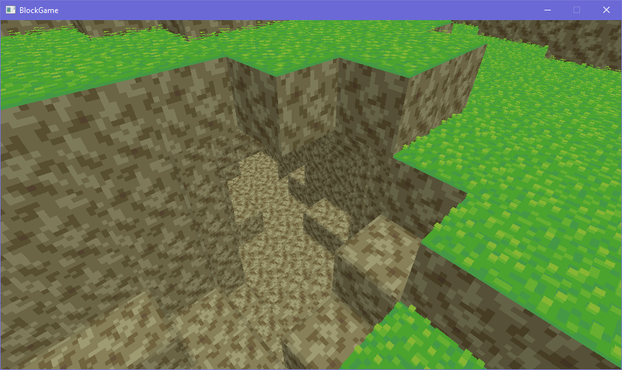

i've brought back the grass, which is actually an obj model

see i made an engine before and appropriated it for this project. it could load obj models (with normal maps) so at first the blocks in my world were actually loaded from obj models

that's why i had to redo a buncha stuff to add the bevelling, since i had to generate the faces myself

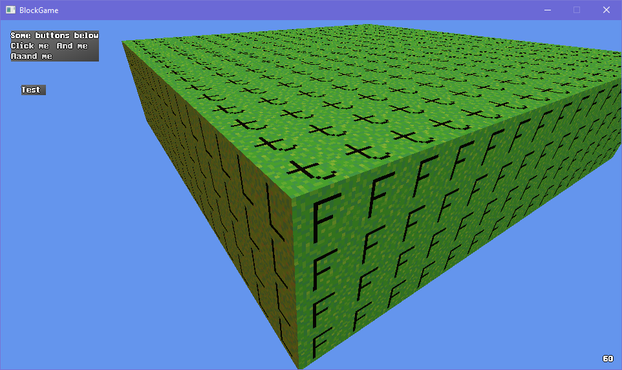

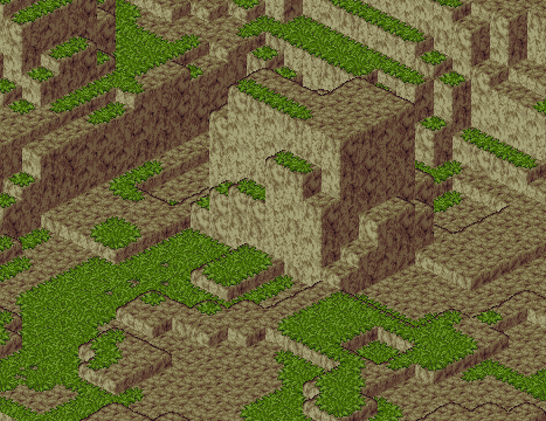

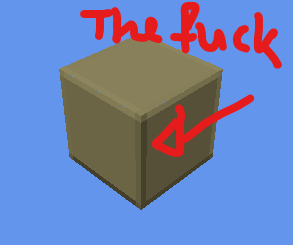

had a silly idea for block outlines using the bevel system. i take the dot product of the vector from the camera to the world position with the normal to determine if the bevel normal is pointing away from the camera, then add an outline based on that. there's some outlines where they don't belong but i can easily get rid of those
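(a minimal sketch of that dot product test, in python instead of the fragment shader. `is_outline` and the plain `> 0` threshold are assumptions for illustration; the real shader is 19 lines and clearly does more than this)

```python
def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))


def is_outline(camera_pos, world_pos, bevel_normal):
    """True if the bevel normal points away from the camera.

    The view vector runs from the camera to the shaded point, so a
    positive dot product means the normal points roughly the same way
    we are looking, i.e. away from the viewer.
    """
    view = tuple(w - c for w, c in zip(world_pos, camera_pos))
    return dot(view, bevel_normal) > 0.0  # threshold of 0 is an assumption


if __name__ == "__main__":
    camera = (0.0, 0.0, 5.0)
    point = (0.0, 0.0, 0.0)
    print(is_outline(camera, point, (0.0, 0.0, 1.0)))   # normal faces the camera
    print(is_outline(camera, point, (0.0, 0.0, -1.0)))  # normal faces away
```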

... i don't hate it? 🤔

lol the outlining has more than doubled the code in my fragment shader

texturing, lighting, and bevelling: 12 lines of code

just doing outlines: 19 lines of code

On the other hand I guess it would be fair to say these graphics are looking a little...... muddy

Eh? Eh? Eh? :D

i'm commander shepard and this is my favorite spot on the citadel

(seriously i cannot get enough of looking at this particular subsection of a screenshot i took of the outlines in my voxel engine)

debating what i wanna do with grass in my engine. atm i have a block shader, and a model shader. i feel like i could efficiently implement shell textured grass by making a shell texturing shader, but i also don't want to balloon the number of different shaders i need to render all my terrain 🤔
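(for anyone who hasn't run into shell texturing: a toy sketch of the general technique in python, not this engine's shader. the idea is to stack several flat layers above the surface and, in each layer, only draw the texels whose grass height reaches that layer. `shell_visible`, `build_shells` and the tiny height map are hypothetical)

```python
def shell_visible(height, shell_index, shell_count):
    """Whether a texel with grass height in [0, 1] is drawn in a given shell.

    Shell 0 sits on the surface and higher shells sit further above it.
    A texel is kept only if its blade is taller than the shell's height.
    """
    shell_height = shell_index / shell_count
    return height > shell_height


def build_shells(height_map, shell_count):
    """One boolean mask per shell, bottom to top."""
    return [
        [[shell_visible(h, i, shell_count) for h in row] for row in height_map]
        for i in range(shell_count)
    ]


if __name__ == "__main__":
    # Toy 2x2 grass height map: 0.0 is bare, 1.0 is the tallest blade.
    heights = [[0.0, 0.3], [0.6, 1.0]]
    for i, mask in enumerate(build_shells(heights, 4)):
        print(i, mask)
```

in a real renderer that visibility test would be an alpha discard per fragment, which is why it wants its own shader (or its own branch in an existing one).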

i could just use the model shader, but it becomes a pain in the ass to implement shell textured terrain then

i could try and combine it with the block shader but they both do really different things

hmm if i want to add bits to the shell texturing so it doesn't look bad viewed side-on i kinda have to go the model route

though i figured out i can get away with only 6 variations to get all the edge transitions 🤔 that could be doable if a bit annoying

i'm overthinking the grass thing so i've made the textures required to make the shells for a 16-tile transition and i'll just generate the model for each of those 16 and implement them in the engine and move on so i don't wind up blocked by choice paralysis

if i want i can always just change it later
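(a sketch of how a 16-tile transition is commonly indexed, in python. this assumes each tile is picked by which of the 4 side neighbors have a grass edge, packed into a 4-bit mask; the thread doesn't say how the engine actually indexes them, and `edge_mask`, `rotate` and `canonical` are made-up names. under that assumption, treating rotated tiles as the same shape leaves exactly 6 distinct ones, which may or may not be the same 6 variations mentioned earlier)

```python
def edge_mask(north, east, south, west):
    """Pack four neighbor flags into a tile index from 0 to 15."""
    return (int(north) << 0) | (int(east) << 1) | (int(south) << 2) | (int(west) << 3)


def rotate(mask):
    """Rotate the tile 90 degrees: north moves to east, east to south, etc."""
    return ((mask << 1) | (mask >> 3)) & 0b1111


def canonical(mask):
    """Smallest mask among the four rotations, used as the shape's id."""
    best = mask
    for _ in range(3):
        mask = rotate(mask)
        best = min(best, mask)
    return best


if __name__ == "__main__":
    shapes = sorted({canonical(m) for m in range(16)})
    print(len(shapes), [format(s, "04b") for s in shapes])
```

so generating all 16 models up front trades a bit of memory for not having to rotate anything at draw time.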

@eniko Maybe you could inset or extrude each texel by height map in a geometry shader? Then you could also dial it back over distance.

Caveat:

I dunno if this is possible, still haven't gotten around to ever writing a geometry shader.

Also overlapping insets and extrusions would be a problem.

@eniko @ruby0x1 @Farbs Because they can change geometry type and count on the fly, GPUs have to do a ton of silicon gymnastics to support them, and performance can differ a ton on different hardware.

Afaik the modern advice is to either somehow fit your needs to the standard pipeline (vertex+fragment), or make a specialized compute shader; in both cases it would usually be faster than a geometry shader.

@lisyarus @eniko @Farbs yea there's an older post here that had some of it http://www.joshbarczak.com/blog/?p=667

but modern platforms don't even all support geometry shaders (notably metal), they're a relic of a weird time and typically avoided. with mesh shaders being the modern replacement, and compute being able to do it (with indirect rendering) you wouldn't reach for geo shaders

@eniko Having 3 shaders still doesn't sound like a lot tbh? Especially if you know you want a specific effect and it doesn't fit well within existing shaders.

Maybe you'll find more usages for this shader later as well!

@eniko Render it out from a bunch of different angles projected back into a flat texture and then just snap between them 😈

Or...

Draw a specific, unique value into the alpha channel for grass so a post effect can find it later and do something entirely bizarre involving adjacent-ish pixels.

@eniko it's very voxely.

(Seriously: I totally get satisfaction with your own work)

@eniko Oh wait I hadn't seen the rest of the thread

Block Game by Eniko is good too!

I love the outlines, makes the terrain visually clear. That's very nice.

Why Not Zoidberg? 🦑
