This profile of me in *The New Yorker* came out really well, if I do say so myself:

Almost 20 years ago I worked at a company doing medical coding (billing) using an early version of AI.

We automated what had been a manual process and saved hospitals a lot of money.

We had both an "assistant" product and a fully automated product.

It was well known that we made mistakes. Humans did too: transcription mistakes, judgment mistakes. It happened.

We didn't have to be more accurate than humans; we just had to save the hospital more than the difference.

@cstross @tolortslubor @pluralistic

I've seen less of this clip than I should have

RoboCop: Director's Cut | REMASTERED - ED-209 Malfunction Scene (1080p)

crappy ai will not only replace labor, it will replace capable ai

a capable self-driver costs billions and decades, and the ip is closely guarded

scrappy upstarts will tweak free shit from github and call it good enough

@pluralistic @ares This is of course an argument for strong state regulators with venom glands and fangs, never mind teeth, to stop this sort of future from coming about.

Unfettered capitalism will destroy the human species, never mind the planet.

"Computer says 'no'" has been the rule of some companies for years. This just extends the problem.

@gsoc @pluralistic yeah but I don't think it's a problem with AI itself, I think it's a problem with people using it in areas where it just shouldn't be used yet

But I don't want to bash the technology and the development of it because people are doing dumb things with it

what if animal and human intelligence is also just a "statistics-guided algorithm"

@pluralistic

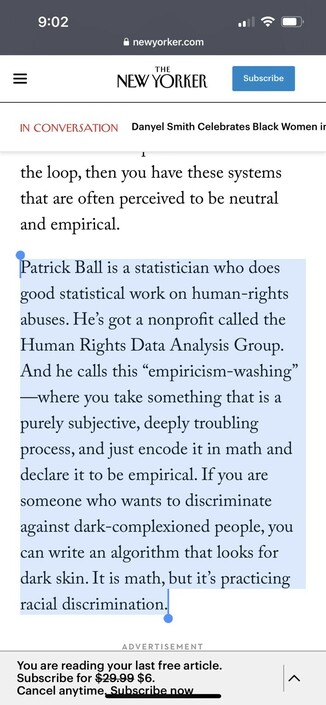

This is a fantastic interview.

"This is why merger scrutiny is such a big deal, because these companies are not built by super geniuses who use their access to the capital markets to build these impregnable businesses which no one else can assail. They are regular, venal mediocrities who use their access to the capital markets to buy everyone who might threaten them. If there’s merger scrutiny, that just stops happening."

'Venal mediocrities' is going into my autocomplete file.

@kims @pluralistic Oof my non-native comprehension took `venal` as a typo for `venial`.

Important difference learned https://grammarist.com/usage/venal-vs-venial/#:~:text=Venal%20comes%20into%20the%20English,transgression%20that%20is%20easily%20forgiven. today

Re the screenshot about Google:

Linspire CNR (Click-n-Run) & Xandros Linux had webstores for software long before Google Play and the Apple store. Never been able to see how the intellectual property worked out.

@pluralistic @cainmark

I believe we should differentiate between a company acquiring a competitor to silence it and one acquiring a business that brings a new product line to its portfolio.

Google bought Android and invested a huge amount of money over the years to make it a worthwhile iOS competitor. Without Google's clout I doubt very much Android would have amounted to much. The same could be said of YouTube.

The points you brought up in the interview are a multidisciplinary consideration of how human/AI interactions might be/come in a universe with better settings for the longevity of a civilization livable for the Many rather than the Very Few. The categories of AI problems (intrinsic vs. user-linked) are illustrated in the recent episode at the ISEC building of Northeastern U. (though the word "IRB" is well known to bring fear even to hardened MBAs).

@pluralistic in the anime "Psycho-Pass", the police all carry weapons with an AI that tells the cop when to aim and fire, and the AI determines the amount of lethality dispensed. This gives the cop plausible deniability: they were just following orders. But it also does the same for the AI: humans are the buffer in between.

I think this is exactly what's happening with many of the scenarios you describe here.

@borisbuilds @pluralistic This is exactly the reasoning McDonnell Douglas used to bypass acceptance testing and source code access to dozens of embedded programs in the F-15, 40-60 years ago. “It’s firmware.”

I don’t know if the USAF, in particular Warner-Robins Air Logistics Center, ever got access to that code. Engineering Change Proposal 339, which detailed every processor we *knew about*, was still in limbo when I left the USAF in 1989.

@pluralistic I love this, and also so much of your work.

But I'm also wary of life-hacking and the (late-capitalist) traps of productivity. You can do it (everything), yay! Some people really can just spit out thousands of words and have a blog and be an activist and have a kid!

Not everyone can, though. Or not methodically. I get lost on my way to my own kitchen.

See e.g. Anna Hogeland's essay on the rewards of procrastination: https://lithub.com/anna-hogeland-on-the-rewards-of-procrastination/

@pluralistic Ha! Thanks for reminding me that an alternate name for a cell phone or tablet is "distraction rectangle"

https://www.urbandictionary.com/define.php?term=Distraction%20Rectangle

@woozle Excellent bit at the end of Doctorow's New Yorker profile on content moderation:

I worry that, because of the attacker’s advantage, the people who want to break the rules are always going to be able to find ways around them, and that we’re never going to be able to make a set of rules that is comprehensive enough to forestall bad conduct. We see this all the time, right? Facebook comes up with a rule that says you can’t use racial slurs, and then racists figure out euphemisms for racial slurs. They figure out how to walk right up to the line of what’s a racial slur without being a racial slur, according to the rule book. And they can probe the defenses. They can try a bunch of different euphemisms in their alt accounts; they can see which ones get banned or blocked, and then they can pick one that they think is moderator-proof.

Meanwhile, if you’re just some normie who’s having racist invective thrown at you, you’re not doing these systematic probes—you’re just trying to live your life. And they’re sitting there trying to goad you into going over the line. And as soon as you go over the line they know chapter and verse. They know exactly what rule you’ve broken, and they complain to the mods and get you kicked off. And so you end up with committed professional trolls having the run of social media and their targets being the ones who get the brunt of bad moderation calls. Because dealing with moderation, like dealing with any system of civil justice, is a skilled, context-heavy profession. Basically, you have to be a lawyer. And, if you’re just a dude who’s trying to talk to your friends on social media, you always lose.

I think Doctorow's touching on a universal truth: that any rules-based system ultimately ends up being a sort of barristered hell. It's why content moderation is so damned context-sensitive. It's also why and how extremists on both sides of a divide can drive out moderates and give rise to a highly partisan shriekfest. Closely related to SSC's "Toxoplasma of Rage":

https://slatestarcodex.com/2014/12/17/the-toxoplasma-of-rage/

#CoryDoctorow #NewYorker #ContentModeration #Lawyering #ToxoplasmaOfRage