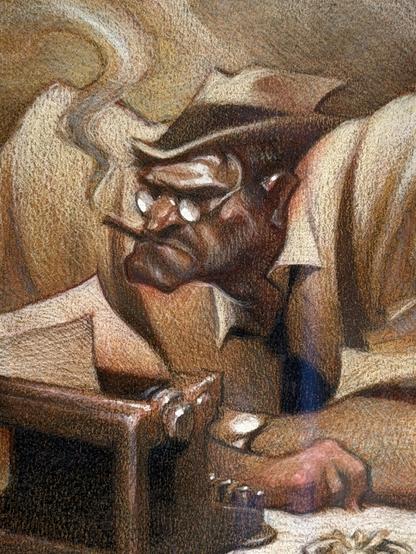

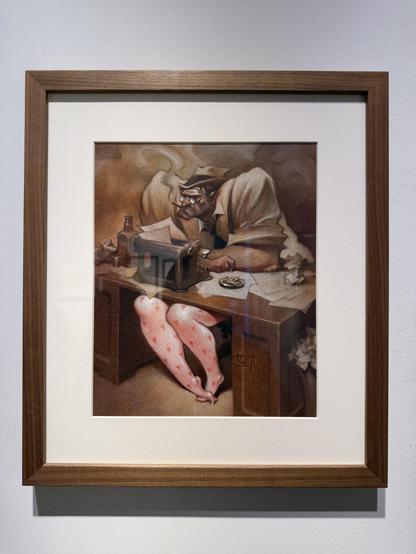

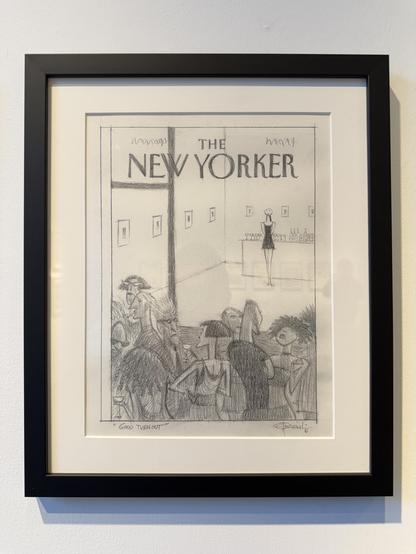

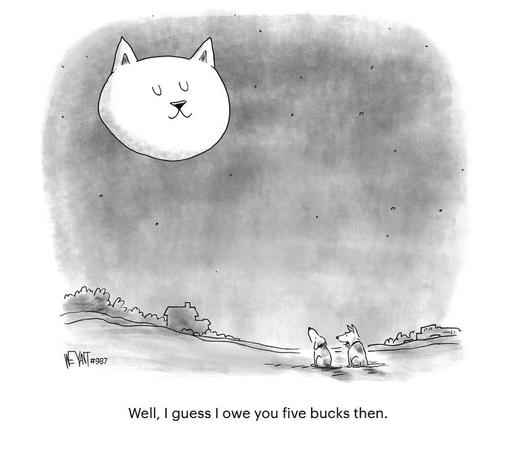

Another "can't miss" entry

#CarterGoodrich #NewYorker #Illustration

Cool Carter Goodrich exhibition at the Philippe Labaune Gallery in NYC

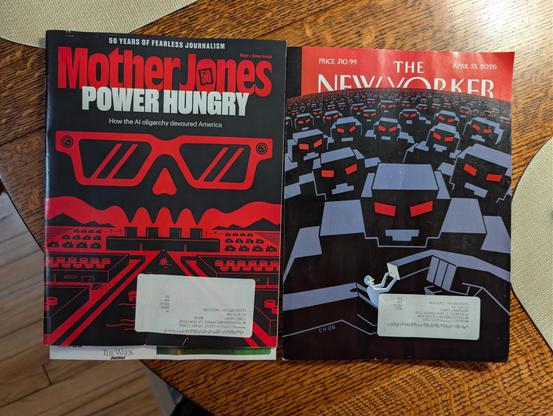

However, like many, Farrow and Marantz seem to take the so-called "existential risk" framing of AI seriously. I really wish people would stop doing that. In this case it makes the article feel incoherent in places.

This technology by itself does not pose a unique risk. It's the people, organizations, and governments around it, and their behavior with respect to it, that generate risk. Treating the technology alone as uniquely existentially risky provides cover for a wide variety of bad actors both to continue doing their work and to shrug and say "oops" if something goes catastrophically wrong or if smaller harms accumulate into intolerably large ones. The very framing provides an accountability shield, which by my read contradicts what Farrow himself suggests is needed, namely more accountability. I take this from this article, his previous work, and comments he makes in interviews (e.g., this one with Decoder).

We need to stop catastrophizing. It's thought- and action-terminating.

#AI #GenAI #GenerativeAI #OpenAI #SamAltman #RonanFarrow #AndrewMarantz #NewYorker #xrisk #ExistentialRisk #AISafety

Ronan Farrow on Sam Altman's strained relationship with the truth | Decoder

Maybe I am missing something, but is AGI really so close and so real?!

#news #newyorker #ai #agi

@eff 🤝 HOPE: Join Us This August!

Protecting #privacy and #freespeech online takes more than policy work—it takes community. Conferences like #HOPE are where that community comes together to learn, connect, and push these ideals forward. That's why EFF is proud to be at #HOPE26.

Join us at this year's #Hackers On Planet Earth, August 14-16 at the #NewYorker Hotel in #Manhattan!

#newyork #newyorkcity

https://www.eff.org/deeplinks/2026/04/eff-hope-join-us-august


il manifesto: A Rome of chaos and human warmth

Forty years have passed since Susan Levenstein, a young New Yorker who had recently graduated in medicine, arrived in Italy for an adventure that was supposed to last a few months and that continues to this day. […]

The post "Una Roma tra caos e calore umano" (A Rome of chaos and human warmth) first appeared on il manifesto.

#SusanLevenstein #NewYorker #Italy #first

https://ilmanifesto.it/odissee-burocratiche-e-una-roma-tra-caos-e-calore-umano

A Rome of chaos and human warmth | il manifesto

(Culture) Forty years have passed since Susan Levenstein, a young New Yorker who had recently graduated in medicine, arrived in Italy for an adventure that was supposed to last a few months and that continues to this day. This biographical anomaly gave rise to Dottoressa. Un medico americano a Roma (translated by Katia Bagnoli, Besa Muci, 158 pp., €17), which bears the