Brussels and the Ballot Box: New Study Denounces EU Election Influence

Great event last night by @mkz and the @slpb: the #DigitalFightClub, this time on #TrustedFlaggers and content moderation. Fascinating insights from Jessica Flint and Chan-jo Jun, and a lively discussion.

Unfortunately, I don't understand why so few people attend. These are all urgent and important topics that really do affect everyone.

Time for action! 💪🏾

Today, we filed a formal #DSA complaint against X in Ireland, together with our Romanian member @apti.

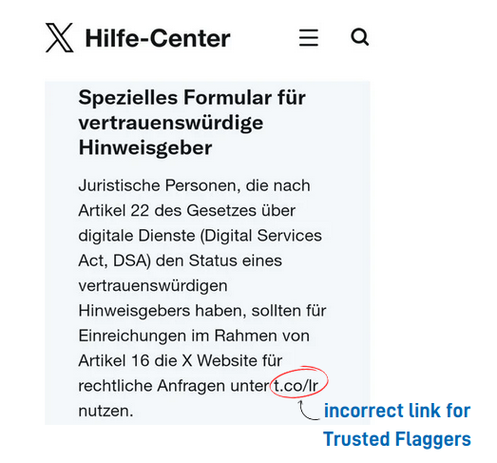

We show how X misleads #TrustedFlaggers into using a wrong, non-functional online form in all EU languages except English.

Reminder: A few weeks ago we asked #X to rectify their faulty online forms, but while they thanked us for the info, they didn't actually do anything about it 🤷🏾‍♀️

Read more here ⤵️ https://edri.org/our-work/edri-files-dsa-legal-complaint-against-x/

🗓️ One year after entry into force of the #DigitalServicesAct, #Musk's platform #X is still not complying with the law.

Together with @apti we alerted X that its 'Help' pages in most EU languages except English are misleading. They're sending #TrustedFlaggers who want to report infringing content to a submission form that does not work.

Providing a means to flag infringing content is mandatory under the #DSA. We have asked X to correct its pages by March 3, 2025 and will keep you posted on their response ⌛

Can anyone tell me why we need a thought police or a Stasi? Haven't we already had bad experiences with that?

There are perfectly clear criminal offences for when people go too far online. Why do we need censorship of free expression? Nobody can even define "hate speech" for me.

What I perceive is this: hate speech is anything that contradicts our opinion, no matter how factually the criticism is expressed.

https://digital-strategy.ec.europa.eu/en/policies/trusted-flaggers-under-dsa

#EU #DSA #SocialMedia #TrustedFlaggers #ContentModeration: "One of the most-publicized innovations brought about by the Digital Services Act (DSA or Regulation) is the ‘institutionalization’ of a regime emerged and consolidated for a decade already through voluntary programs introduced by the major online platforms: trusted flaggers. This blogpost provides an overview of the relevant provisions, procedures, and actors. It argues that, ultimately, the DSA’s much-hailed trusted flagger regime is unlikely to have groundbreaking effects on content moderation in Europe."

So, is this a sort of uninstitutionalized kind of #trustedFlaggers?

Open letter: EU countries should say no to the CSAR mass surveillance proposal - European Digital Rights (EDRi)

Today, EDRi and 81 organisations have sent an open letter to EU governments to once again urge them to say no to the CSA Regulation until it fully protects online rights, freedoms, and security.

This legislation mandates “trusted flaggers” to identify certain kinds of problematic content to platforms, who must then remove it within 24 hours.

Our results show this approach can indeed reduce the spread of harmful content. We also suggest some insights into how the rules can be implemented in the most effective way.

https://theconversation.com/can-human-moderators-ever-really-rein-in-harmful-online-content-new-research-says-yes-209882 #harmful #content #TrustedFlaggers #platforms

Hey Law & Society, saw that video:

https://www.youtube.com/watch?v=CG9pRG6cPAE

and listened to this episode of the @lawfare podcast:

https://www.lawfaremedia.org/article/lawfare-podcast-pay-attention-europes-digital-services-act

on how to regulate #LLM like #ChatGPT etc.

To me, the idea of #TrustedFlaggers seems really interesting, as it brings #watchdogs and #CivilSociety into the #Commission's formal system of #ChecksAndBalances

Is this as extraordinary as it seems to me?

@law @sociology @SandraWachter #lawandsociety #dsa #sociology