How to Run Claude Code 8x Cheaper: Plugging In Chinese Models

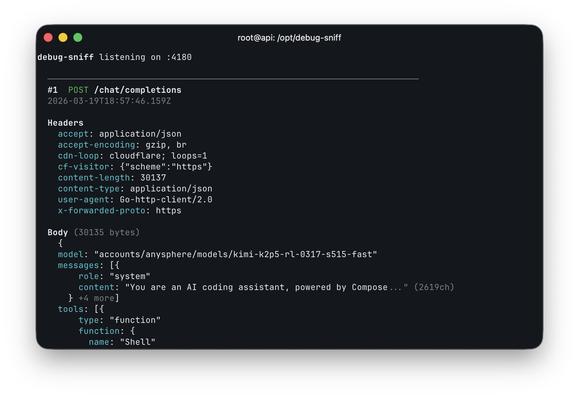

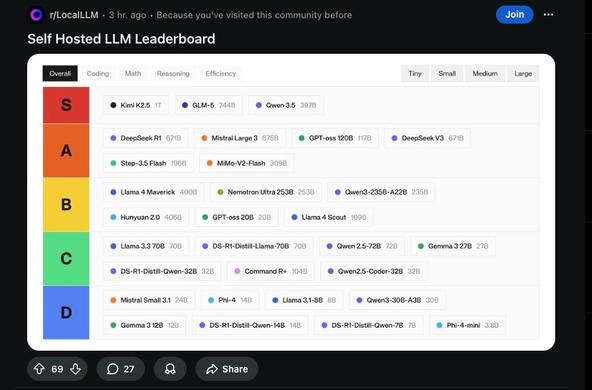

Hi everyone! Today we'll look at whether Claude Code can run on Chinese models instead of Opus, and how much that actually saves. I took two Chinese models, Kimi K2.6 from Moonshot AI and GLM-5.1 from Z AI, ran them on familiar tasks, and compared them with Opus. The tasks weren't about writing code. They were everyday ones: building a landing page, making a social media carousel, analyzing data, searching the web and comparing options, writing a simple Telegram bot, and so on. Spoiler: the results were unexpected. In some tasks the Chinese models beat Opus, in others they lost, and in some the difference came down purely to taste. At the end of the article there's a step-by-step guide to connecting either of these models to Claude Code in five minutes. It works in the terminal, in the desktop app, and in VS Code: anywhere you usually run Claude Code.

https://habr.com/ru/articles/1026760/

#claude #claude_code #kimik2 #kimik25 #openai #ai #ии_агенты