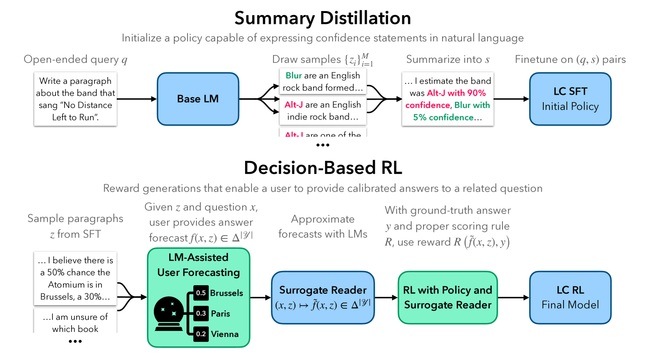

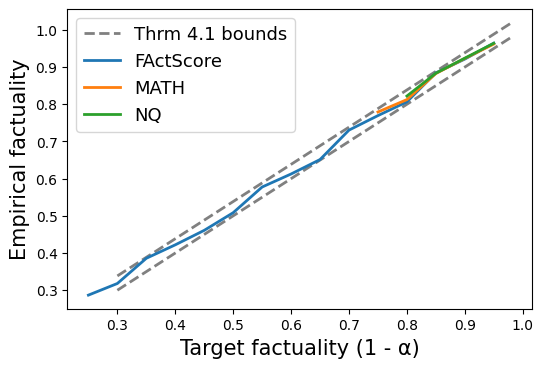

Linguistic Calibration trains Llama 2 to emit confidence phrases that let a downstream reader make calibrated forecasts on related questions. The key move is defining calibration through reader utility rather than the model's self-reported probability. Hedged text that doesn't improve the reader's forecasts earns no credit, so generic hedging can't game the objective.
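A toy sketch of why generic hedging earns nothing under a reader-based objective. It assumes the reader's forecast is scored with a log scoring rule, which this post does not specify. The answer options, the probabilities, and the names `log_score`, `prior`, `generic_hedge`, and `informative` are all made up for illustration.

```python
import math


def log_score(forecast: dict[str, float], truth: str) -> float:
    """Log scoring rule: log of the probability the reader put on the true answer."""
    return math.log(forecast[truth])


# Hypothetical reader forecasts over three candidate answers to a related question.
prior = {"A": 1 / 3, "B": 1 / 3, "C": 1 / 3}          # reader before seeing any text
generic_hedge = {"A": 1 / 3, "B": 1 / 3, "C": 1 / 3}  # after "I'm not sure": no update
informative = {"A": 0.7, "B": 0.2, "C": 0.1}          # after "most likely A, possibly B"

truth = "A"  # assumed ground-truth answer for this toy case

# Reward is the reader's improvement over their prior forecast.
gain_generic = log_score(generic_hedge, truth) - log_score(prior, truth)
gain_informative = log_score(informative, truth) - log_score(prior, truth)

print(f"generic hedge gain: {gain_generic:.3f}")
print(f"informative gain:   {gain_informative:.3f}")
```

In this toy case the vague hedge leaves the reader's forecast unchanged, so its gain is zero. The specific confidence statement moves probability toward the true answer and scores higher.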

https://benjaminhan.net/posts/20260505-linguistic-calibration/?utm_source=mastodon&utm_medium=social