Apple is developing a technique that helps AI better mimic a user's writing style

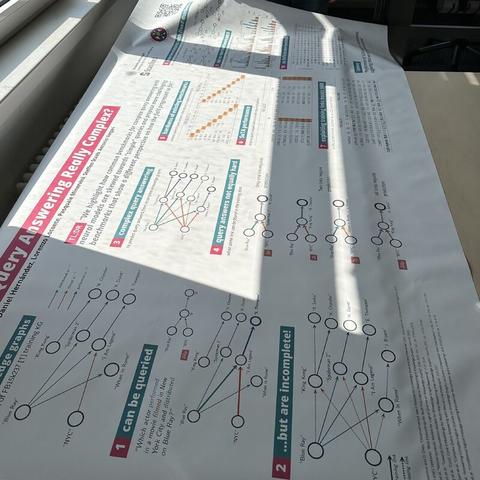

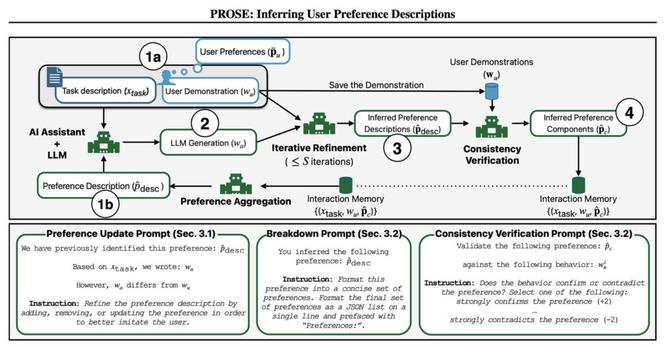

Apple has presented a new method for personalizing language models called PROSE (Preference Reasoning by Observing and Synthesizing Examples), which aims to better align an AI's writing style with a user's individual preferences.

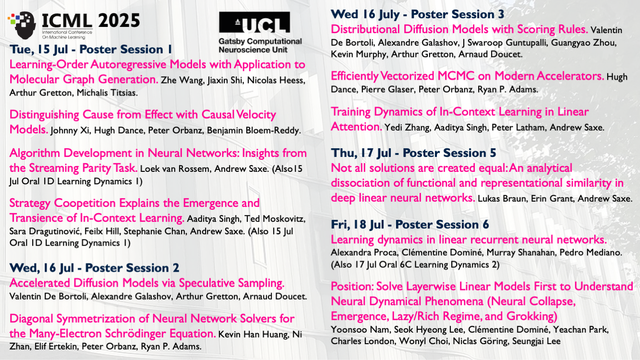

The work will be presented at the ICML 2025 conference.

How does PROSE work?

Rather than relying solely on prompts or manual editing, PROSE:

- analyzes samples of the user's writing (e.g., emails, notes),

- builds an internal profile of writing preferences (e.g., "short sentences", "irony at the start"),

- iteratively compares its own responses with the originals and adjusts,

- checks stylistic consistency across a larger number of samples so that it does not rely on single examples.
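The steps above can be sketched in Python. This is a toy illustration under stated assumptions, not Apple's implementation: the candidate preferences are hand-written checks rather than descriptions inferred by a language model, and `CANDIDATES`, `refine_profile`, `verify`, `build_profile`, `toy_generate`, and the `min_support` threshold are all hypothetical names and values chosen for this example.

```python
import re
from typing import Callable, Dict, List


def avg_sentence_words(text: str) -> float:
    """Average number of words per sentence, splitting on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(s.split()) for s in sentences) / len(sentences)


# Hypothetical style checks standing in for preferences a model would infer.
CANDIDATES: Dict[str, Callable[[str], bool]] = {
    "short sentences": lambda t: avg_sentence_words(t) <= 8,
    "no exclamation marks": lambda t: "!" not in t,
    "opens with a greeting": lambda t: t.lower().startswith(("hi", "hello", "hey")),
}


def refine_profile(generate: Callable[[List[str]], str], original: str,
                   profile: List[str], max_rounds: int = 3) -> List[str]:
    """Compare a draft with the user's original and add preferences the draft missed."""
    profile = list(profile)
    for _ in range(max_rounds):
        draft = generate(profile)
        missing = [name for name, check in CANDIDATES.items()
                   if check(original) and not check(draft) and name not in profile]
        if not missing:
            break
        profile.extend(missing)
    return profile


def verify(profile: List[str], samples: List[str], min_support: float = 0.75) -> List[str]:
    """Keep only preferences that hold on at least min_support of all samples."""
    return [name for name in profile
            if sum(CANDIDATES[name](s) for s in samples) / len(samples) >= min_support]


def build_profile(generate: Callable[[List[str]], str], samples: List[str],
                  min_support: float = 0.75) -> List[str]:
    """Refine the profile on each sample, then check consistency across all of them."""
    profile: List[str] = []
    for sample in samples:
        profile = refine_profile(generate, sample, profile)
    return verify(profile, samples, min_support)


def toy_generate(profile: List[str]) -> str:
    """Stand-in for a language model: honors only one preference."""
    text = "Hello! The report is late."
    if "no exclamation marks" in profile:
        text = text.replace("!", ".")
    return text


SAMPLES = [
    "Hi Anna. The report is late. Sorry.",
    "Hello Tom. Lunch at noon works.",
    "Thanks. See you Monday.",
    "Great news! We shipped it.",
]

print(build_profile(toy_generate, SAMPLES))  # ['no exclamation marks']
```

The final `verify` pass is what keeps a preference seen in only one or two samples from entering the profile. Here, "opens with a greeting" holds on only half of the samples and would be dropped at the 0.75 threshold.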

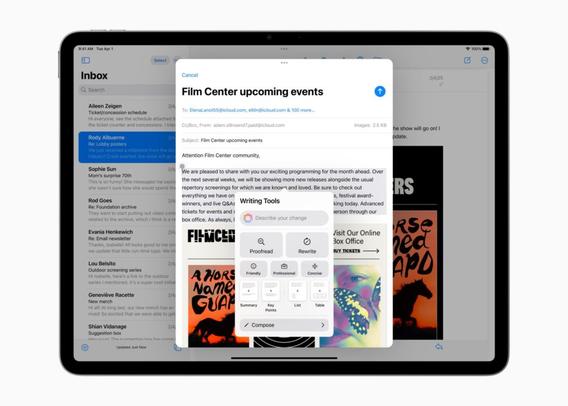

Although the study does not mention Apple products directly, PROSE fits neatly into the strategy behind Apple Intelligence, a set of intelligent features meant to be more personalized and to run locally on the device. The technique could eventually reach apps through the Foundation Models framework, which gives developers system-level access to AI.

New benchmark: PLUME

Apple has also developed PLUME, a dataset for testing the quality of style matching, based on authentic emails and notes. PROSE outperformed earlier methods such as CIPHER by as much as 33%, and when combined with classic in-context learning (ICL) it gained a further 9% in effectiveness.
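One way to picture the combination with ICL is a prompt that carries both the inferred preference descriptions and a few of the user's own examples. The sketch below is only an assumption about how such a prompt could be assembled. The `build_prompt` function and the "Request:" / "Reply:" layout are hypothetical and are not taken from the study.

```python
from typing import List, Tuple


def build_prompt(task: str, preferences: List[str],
                 examples: List[Tuple[str, str]]) -> str:
    """Assemble a prompt from preference descriptions plus few-shot examples."""
    lines: List[str] = []
    if preferences:
        # Inferred preference descriptions, stated explicitly.
        lines.append("Write in a style that follows these preferences:")
        lines.extend(f"- {p}" for p in preferences)
        lines.append("")
    # In-context examples: earlier requests paired with the user's own replies.
    for request, reply in examples:
        lines.append(f"Request: {request}")
        lines.append(f"Reply: {reply}")
        lines.append("")
    # The new task, left open for the model to complete.
    lines.append(f"Request: {task}")
    lines.append("Reply:")
    return "\n".join(lines)


prompt = build_prompt(
    task="Decline the meeting",
    preferences=["short sentences"],
    examples=[("Confirm lunch", "Noon works. See you then.")],
)
print(prompt)
```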

PROSE is part of a growing trend toward AI personalization, from preference matching to context learning. This is not only a matter of usefulness but also a strategy for keeping users in the ecosystem: the better an AI knows your style, the harder it will be to give it up.
