Embarrassing.

My animal rights library hallucinated by an AI (Pro version).

Can you guess the actual titles and authors?

How do we make LLM output more trustworthy? A short survey note on three lines of recent work covering five papers: conformal-prediction coverage guarantees, behavioral calibration of the model's prose, and sample-disagreement detection. All three pay the same multi-sample inference tax; the choice is about what you want back.

https://benjaminhan.net/posts/20260505-llm-uncertainty-survey/?utm_source=mastodon&utm_medium=social

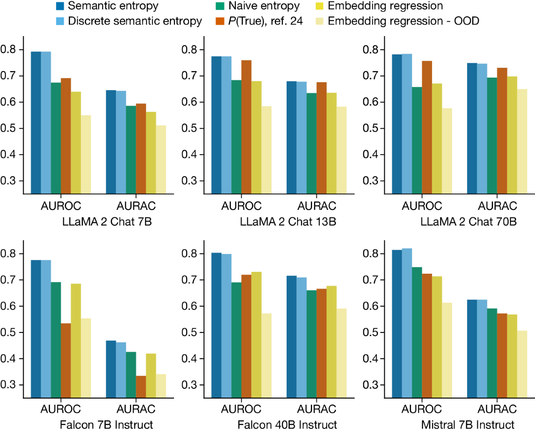

Semantic Entropy (Nature 2024) detects LLM confabulations by clustering sampled answers by meaning and computing entropy over the cluster distribution. "Paris" and "It's Paris" cluster together, so paraphrase noise doesn't inflate the signal. Limitation: it only catches hallucinations that vary across samples. If the model is consistently wrong, all samples land in one cluster, the entropy is near zero, and the detector says "confident".
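
A minimal sketch of the cluster-then-entropy idea, not the paper's implementation. The `toy_same_meaning` check is a hypothetical stand-in that compares the last word of each non-empty answer; a real system would need a proper semantic-equivalence test in its place.

```python
import math
from typing import Callable, List


def cluster_by_meaning(answers: List[str],
                       same_meaning: Callable[[str, str], bool]) -> List[List[str]]:
    """Greedily group answers; each answer joins the first cluster it matches."""
    clusters: List[List[str]] = []
    for answer in answers:
        for cluster in clusters:
            if same_meaning(cluster[0], answer):
                cluster.append(answer)
                break
        else:
            clusters.append([answer])
    return clusters


def semantic_entropy(answers: List[str],
                     same_meaning: Callable[[str, str], bool]) -> float:
    """Entropy (in nats) of the empirical distribution over meaning clusters."""
    clusters = cluster_by_meaning(answers, same_meaning)
    n = len(answers)
    return -sum((len(c) / n) * math.log(len(c) / n) for c in clusters)


def toy_same_meaning(a: str, b: str) -> bool:
    """Hypothetical stand-in: compare the last word, ignoring case and punctuation."""
    def key(s: str) -> str:
        return s.lower().strip(" .!?").split()[-1]
    return key(a) == key(b)
```

With this toy check, `["Paris", "It's Paris", "Paris."]` collapses to one cluster and zero entropy. So does `["Lyon"] * 4`, which is the consistently-wrong blind spot.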

https://benjaminhan.net/posts/20260505-semantic-entropy/?utm_source=mastodon&utm_medium=social

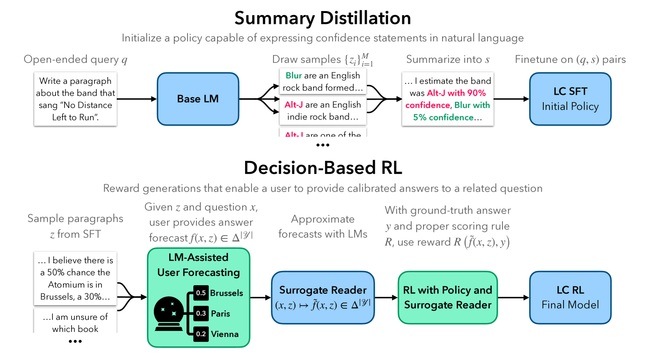

Linguistic Calibration trains Llama 2 to emit confidence phrases that let a downstream reader make calibrated forecasts on related questions. The key move is defining calibration through reader utility instead of self-reported probability. Hedged text that doesn't improve the reader's forecasts earns nothing, so generic hedging can't game the objective.
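
A toy illustration of why generic hedging earns nothing when the text is scored by the reader's forecast. The log score and all the numbers are assumptions for illustration, not the paper's exact reward.

```python
import math
from typing import Dict


def log_score(forecast: Dict[str, float], truth: str) -> float:
    """Proper scoring rule: log-probability the reader assigned to the true answer."""
    return math.log(forecast[truth])


# Hypothetical reader forecasts over four candidate answers.
no_text = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}          # reader's prior
after_generic_hedge = dict(no_text)                              # "it could be anything"
after_calibrated_text = {"A": 0.7, "B": 0.1, "C": 0.1, "D": 0.1}  # "most likely A"

baseline = log_score(no_text, "A")
gain_generic = log_score(after_generic_hedge, "A") - baseline
gain_calibrated = log_score(after_calibrated_text, "A") - baseline
```

Here `gain_generic` is zero because the reader's forecast never moved, while `gain_calibrated` is positive.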

https://benjaminhan.net/posts/20260505-linguistic-calibration/?utm_source=mastodon&utm_medium=social

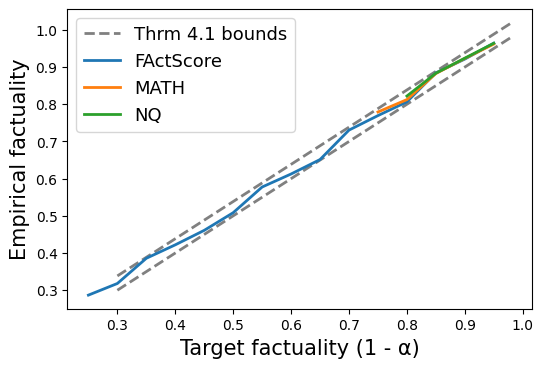

Conformal Factuality casts LM correctness as uncertainty quantification. Decompose the answer into sub-claims, score each, and drop the low-confidence ones until, with probability about 1-α, everything retained is factual. The sub-claim decomposition is doing most of the work, and the conformal machinery rides on top. Atomic-claim splitters have known failure modes, and the guarantee inherits them.
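
A simplified split-conformal sketch of the drop-until-factual step, assuming sub-claims are already extracted, scored, and (for calibration) labeled. The function names and data layout are hypothetical, and this is not the paper's code.

```python
import math
from typing import List, Tuple


def calibration_threshold(cal_examples: List[List[Tuple[float, bool]]],
                          alpha: float) -> float:
    """Conformal quantile of the per-example 'highest-scoring wrong claim'.

    Each calibration example is a list of (confidence_score, is_correct) pairs.
    Keeping only claims scoring strictly above the returned threshold would
    have left no wrong claim in about a 1 - alpha fraction of examples.
    """
    worst_wrong = sorted(
        max((score for score, ok in claims if not ok), default=float("-inf"))
        for claims in cal_examples
    )
    n = len(worst_wrong)
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    if k > n:
        return float("inf")  # too few calibration examples: retain nothing
    return worst_wrong[k - 1]


def filter_claims(claims: List[Tuple[str, float]], threshold: float) -> List[str]:
    """Keep only sub-claims whose score is strictly above the threshold."""
    return [text for text, score in claims if score > threshold]
```

The filter is only as good as its inputs: if the splitter merges a true and a false statement into one "claim", no threshold can separate them.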

https://benjaminhan.net/posts/20260505-conformal-factuality/?utm_source=mastodon&utm_medium=social

#ConformalPrediction #Calibration #Hallucination #LLMs #ICML #AI

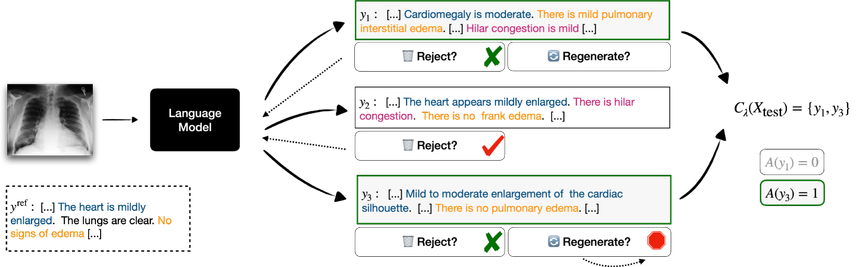

Conformal Language Modeling (CLM) adapts conformal prediction to generative LMs: sample candidates, stop when a calibrated rule fires, return a set guaranteed to contain an acceptable answer. The more interesting half is the component-level filter: per-phrase coverage, not just set-level. That's the primitive for hallucination flagging: highlight the vetted phrases, leave the rest for review.
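
A rough sketch of the sample-then-stop loop, assuming both thresholds were already calibrated on held-out data. `sample`, `quality`, `lam_quality`, and `lam_stop` are hypothetical names, and the max-quality stopping rule is just one simple choice.

```python
from typing import Callable, List


def conformal_sample(sample: Callable[[], str],
                     quality: Callable[[str], float],
                     lam_quality: float,
                     lam_stop: float,
                     max_samples: int = 20) -> List[str]:
    """Grow a candidate set until a (pre-calibrated) stopping rule fires.

    Candidates that are duplicates or score below lam_quality are rejected.
    Sampling stops once the best kept candidate reaches lam_stop, or when
    the sampling budget runs out.
    """
    kept: List[str] = []
    for _ in range(max_samples):
        candidate = sample()
        if candidate in kept or quality(candidate) < lam_quality:
            continue  # rejection step: skip duplicates and low-quality samples
        kept.append(candidate)
        if max(quality(c) for c in kept) >= lam_stop:
            break  # stopping rule fired
    return kept
```

The coverage guarantee comes entirely from how the two thresholds are calibrated, which this sketch leaves out.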