#ScribesAndMakers 5/7. When did you think of the idea for your current project? Do you remember how it came about?

So we (my wife and I) are booked to trade at a steampunk event on June 6th/7th.

In late March this year, my wife said, "The theme for the event is pirates and mermaids; we could write books." So I said, "That's a good idea."

The file was opened on March 25th, and the writing was completed at 30K words on May 4th. I'm now working on the clean-ups.

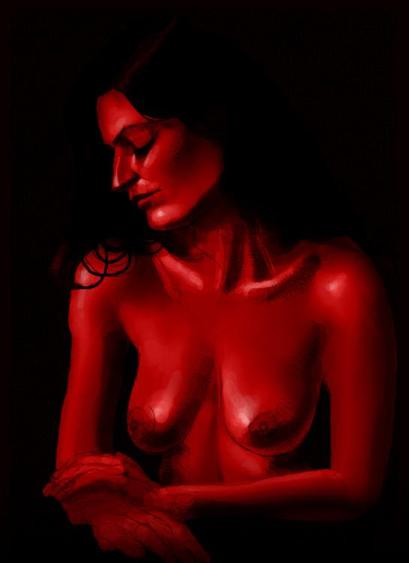

Coming soon: "The Damsel and the Mermaid"

The mermaid image is available as a wallpaper/screensaver for desktop, iPhone and Android:

https://ko-fi.com/adaddinsane/shop

#writing #writingCommunity #sapphic #romantasy #3dRender #noAI #madeWithDaz #3dArt #CGI #art #fantasyRomance #wlw #humanMade #mermay