Ordered in seconds and deliv...

The modern data stack: a streaming system built from "LEGO"

Have you heard of Kafka, MQTT, S3, Iceberg, Trino, PostgreSQL, Redis, and Flink? And how well do you know these technologies? Huge books have been written about each of them ("Kafka: The Definitive Guide" runs to about 800 pages), and new publications on the finer points appear every day. This article is about something else. Instead of engine internals and the laws of distributed systems, we will look at these technologies as LEGO bricks: what role each one plays in the architecture and how they connect to one another. This is a practical tutorial: we start with a minimal configuration and gradually assemble a complex system. You can simply read the article as an architecture overview, or you can run each configuration and study it in detail. All you need is Git, Git LFS, and Docker Compose. Everything runs in containers. Even the Java examples are built with a Docker multi-stage build.

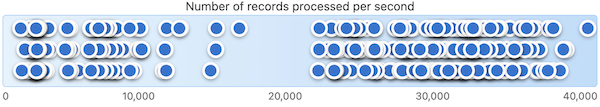

A few weeks ago, I needed to explain why it's difficult to say how many CPU cores #Flink needs to process 100k #Kafka events/sec (in the absence of any info about the data, the environment, the nature of the processing, etc.).

Two dozen graphs and one Doctor Who meme later, that turned into a blog post 😀

"How many Kafka events will Flink process per second?"

I'm often asked this. The specific question varies, but it's typically some variation of asking how quickly a single CPU of Flink processes events from a Kafka topic. Why "per CPU"? Maybe because enterprise software is typically charged per CPU? Maybe because I tend to talk to people who run ever

Volga: a real-time data processing engine for AI/ML, a counterpart to Spark and Flink written in Rust (Arrow + DataFusion)

Volga is an open-source data processing engine built as an alternative to Apache Spark and Apache Flink and aimed at the requirements of real-time AI/ML systems: consistent feature computation across online and offline modes, point-in-time correct aggregations, long sliding windows, and ML-oriented functions such as top-k and categorical aggregations. The article covers the motivation and history of the project, the system architecture and its key components, and a comparison with ML-oriented solutions (Chronon, OpenMLDB) and general-purpose streaming engines (Apache Flink, Apache Spark, Arroyo).

https://habr.com/ru/articles/1021290/

#rust #spark #flink #ai #ml #mlops #kubernetes #sql #python #streaming

Stop answering today’s questions with yesterday’s data! 📰

Join Sandon Jacobs at #ArcOfAI to learn a streams-first approach for low-latency RAG using Kafka & Flink, ensuring your AI always has fresh context.

https://www.arcofai.com/speaker/520a73e3ba72494192f204d90ab8b7a9

I've written up some #Flink thoughts: event-stream processing projects don't have to be accessible SQL *or* powerful Java. UDFs let you work in SQL, and write Java to extend what SQL can do when you need to.

I've come up with six examples of when it helps to extend Flink SQL with Java functions.

Extending Flink SQL

In this post, I’ll share examples of how writing user-defined functions (UDFs) extends what is possible using built-in Flink SQL functions alone. I'll share examples of how UDFs can: make complex SQL readable and maintainable; add algorithmic logic that SQL cannot express; reshape complex events in

I presented at our annual online #IBMTechCon event yesterday about Deploying an Apache #Flink job into production.

I gave a walk-through of what is involved in taking an event processing flow and deploying it into Kubernetes.

I tried to fit a lot in the hour!

Deploying Apache Flink jobs into Kubernetes

IBM TechCon is an annual online technical event for engineers, creators, and integration specialists. One of our sessions for this year was Deploying an Apache Flink job into production: You’ve maybe seen the low-code canvas in Event Processing or the simple expressiveness of Flink SQL, and how

creating #Kafka and #Flink demos for TechCon 2026 - our virtual event later this month

TechCon is about live and in-depth technical sessions on integration, automation, messaging, and event driven architectures - we can't get away with just slides! ;-)

https://www.ibm.com/events/reg/flow/ibm/l2ma0vmb/landing/page/landing

#Flink has largely replaced #Kafka Streams for me.

I do miss Streams... maybe I'm getting old and prone to nostalgia, or maybe I'm sad to move on because of the amount of time I spent learning Streams' quirks!

This is a better intro to how they compare than my gut gives :)

https://xebia.com/blog/making-right-choice-flink-or-kafka-streams/

🚀 Big Data meets AI—powered by Iceberg, Spark & LLMs

At #ArcOfAI, Pratik Patel shows how to build a real architecture that lets users query massive datasets with natural language—no dashboards, no SQL, just questions & insights.

https://www.arcofai.com/speaker/1c241471d7f04018a0da70efffd35b32

🎟️ Get tickets: https://arcofai.com

#ArtificialIntelligence #BigData #DataArchitecture #ApacheSpark #ApacheIceberg #LLM #GenAI #EventStreaming #Kafka #Flink #AIEngineering #TechLeadership