Smaller models. Lower bandwidth. Portable GPU execution.

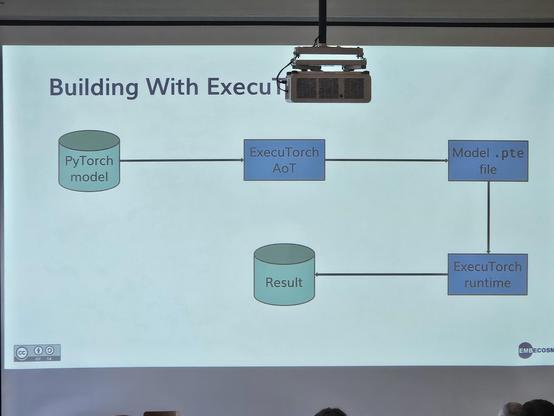

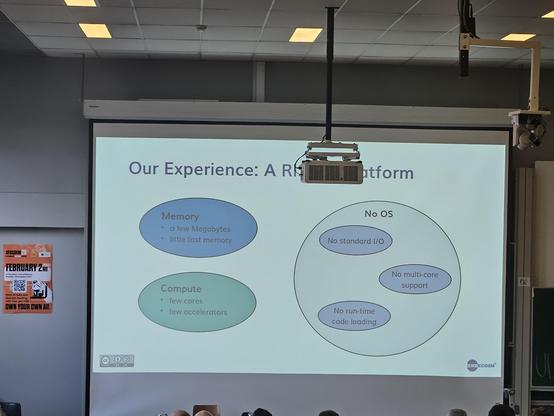

See how BitNet-style ternary quantization helps bring efficient LLM inference to ExecuTorch through its Vulkan backend, targeting edge devices where performance and memory matter.

Read the recap of our work from PyTorch Conference Europe 2026: https://www.collabora.com/news-and-blog/blog/2026/04/17/bringing-bitnet-to-executorch-via-vulkan/