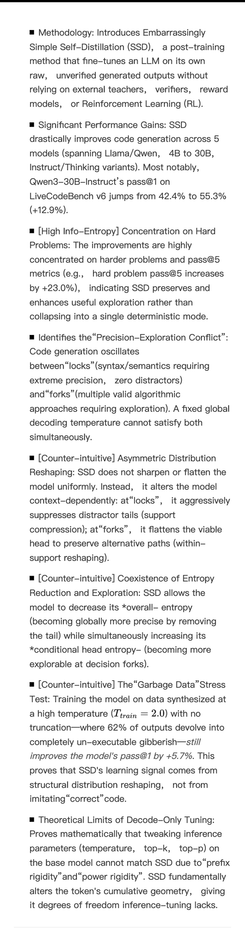

A study by Xue Jiang's group demonstrates that convergence in AI code generation is achieved through flexible natural language semantics rather than discrete logic.

The proposed method, which uses the `<think>` token to explicitly express complex sections, significantly improves benchmark performance.

https://arxiv.org/pdf/2603.29957

#ai #softwareengineering #codegeneration #aiperformance #llm