#AI #AIEthics #Tech #MachineLearning #AIHype #Society #FutureOfWork #Education #AIAgents #DigitalLiteracy #Innovation #Automation #AITools #CriticalThinking #TechIndustry #Chatbots

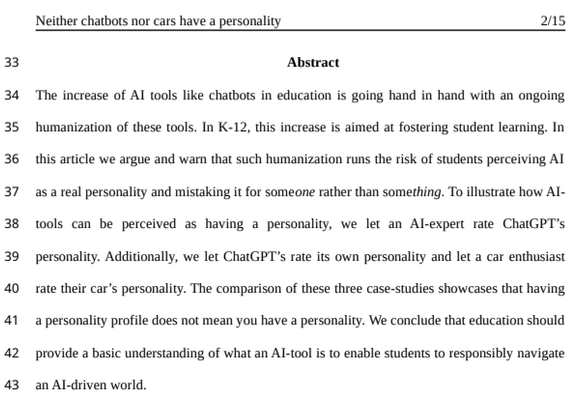

https://the-14.com/in-a-sea-of-hype-here-are-the-ai-nothingburgers-you-dont-hear-about/