"In a recent essay, Derek Thompson engages with AI as Normal Technology (AINT). He agrees with our thesis about AI’s slow labor market impacts, relying on the fact that GDP growth has so far been average, unemployment is below five percent, and even jobs that seemed vulnerable to automation show rising employment and wages. He concludes that so far, the macroeconomic picture is consistent with what we would expect from a “normal” general-purpose technology.

But when it comes to AI risks, he is far more bearish. He points to examples of cyber- and bio-risks and expresses pessimism about AI quickly becoming dangerous across many new domains. (...) Thompson writes: "I can understand a plan to treat AI as a ‘normal’ technology and let Nvidia export powerful chips to China. And I can understand a plan to treat AI as an ‘abnormal’ technology that compels the government to create extraordinary regulations that prevent private companies from selling their products and services on the grounds that they’re too dangerous" [emphasis ours]. He goes on to conclude that AI is, in fact, abnormal, implying support for extraordinary government intervention. Our essay is a response to that conclusion.

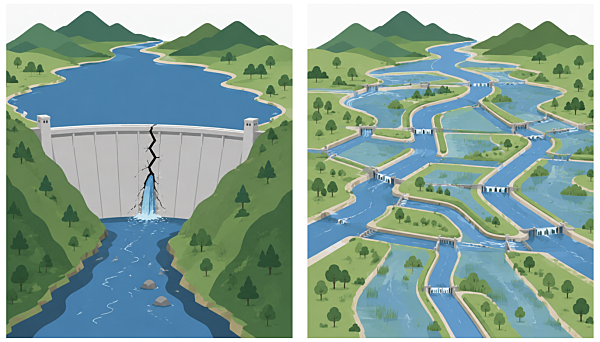

In this essay, we lay out the downsides of extraordinary government intervention in response to new technology. We discuss proposals for improving resilience that do not require such intervention. We also discuss why governments have so far been reluctant to invest in resilience. In short, resilience requires us to get better at the *normal* process of policymaking. But sclerosis in the federal government and the ease of justifying interventions on AI companies rather than society at large make extraordinary intervention seem appealing, despite its limitations."

https://knightcolumbia.org/blog/do-ai-risks-require-extraordinary-government-intervention

#AI #AISafety #AINT #NormalTechnology #AIRisk #AIRegulation