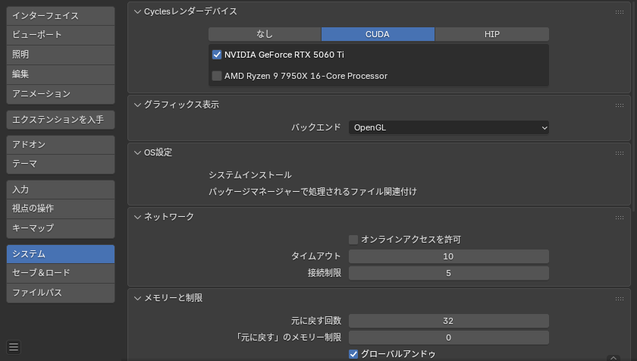

I benchmarked LLM performance on AMD Radeon AI PRO R9700 with Ollama, comparing ROCm 7.1 vs 6.4 across 8 models (Mistral, Llama, Qwen, GPT-OSS, DeepSeek, and more). Result: visible gains in prompt throughput (+87% avg) and faster responses (+11% avg). Full tables, setup, and notes in the post.

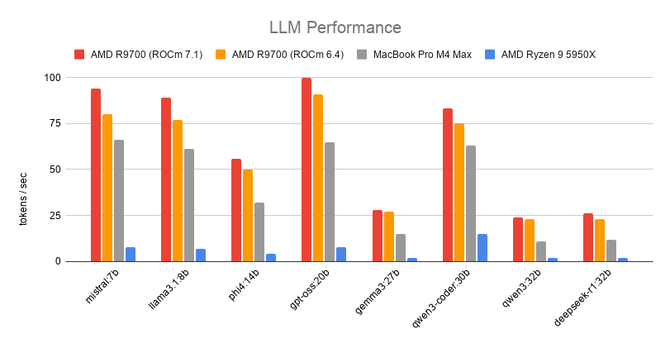

AMD ROCm 7.2.4 Released With Performance & Stability Fixes

#rocm #amd #videocard #ai

Anyone had success running Distrobox (Debian/Ubuntu VM) under Alpine Linux to get Blender with Cycles/HIP support working on an Alpine host? Something I gotta try...

#Linux #Alpine #AlpineLinux #Blender #ROCm #AMD #HIP #Distrobox #Podman #Docker

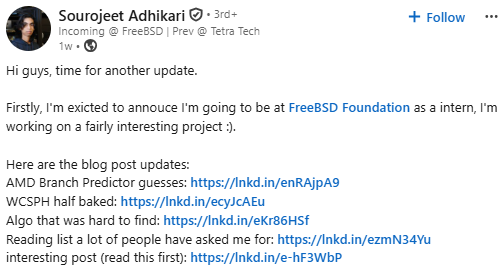

We’re excited to welcome another student contributor to the FreeBSD community.

Please join us in welcoming Sourojeet Adhikari, who recently shared that he’ll be joining the FreeBSD Foundation as an intern.

As part of his work, Sourojeet is currently exploring “Porting ROCm from Linux to FreeBSD”, an ambitious and highly technical project focused on GPU computing support.

RT @TeksEdge: 🚀 New MTP support for Strix Halo released!

More at Arint.info: https://arint.info/@Arint/116634638485149145

Arint - SEO+KI (@[email protected])

#AI #AMD #MTP #Qwen #ROCm #StrixHalo #arint_info

https://x.com/TeksEdge/status/2058728175388262761#m

Pretraining large language models with MXFP4 on Native FP4 Hardware

40% Cost Gap Developer Cloud Vs On-Prem Instinct

A 40% cost advantage and instant ROCm performance show why AMD's developer cloud beats on‑prem Instinct setups. See the data and learn how to spin up a VM in minutes.

https://cloudplatform.digital/40-percent-cost-gap-developer-cloud-vs-on-prem-ins/

#developercloud #developercloudamd #instinct #rocm #clouddevelopertools

gihyo.jp
