Sun on the wall around the windows.

Sonne an der Wand rund um die Fenster.

#Windows #Fenster #windowfriday #Fensterfreitag #Decoration #Colors #Farben #Urban #Stadt #Design #Architect #Building #noAI #keineKI #Fotografie #Photographie #Fotografia #Fotografía #Foto #Photo #Photography #Fairphone #FP4 #Dortmund #Ruhrpott

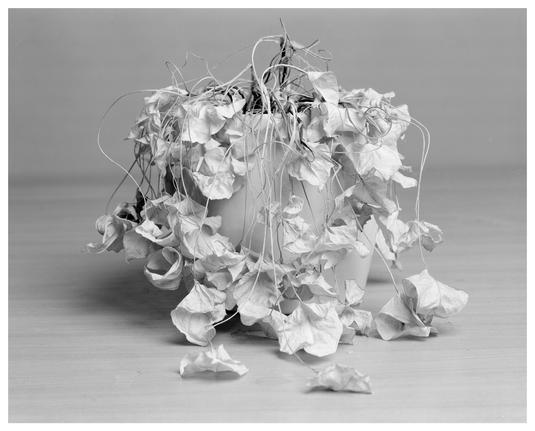

Dead plant

📷 Intrepid 4x5 mk5

🎞️ Ilford FP4+

🧪 Adox XT-3

#filmphotography #analogphotography #monochrome #4x5 #largeformat #believeinfilm #bwfilm #bwfilmphotography #Ilford #fp4 #fp4plus

The joy of train travel, art and nature side by side.

Die Freude am Zugfahren, Kunst und Natur Seite an Seite.

#Tattoo #Rail #Zug #Train #Travel #Art #Nature #window #Fenster #windowfriday #Fensterfreitag #Fotovorschlag #Colors #Farben #Transport #Public #Trainspotting #noAI #keineKI #Fotografie #Photographie #Fotografia #Fotografía #Foto #Photo #Photography #Fairphone #FP4 #Dortmund #Ruhrpott

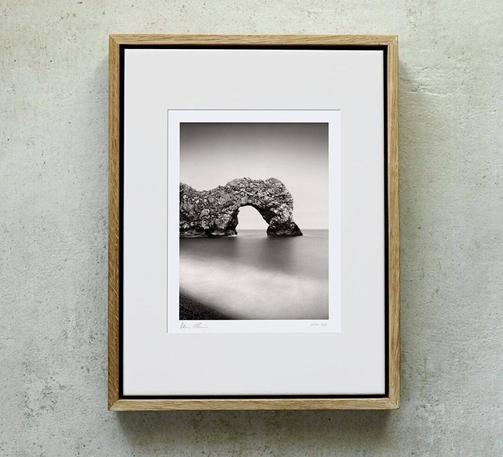

Durdle Door, Etude 1, Jurassic Coast, Dorset, England. August 2025. Ref-12018

https://www.denisolivier.com/photography/durdle-door-etude-1-jurassic-coast-dorset-england/en/12018

#jurassiccoast #ilfordfp4 #fp4 #sea #durdledoor #dorset #beach #rock #hc110 #manfrotto #heiland #england #nisi #kodakhc110 #coast

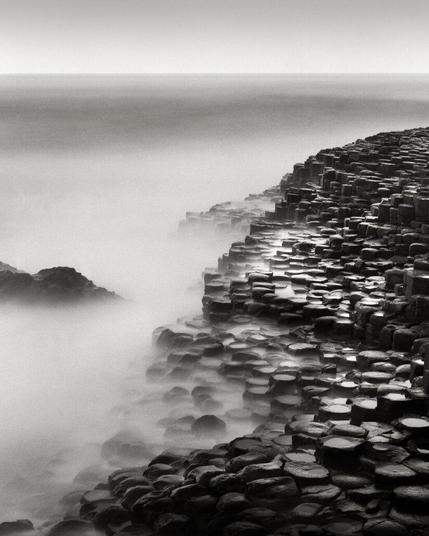

NEW! Daymark, Les Combots, France. April 2026. Ref-12108

https://www.denisolivier.com/photography/daymark-les-combots-france/en/12108

#beach #adoxxt3 #coastal #ilfordfp4 #lescombots #ducalbe #bunker #horizon #heiland #ocean #daymark #fp4 #rock #lagrandecote #atlantic

Sightseeing by boat

Bruges, 2025

📸 Pentax ME Super, SMC Pentax-M 1:2 50mm

🎞️ Ilford FP4 Plus

#brügge #brugge #bruges #belgien #belgie #belgique

#filmphotography #pentax #pentaxmesuper #ilford #fp4plus #fp4 #ilfordfp4plus #monochrome #blackandwhitephotography #photography #believeinfilm #filmisnotdead #analogphotography

Not “The Ghost Train”

Nicht „Der Geisterzug“

#Station #Bahnhof #Rail #Zug #Train #Night #Nacht #Dark #Lights #BlackandWhite #blackandwhitephotography #Schwarzweiss #Photoennoiretblanc #BW #bnw #bnwphotography #BWPhotography #Monochrome #noAI #keineKI #Fotografie #Photographie #Fotografia #Fotografía #Foto #Photo #Photography #Fairphone #FP4 #Dortmund
