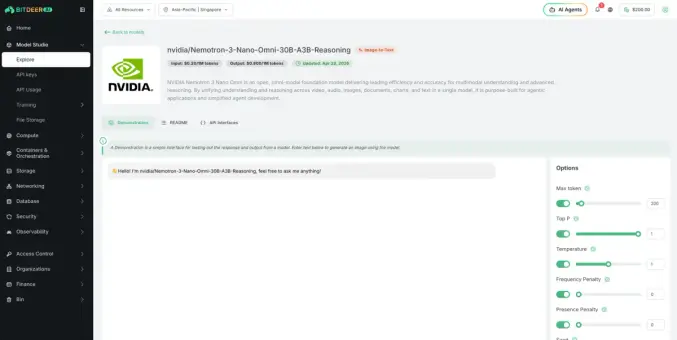

NVIDIA Unveils "Nemotron 3 Nano Omni," Merging Vision, Audio, and Language for AI Agents

NVIDIA's Nemotron 3 Nano Omni is a new AI model that merges vision, audio, and language understanding. It is aimed at AI agents, helping them respond faster and make sense of more kinds of input.

#NvidiaAI, #Nemotron3, #MultimodalAI, #OpenSourceAI, #AIAgents

https://newsletter.tf/nvidia-nemotron-3-nano-omni-ai-model-vision-audio/