A $1,999 Mac mini vs. a $4,000 Windows workstation for local AI — and the Mac wins. Here's why.

Most people running AI today are using cloud APIs: ChatGPT, Claude, Gemini. The model lives on someone else's server, and your device barely works up a sweat. That's still the dominant approach, and it works well.

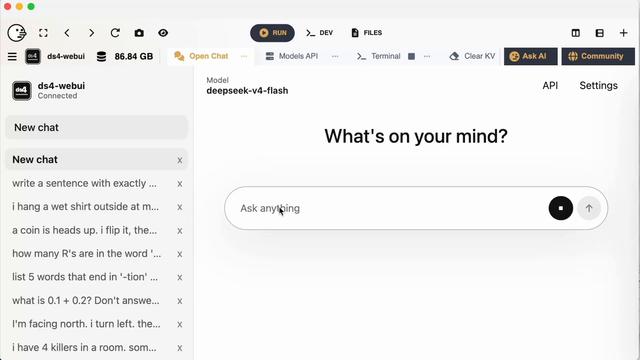

But a growing number of developers and businesses are experimenting with something different: running the language model locally, on their own hardware. Always-on, private, no per-token cost.

When you go down that path, the hardware question gets interesting fast.

On a conventional Windows workstation, the CPU and GPU have separate memory pools: system RAM on one side, the GPU's own VRAM on the other. A 70B-parameter model needs roughly 40 GB just to load its weights, even at 4-bit quantization, which is more than most consumer GPUs have. So you either cap out at smaller models or spend $6,000+ on a professional GPU card.
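Where does "~40 GB" come from? A rough rule of thumb is parameters × bits per weight ÷ 8. The sketch below assumes that rule and ignores the KV cache, activations and runtime overhead, all of which add more on top. The 4.5 bits/weight figure is an assumption standing in for a typical 4-bit format once per-block scale factors are counted (llama.cpp's Q4_0 works out to 4.5).

```python
# Rough weight-memory arithmetic for a dense LLM.
# Assumption: memory ≈ parameters × bits-per-weight / 8. This ignores the
# KV cache, activations, and runtime overhead, which all add more.

def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 10**9 bytes)."""
    # (params_billion * 1e9 weights) * bits / 8 bits-per-byte / 1e9 bytes-per-GB
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    # 16 = FP16/BF16, 8 = 8-bit quantization,
    # 4.5 = a typical 4-bit scheme including per-block scales (assumption)
    for bits in (16, 8, 4.5):
        print(f"70B @ {bits:>4} bits/weight: {weight_memory_gb(70, bits):6.1f} GB")
```

At full 16-bit precision a 70B model needs about 140 GB; at roughly 4.5 bits per weight it drops to about 39.4 GB, which is where the ~40 GB figure lands.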

Apple Silicon does it differently. The M4 Pro chip puts the CPU, GPU, and Neural Engine on the same die, sharing one unified memory pool. On a 48 GB Mac mini, the GPU cores address that memory directly: no copying data across a PCIe bus and no separate VRAM ceiling, though macOS does reserve part of the pool for the system by default.

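Result: a $1,999 Mac mini can run a 4-bit quantized Llama 3.1 70B on its GPU. A $3,500 Windows workstation with a 24 GB RTX 4090 cannot fit the model in VRAM and has to spill layers into much slower system RAM.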
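The comparison boils down to a simple fit check: do the weights fit in the memory the GPU can actually address? Here is a minimal sketch under stated assumptions. It reuses the rough parameters × bits ÷ 8 estimate with 4.5 bits per weight, and `usable_fraction` and `headroom_gb` are hypothetical knobs. One models the share of unified memory macOS lets the GPU use, and the other models KV cache and runtime overhead. Neither value comes from Apple or from the original comparison.

```python
# Hypothetical fit check: do a model's weights fit in GPU-addressable memory?
# Ignores KV cache and runtime overhead unless headroom_gb is set.

def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: params × bits / 8."""
    return params_billion * bits_per_weight / 8


def fits_on_gpu(model_gb: float, gpu_memory_gb: float,
                usable_fraction: float = 1.0, headroom_gb: float = 0.0) -> bool:
    """True if weights plus headroom fit in the usable share of GPU memory.

    usable_fraction: assumed share of the pool the GPU may use
                     (on macOS the default GPU limit is below total RAM).
    headroom_gb:     assumed allowance for KV cache and runtime overhead.
    """
    return model_gb + headroom_gb <= gpu_memory_gb * usable_fraction


llama_70b_q4 = weight_memory_gb(70, 4.5)  # about 39.4 GB (assumed 4.5 bits/weight)

machines = {
    "RTX 4090 (24 GB dedicated VRAM)": 24,
    "Mac mini M4 Pro (48 GB unified memory)": 48,
}

if __name__ == "__main__":
    for name, mem_gb in machines.items():
        ok = fits_on_gpu(llama_70b_q4, mem_gb)
        print(f"{name}: {'fits' if ok else 'does not fit'}")
```

Note how thin the margin is on the 48 GB machine. If the GPU were limited to, say, 75% of the pool (`usable_fraction=0.75`, i.e. 36 GB), the same check would fail. That is why the quantization level and macOS's GPU memory limit matter in practice.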

We wrote up the full comparison — including where Windows workstations still win (CUDA toolchains, training, high-concurrency serving) and a practical hardware decision guide for 2026.

https://www.buysellram.com/blog/why-mac-mini-is-the-surprising-frontrunner-for-local-ai-agents/

#LocalAI #MacMini #AppleSilicon #AIAgents #LLM #AIInfrastructure #OpenSource #Ollama #AIHardware #TechForBusiness